How to Scale Ad Creative Production with AI


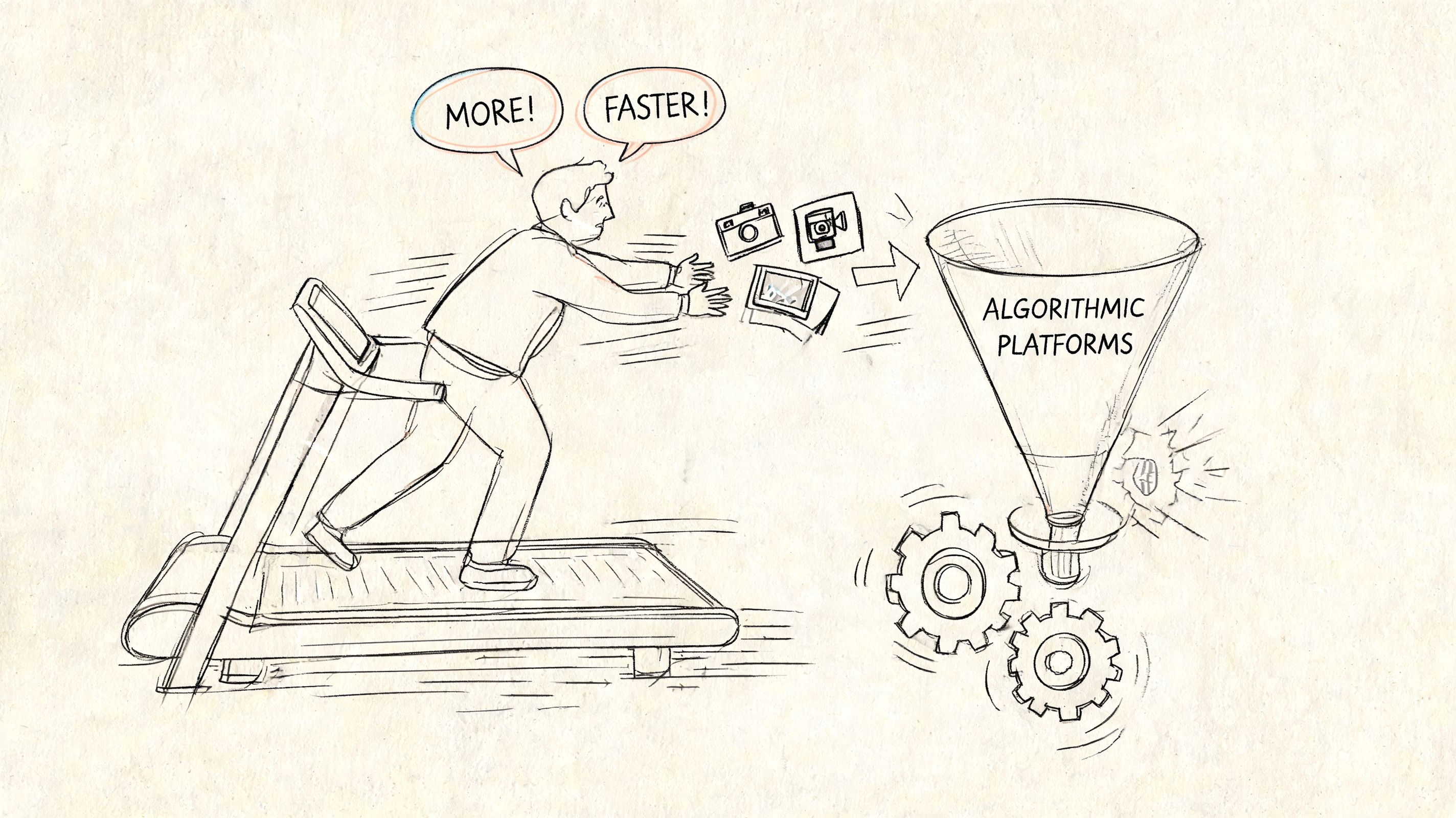

You can feel when an ad account runs out of oxygen. Spend is still there, the audience size hasn't changed, and nothing is obviously broken. But CTR softens, frequency climbs, comments get stale, and every review meeting ends with the same instruction: make more creative.

That advice is directionally right and operationally useless.

Creative teams don't have a volume problem. They have a system problem. They still produce ads like campaigns are handcrafted projects, while Meta's delivery environment now rewards teams that can launch, learn, and replace creative fast. If you're trying to figure out how to scale ad creative production, the answer isn't "hire more editors" or "ask AI for more scripts." It's to redesign the production workflow around modular assets, automated formatting, and tighter feedback loops.

The pressure is even sharper inside Meta's Andromeda-driven environment. Since the rollout in late 2025, teams have had to support much faster creative rotation. According to Madgicx's breakdown of ad creative scaling challenges, agencies report 2-3x longer setup times when they lack specialized platforms built for direct Ads Manager pushes, AI-powered asset banks, and bulk editing for real-time testing. That's the part generic creative advice misses. Scaling isn't only about making more ads. It's about making more usable ads that can move through Meta's workflow without friction.

The New Rules of Creative Velocity

Most UA managers are stuck in the same loop. One ad wins, spend scales, performance degrades, and the team scrambles to brief "three new concepts" that won't be ready until the current winner is already tired. That lag is what kills momentum.

The fix is creative velocity. Not just output. Not just variety. Velocity means your team can turn performance signals into new variations quickly enough to keep the account learning before fatigue takes over.

Volume only helps if learning speed improves

A lot of teams hear "scale creative" and respond with random volume. More scripts, more edits, more raw exports. That usually creates review debt, naming chaos, and a folder full of near-duplicates nobody can analyze properly.

The stronger model is systematic production. Agencies using template libraries, batch processing, AI-assisted copywriting, and automated asset generation have achieved a 4-5x increase in ad creative production volume, moving from a manual baseline of 8-12 high-quality ads per week to 40-60 variants weekly per team, while creative hit rates improved from 15-20% to 40-50% with the infrastructure shift, according to Ryze's analysis of scaled agency production.

That matters because the advantage isn't the raw count. It's the shorter distance between a market signal and the next test.

Practical rule: Stop asking, "How do we find the next winner?" Ask, "How fast can we turn this winner into ten disciplined follow-ups?"

Why Andromeda changes the operating model

Meta's current environment puts more pressure on freshness and rotation. Teams that still build every ad as a one-off object can't keep up, especially when production, resizing, approvals, and upload setup all live in separate tools.

That's why I treat creative like a pipeline, not a portfolio. Every launched ad should create inputs for the next batch. The winning hook becomes a category. The body becomes a reusable proof block. The CTA becomes a testable closing asset. This is also where strong content repurposing strategies become operationally useful, because they force teams to think in reusable source material instead of isolated deliverables.

A healthy creative engine doesn't chase novelty for its own sake. It builds enough speed to replace fatigue with iteration.

Build Your Modular Creative Framework

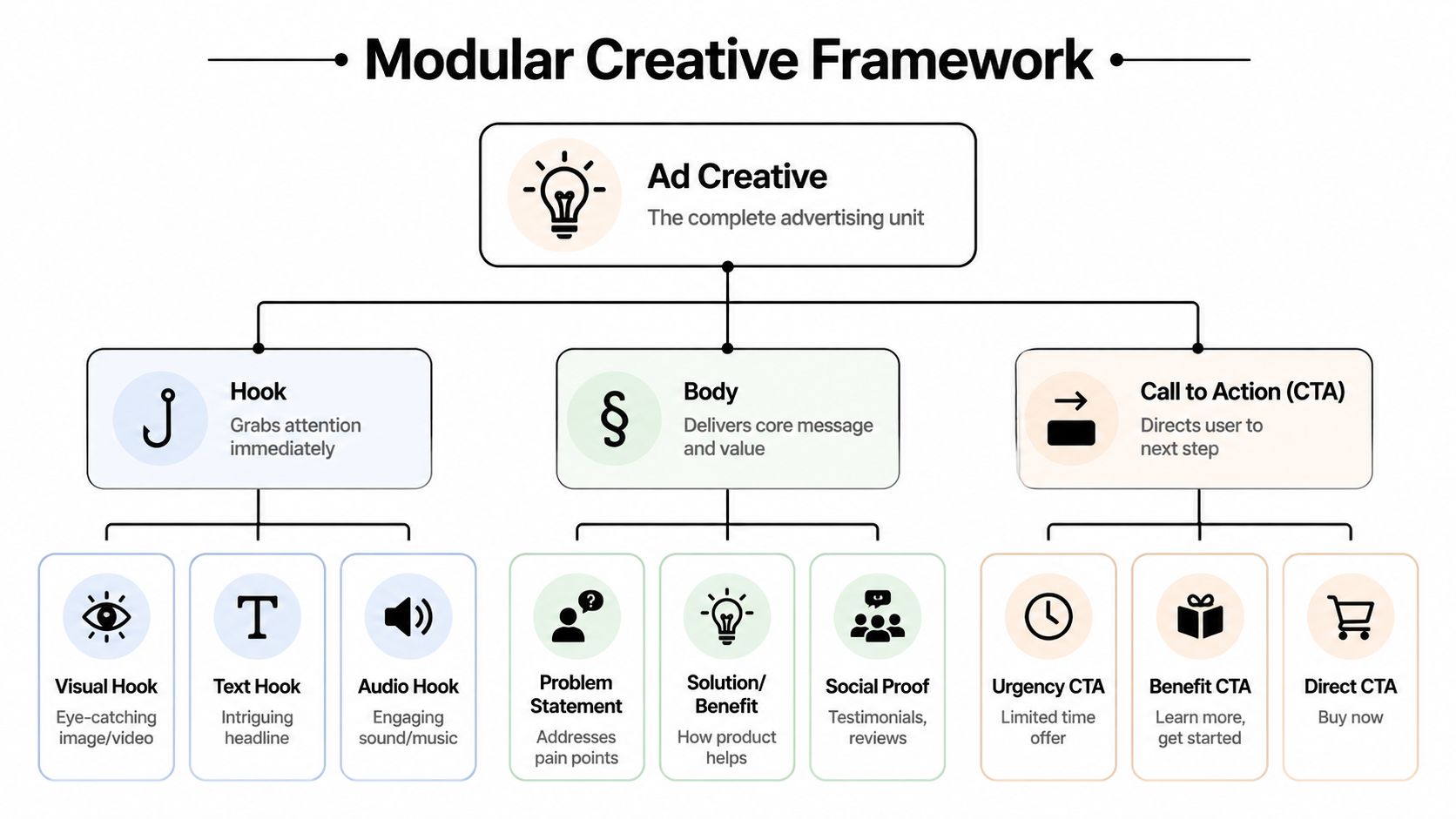

Teams usually understand the Hook, Body, CTA model at a script level. That's fine for writing. It doesn't solve scaling. To scale, you need to treat those parts as swappable production units.

Think of the ad less like a finished film and more like a kit. If the opening line works with multiple proof sections, and the same offer can close different story structures, you stop rebuilding from scratch every time.

Build component libraries, not isolated ads

A modular framework starts with three libraries:

- Hook library: First-frame visuals, opening claims, text-on-screen intros, pattern interrupts, founder openers, demo cold opens, testimonial starts.

- Body library: Product demos, feature explanations, before-and-after sequences, social proof clips, objection handling, use-case scenes, offer framing.

- CTA library: Hard sell endings, soft education closes, urgency angles, discount overlays, free trial prompts, app install pushes.

The important part is tagging each component by function, not just by filename. "UGC_03.mp4" is useless. "Hook_problem_agitation_vertical_creatorA" is usable.
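A naming rule like this is easier to enforce when it is generated and parsed by code rather than typed by hand. The sketch below is one possible approach, not a standard: the field order (role, angle, aspect, creator) and the double-underscore separator are assumptions chosen so that a field such as `problem_agitation` can keep its own underscores.

```python
from dataclasses import dataclass

# Hypothetical field order for component names; adjust to your own taxonomy.
FIELDS = ("role", "angle", "aspect", "creator")


@dataclass(frozen=True)
class ComponentName:
    role: str      # e.g. "Hook", "Body", "CTA"
    angle: str     # e.g. "problem_agitation"
    aspect: str    # e.g. "vertical"
    creator: str   # e.g. "creatorA"

    def to_filename(self) -> str:
        # A double underscore separates fields, so single underscores
        # can still appear inside a field value.
        return "__".join(getattr(self, f) for f in FIELDS)

    @classmethod
    def parse(cls, name: str) -> "ComponentName":
        parts = name.split("__")
        if len(parts) != len(FIELDS):
            raise ValueError(
                f"expected {len(FIELDS)} fields, got {len(parts)}: {name!r}"
            )
        return cls(*parts)


name = ComponentName("Hook", "problem_agitation", "vertical", "creatorA")
print(name.to_filename())
```

Because `parse` rejects anything that doesn't match the pattern, a file like "UGC_03" fails loudly at intake instead of quietly entering the library.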

A single raw shoot should produce parts that can survive outside the original edit. If your editor exports only final ads, you've trapped value inside timelines.

Modular production changes the economics

This framework is more than a creative preference. It's a business necessity. With modular production, where hooks, bodies, and CTAs are recombined, the marginal cost of each additional ad variation drops significantly. Nest Commerce gives a useful operating example: if a team needs 5 winning ads a week and works from a 20% hit rate, it needs 25 total ad creative variants per week (5 divided by 0.20) to maintain pipeline velocity. That volume is only economically realistic through a modular system, as explained in Nest Commerce's guide to scaling ad creative production.
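The pipeline math in that example is simple enough to keep in a planning script. This is a minimal sketch; the function name `variants_needed` is hypothetical, and it assumes the hit rate stays roughly constant as volume grows, which may not hold in practice.

```python
import math


def variants_needed(target_winners: int, hit_rate: float) -> int:
    """Weekly variants required to expect `target_winners` winning ads."""
    if not 0 < hit_rate <= 1:
        raise ValueError("hit_rate must be in (0, 1]")
    # Round before taking the ceiling so floating-point noise
    # cannot push an exact answer up by one.
    return math.ceil(round(target_winners / hit_rate, 9))


print(variants_needed(5, 0.20))  # 25, matching the example above
```

Rerun it whenever the measured hit rate changes. A drop from 20% to 15% raises the weekly requirement from 25 to 34 variants.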

That should change how you brief shoots and creators.

| Creative approach | What happens in production | What happens later |
|---|---|---|
| One-off ad build | Team makes a finished asset around one concept | Iteration is slow because every change needs a new edit path |
| Modular build | Team captures hooks, proof blocks, CTAs, and alternate overlays as separate units | New variants can be assembled quickly without new shoots |
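To see why a modular build changes the numbers, it helps to count what a small library can produce. The sketch below uses Python's `itertools.product` to enumerate every hook, body, and CTA combination. The component names are placeholders, and in practice a strategist would filter out pairings that don't make sense together.

```python
from itertools import product

# Placeholder component IDs; real libraries would hold tagged clips.
hooks = ["hook_problem", "hook_demo", "hook_testimonial"]
bodies = ["body_proof", "body_feature"]
ctas = ["cta_trial", "cta_discount"]

# Every combination of one hook, one body, and one CTA.
variants = [
    {"hook": h, "body": b, "cta": c}
    for h, b, c in product(hooks, bodies, ctas)
]

print(len(variants))  # 3 * 2 * 2 = 12
```

Seven captured components yield twelve candidate ads. Adding a single new hook adds four more without another shoot.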

What to standardize and what to leave loose

Don't over-template the ad itself. Template the infrastructure.

Standardize these:

- Component naming rules so editors and buyers can find the right version.

- Approved CTA formats so legal and brand review don't restart every cycle.

- Hook categories so testing can compare like with like.

- Aspect-ratio safe zones so assets survive reformatting later.

Leave these loose:

- Creator delivery style

- Visual texture

- Native platform cues

- Tone variations across audiences

If every variation looks mechanically different but emotionally identical, the system will still stall.

For teams building video-first workflows, a dedicated modular video ad framework is useful because it forces production planning around reusable blocks instead of final edits. Teams often overlook that mental shift.

Systemize Asset Ingestion and Management

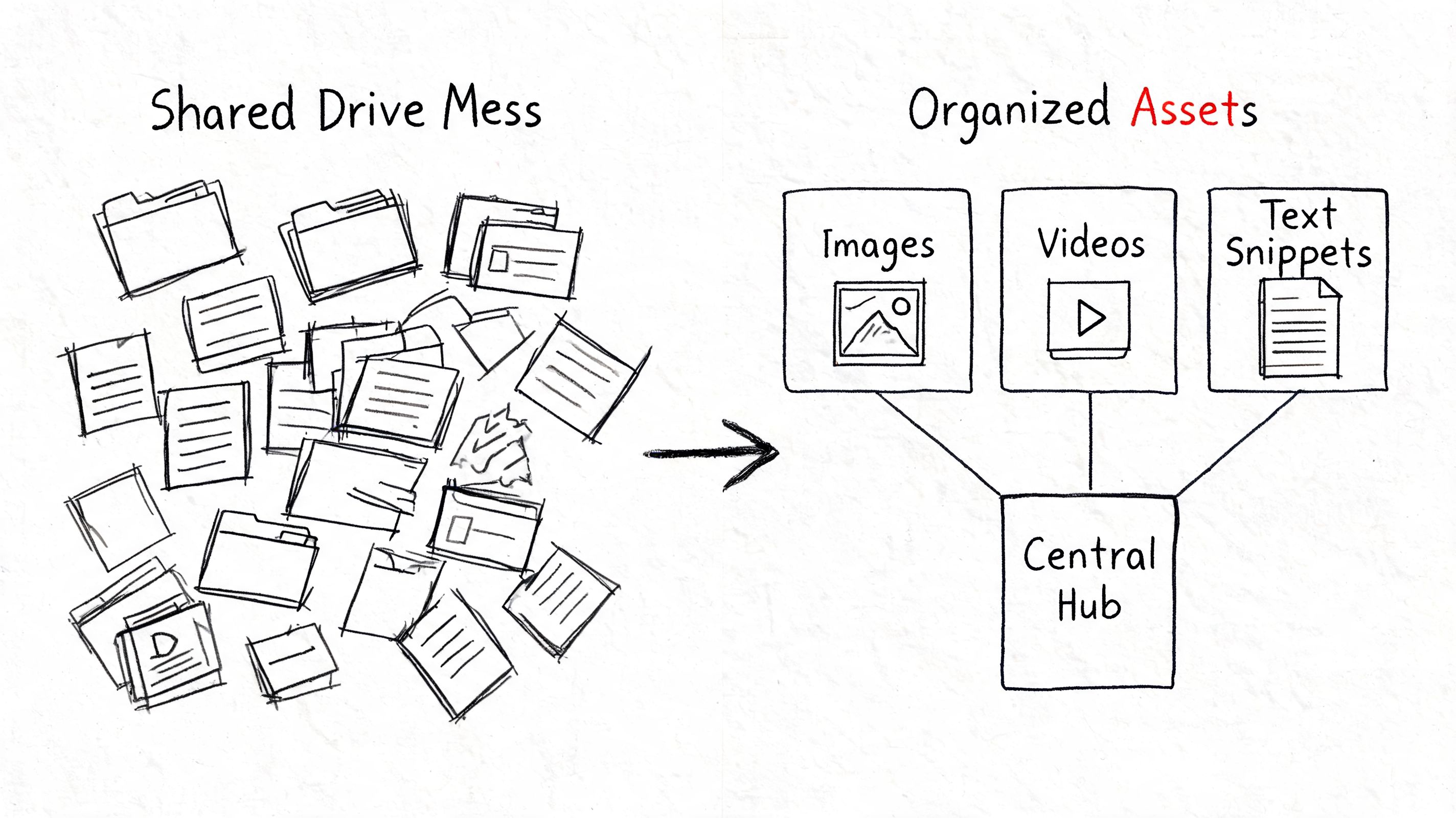

Creative scale usually breaks in a boring place. Not strategy. Not editing. Not AI. It breaks when nobody can find the right clip, confirm the right transcript, or tell which version is approved for paid use.

Folder chaos kills speed. Shared drives with loose naming conventions create a hidden tax on every iteration cycle. The editor wastes time searching. The strategist briefs from memory. The buyer launches the wrong cut. Then the team says production is the bottleneck, when retrieval is the actual bottleneck.

The asset bank has to beat the camera roll

A scalable system makes finding the right clip faster than asking for a new one. That's the standard.

I like an ingestion workflow built around four moments:

- Raw intake: Every source asset enters one place immediately after creation.

- Functional tagging: Clips get tagged by scene type, product angle, creator, audience, claim category, and format suitability.

- Transcript and visual search readiness: Spoken lines, on-screen text, and scene content become searchable.

- Usage status: Teams can see whether a clip is raw, approved, active, deprecated, or restricted.

That last part matters more than many realize. Good footage is not the same thing as deployable footage.
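One way to make the difference concrete is to store usage status on every asset record and filter on it before anything reaches an editor. This is a sketch under assumptions: the `Asset` shape is hypothetical, and treating only "approved" and "active" clips as deployable is my reading of the statuses above, not a rule from any particular tool.

```python
from dataclasses import dataclass, field
from enum import Enum


class UsageStatus(Enum):
    RAW = "raw"
    APPROVED = "approved"
    ACTIVE = "active"
    DEPRECATED = "deprecated"
    RESTRICTED = "restricted"


# Assumption: only approved or currently active clips may go into paid ads.
DEPLOYABLE = {UsageStatus.APPROVED, UsageStatus.ACTIVE}


@dataclass
class Asset:
    asset_id: str
    status: UsageStatus
    tags: set[str] = field(default_factory=set)


def deployable(assets: list[Asset], required_tags: set[str]) -> list[Asset]:
    """Return assets that are cleared for paid use and carry every required tag."""
    return [
        a for a in assets
        if a.status in DEPLOYABLE and required_tags <= a.tags
    ]
```

A search for `{"hook", "vertical"}` then returns only clips the team is allowed to ship, so raw or restricted footage never reaches a timeline by accident.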

Naming conventions should serve analysis

Most file structures are built for storage. Scaled paid social needs structures built for retrieval and testing.

A useful naming system answers these questions immediately:

| Question | Example tag type |
|---|---|
| What role does this asset play? | Hook, body, CTA, bridge, proof |
| What angle does it represent? | Value, speed, credibility, problem-aware |
| What format is it safe for? | Vertical, square, feed-safe, sound-off |
| Who is in it? | Creator ID, founder, customer, motion graphic |
| Can it be reused? | Evergreen, seasonal, promo-specific |

This doesn't need to become bureaucratic. It does need to become consistent.

AI should reduce search time, not add novelty

A lot of teams adopt AI generation before they fix AI-assisted organization. That's backwards. Before you make new assets at scale, make the existing library searchable.

The practical features that matter are simple:

- Auto-tagged scenes so product shots, demos, testimonials, and talking-head clips are easy to pull

- Searchable transcripts so you can find exact phrases or objections fast

- Visual search so the team can locate similar clips without knowing filenames

- Centralized approvals so nobody cuts from unapproved material

The best asset system doesn't feel like documentation. It feels like instant recall.
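Searchable transcripts don't require anything exotic to prototype. The sketch below assumes transcripts already exist as plain text keyed by clip ID (the IDs and lines are made up) and does a simple case-insensitive phrase match. A production system would more likely use a proper search index.

```python
def search_transcripts(transcripts: dict[str, str], phrase: str) -> list[str]:
    """Return the IDs of clips whose transcript contains the phrase."""
    needle = phrase.casefold()
    return sorted(
        clip_id
        for clip_id, text in transcripts.items()
        if needle in text.casefold()
    )


# Illustrative data only.
transcripts = {
    "clip_014": "I was skeptical at first, but setup took five minutes.",
    "clip_022": "Too expensive? It paid for itself in a week.",
    "clip_031": "Setup is the part everyone worries about.",
}

print(search_transcripts(transcripts, "setup"))  # ['clip_014', 'clip_031']
```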

If you're formalizing this layer, these asset management best practices for creative teams are worth using because they focus on retrieval discipline, not just storage hygiene.

In Andromeda-heavy workflows, this is even more important. When rotation pressure rises, the team can't afford to re-open old projects just to rebuild usable ingredients. The asset bank has to expose them on demand.

Automate Generation and Cross-Platform Formatting

Monday morning, the buyer asks for six fresh Meta variants by lunch. The core idea is already approved. The delay comes from resizing, overlay swaps, caption cleanup, naming, export settings, and re-uploading the same ad into three placements. That is where creative throughput breaks.

Manual resizing is one of the highest-cost low-value tasks in paid social. It keeps editors busy, but it does not improve the concept, the message, or the test.

The bottleneck has moved from editing to adaptation

Once the team has usable modules and a searchable asset bank, the next constraint is production handling. Editors are no longer stuck finding ingredients. They are stuck turning one approved concept into every version required for delivery.

That work usually looks like this:

- applying text overlays one ad at a time

- rebuilding captions manually

- exporting the same structure into multiple aspect ratios

- swapping CTAs individually

- creating platform-specific versions from scratch

None of those steps sharpen the idea. They slow launch speed and create more room for QA mistakes.

In Meta's current Andromeda-driven setup, repetitive formatting work creates direct operational drag. The platform rewards faster rotation, broader variation, and cleaner feedback loops. If the team still relies on hand-built exports and manual trafficking, production capacity gets capped long before the testing strategy does.

What to automate first

Start with the transforms around the creative decision, not the decision itself.

- Aspect-ratio adaptation: Build one master version, then auto-format for 9:16, 1:1, and feed-safe outputs. The goal is controlled adaptation, not three separate edits.

- Bulk text overlays: Hooks, offers, and proof points change often. Overlay systems should let the team update message layers in batches without reopening every cut.

- Voiceover and caption generation: This helps when you need localized variants, faster creator-style iterations, or sound-off coverage without another recording round.

- Direct deployment workflows: Exports, downloads, re-uploads, and manual renaming create avoidable handoff errors. In high-volume Meta workflows, those small frictions pile up fast.
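Aspect-ratio adaptation is the easiest of these to reason about in code. The sketch below computes the largest centered crop for a target ratio from one master frame. It assumes the important content sits in the center of the frame, which is why aspect-ratio safe zones matter at the shoot, and it uses 4:5 as a stand-in for "feed-safe", which is my assumption rather than a ratio the workflow above specifies.

```python
def center_crop_box(
    width: int, height: int, ratio_w: int, ratio_h: int
) -> tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of the largest centered crop
    with aspect ratio ratio_w:ratio_h inside a width x height frame."""
    if width * ratio_h > height * ratio_w:
        # Source is wider than the target: keep full height, trim the sides.
        crop_w, crop_h = height * ratio_w // ratio_h, height
    else:
        # Source is taller than (or equal to) the target: keep full width.
        crop_w, crop_h = width, width * ratio_h // ratio_w
    left = (width - crop_w) // 2
    top = (height - crop_h) // 2
    return left, top, left + crop_w, top + crop_h


# One 1920x1080 master, three delivery ratios.
for name, (rw, rh) in {"9:16": (9, 16), "1:1": (1, 1), "4:5": (4, 5)}.items():
    print(name, center_crop_box(1920, 1080, rw, rh))
```

The resulting boxes can be passed to whatever rendering tool the team already uses. The point is that the crop decision is computed once per ratio instead of being redone by hand in every project.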

A practical reference is this automatic video maker workflow for ad production. It shows the type of system that removes one-off rendering and formatting tasks from the editor's queue.

For teams evaluating broader short-form automation, this look at the DailyShorts AI video generator is useful context for reducing repetitive production work across channels.

Integration matters more than feature count

A lot of tools can spit out variants. Fewer can pass those variants into the buying workflow with usable filenames, correct metadata, placement-safe formatting, and a clean path into Ads Manager.

That trade-off matters more in Andromeda workflows than it did in older Meta setups. In 2026, the problem is not generating another asset. The problem is getting approved variations into market fast enough, with enough consistency, that the buying team can test and learn without production chaos.

That is why connected systems outperform stacked point tools here. If one tool handles generation, another handles captions, a third handles resizing, and a human handles final upload, the process slows down at every transfer point. Platforms such as Sovran are relevant because they combine asset banking, bulk overlays, format adaptation, and direct Meta deployment in a single production path.

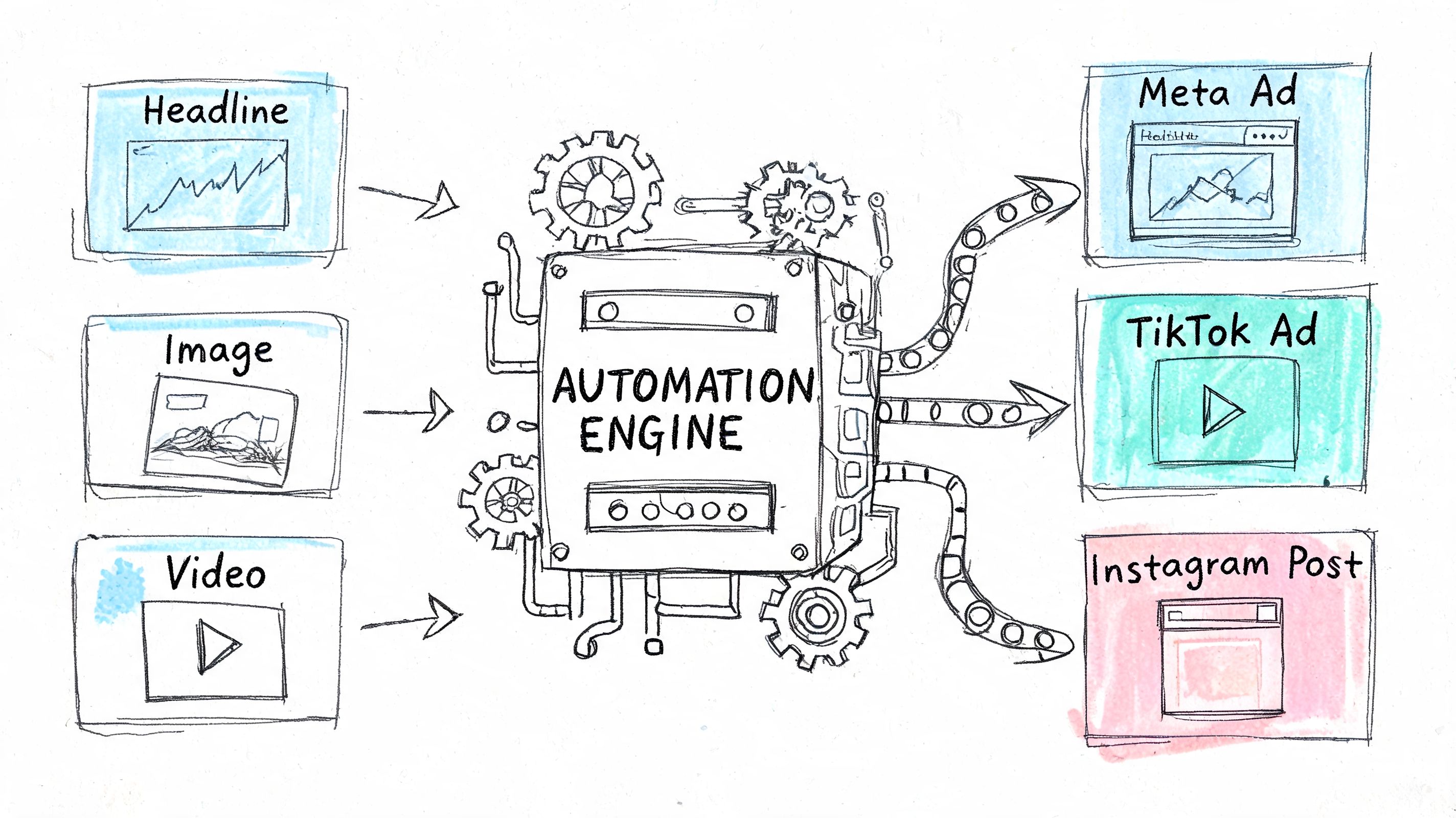


If every new variant needs human handling at the formatting layer, scaled testing breaks. Automation keeps output aligned with Meta's current production tempo.

Establish a High-Velocity Testing Workflow

More output doesn't help if the team can't explain why an ad won. Creative production and creative learning have to run as one system.

The mistake I see most often is uncontrolled testing. A team launches a batch with different hooks, different bodies, different offers, different creators, and different landing page contexts. Then they try to assign meaning to the result. You can't build a playbook from blended variables.

Test one variable at a time

Expert teams use structured multivariate testing to isolate variables. A common framework includes Hook Testing measured by CTR and thumb-stop rate, Message-Matrix Mapping measured by conversion rate and ROAS, and Creative Treatment Comparison measured by CPI and CPA. This workflow depends on a production pace that supports weekly or biweekly refresh cycles with 5-7 active creatives, as outlined in Motion's guide to scaling successful ad campaigns.

That framework works because each test has a job.

| Test type | What changes | What stays fixed | Primary read |
|---|---|---|---|
| Hook test | Opening visual or first line | Body, CTA, offer | CTR, thumb-stop |
| Message test | Core value proposition or objection handling | Hook style, CTA, format | CVR, ROAS |
| Treatment test | UGC vs polished, static vs video, vertical vs square | Message and offer | CPI, CPA |

A simple example makes this practical. If you have one strong body section and one CTA, create three versions that only change the first few seconds. If version A wins attention but doesn't convert, the hook did its job and the message needs work. If version C gets weaker attention but stronger conversion quality, you may have a narrower but better-aligned angle.
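A quick guard against blended variables is to check a batch before it launches. The sketch below assumes each variant is described as a mapping of component slot to component ID (the slot names and IDs are hypothetical) and confirms that only the intended slot differs across the batch.

```python
def changed_fields(variants: list[dict[str, str]]) -> set[str]:
    """Return the component slots whose values differ across the batch."""
    keys = set().union(*(v.keys() for v in variants))
    return {k for k in keys if len({v.get(k) for v in variants}) > 1}


def is_isolated_test(variants: list[dict[str, str]], variable: str) -> bool:
    """True when the batch changes exactly the named slot and nothing else."""
    return changed_fields(variants) == {variable}


# Three versions that only change the first few seconds.
hook_test = [
    {"hook": "hook_A", "body": "body_proof", "cta": "cta_trial"},
    {"hook": "hook_B", "body": "body_proof", "cta": "cta_trial"},
    {"hook": "hook_C", "body": "body_proof", "cta": "cta_trial"},
]
print(is_isolated_test(hook_test, "hook"))  # True
```

If an editor swaps a CTA in one variant, `changed_fields` returns both `hook` and `cta`, and the batch can be fixed before spend goes behind it.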

Build the feedback loop into the workflow

High-velocity testing isn't just launch cadence. It's decision cadence.

Use a workflow like this:

- Write a hypothesis before launch. "Problem-first hook will improve attention against solution-first hook."

- Keep the variable isolated. Don't let editors add unplanned copy changes across variants.

- Review by test type. Don't compare a hook test against a treatment test and call it a pattern.

- Log the insight in plain language. "Direct pain language outperformed feature-led openers for cold traffic."

- Feed the winner forward. Build the next round from the strongest component, not the strongest full ad.

Good testing doesn't produce more ads. It produces better instructions for the next ads.

What breaks most creative testing systems

Testing fails when workflow discipline fails. Usually that shows up in one of these forms:

- Overloaded batches where too many variables change at once

- Slow refresh cycles that let fatigue set in before the next read

- Weak naming conventions that make analysis messy

- No creative memory so the team repeats old mistakes in a new visual style

One fix is to create a shared experiment log between creative and media buying. Every test should capture the hypothesis, the assets used, the variable changed, the result, and the next action. That sounds simple because it is. Few teams apply it consistently.
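The log itself can be a very small data structure. This sketch mirrors the five fields named above in a dataclass. The class name, the completeness check, and the JSON-lines output are assumptions for illustration, and a shared spreadsheet with the same columns would work just as well.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class ExperimentEntry:
    hypothesis: str
    assets: list[str]
    variable_changed: str
    result: str = ""        # filled in after the test is read
    next_action: str = ""   # filled in after the review

    def is_complete(self) -> bool:
        # An entry only counts as logged once every field is filled in.
        return all([
            self.hypothesis,
            self.assets,
            self.variable_changed,
            self.result,
            self.next_action,
        ])


def to_json_line(entry: ExperimentEntry) -> str:
    """Serialize one entry as a single JSON line for an append-only log file."""
    return json.dumps(asdict(entry), sort_keys=True)
```

The `is_complete` check is the useful part. It gives the pod a simple rule: no test is closed until the result and the next action are written down.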

Another fix is to centralize experimentation in a workflow designed for paid social, not generic project management. A purpose-built ad creative experimentation tool can help teams keep variant lineage, performance context, and next-step decisions in one place, which matters when volume increases.

Read outcomes by component, not by ego

The fastest teams don't get attached to concepts. They get attached to signals.

If a founder ad loses but the testimonial body inside it performs well across multiple edits, keep the body and retire the wrapper. If a polished brand cut underperforms but its opening frame repeatedly earns attention, extract the hook and reuse it elsewhere. That's how scaled teams compound learning instead of starting over.
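Reading by component means aggregating results across every ad that used the same block. The sketch below pools clicks and impressions per component and reports CTR. The row fields (`hook`, `body`, `clicks`, `impressions`) and the numbers are illustrative assumptions, and the same pattern applies to whichever metric fits the test type.

```python
from collections import defaultdict


def ctr_by_component(rows: list[dict], slot: str) -> dict[str, float]:
    """Pool clicks and impressions across ads that share a component."""
    totals = defaultdict(lambda: [0, 0])  # component -> [clicks, impressions]
    for row in rows:
        totals[row[slot]][0] += row["clicks"]
        totals[row[slot]][1] += row["impressions"]
    return {
        name: clicks / imps
        for name, (clicks, imps) in totals.items()
        if imps  # skip components with no delivery
    }


# Made-up results for three ads built from shared components.
rows = [
    {"hook": "founder", "body": "testimonial", "clicks": 10, "impressions": 1000},
    {"hook": "demo", "body": "testimonial", "clicks": 30, "impressions": 1000},
    {"hook": "founder", "body": "feature", "clicks": 5, "impressions": 1000},
]
print(ctr_by_component(rows, "body"))
```

In this toy data the founder hook looks weak, but the testimonial body holds up across both ads that used it, which is exactly the "keep the body and retire the wrapper" read.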

You don't need a giant lab for this. You need enough production discipline to know what changed, enough launch speed to keep tests moving, and enough honesty to kill assets that look good in review meetings but don't carry paid performance.

Structuring Teams for Scaled Creative Production

A slow team with good tools still moves slowly. At scale, org design becomes a performance lever.

The old structure puts creative in one lane and media buying in another. The buyer asks for "new ads." The creative team disappears for a week. Files come back polished but detached from the actual account signal. That model can't support the pace high-performing Meta accounts now require.

High-performing Meta ad accounts maintain 8-12 active creative variations per campaign and refresh 25-30% of the library monthly to fight fatigue, according to Admetrics' guide to creative scaling on Meta. That cadence needs an integrated team that can deliver different formats consistently every week.
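Those ranges translate directly into a monthly production quota. The sketch below is simple arithmetic on the cited figures. It assumes the "library" being refreshed is the active set for one campaign, which is a simplification, and the function name is hypothetical.

```python
def monthly_refresh_count(library_size: int, refresh_pct: int) -> int:
    """New creatives needed per month, rounded up to a whole ad."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return (library_size * refresh_pct + 99) // 100


low = monthly_refresh_count(8, 25)    # smallest library, lowest refresh rate
high = monthly_refresh_count(12, 30)  # largest library, highest refresh rate
print(low, high)  # 2 4
```

That is two to four replacement creatives per campaign per month before any net-new testing, which is why the team structure matters as much as the tooling.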

The pod model works because accountability is local

The setup I trust most is a small creative pod around one growth goal:

- Media buyer owns performance signal and testing priority

- Creative strategist translates signal into angles, briefs, and iteration plans

- Editor or designer turns modular assets into launch-ready variants

- Optional producer or coordinator keeps approvals, creator inputs, and asset flow on track

This structure removes the handoff gap. The buyer doesn't send abstract requests. The buyer brings evidence. The strategist doesn't brief from taste. The strategist briefs from patterns. The editor doesn't wait for a giant campaign package. The editor works in rolling iteration cycles.

A scaled creative team isn't built around job titles. It's built around how fast insight becomes a live test.

What each role needs to do differently

The changes are practical, not philosophical.

The media buyer should stop sending generic requests like "need fresh concepts." A useful brief says which audience is fatiguing, which signal dropped, which component likely caused it, and what kind of variation should test next.

The creative strategist should stop writing only net-new concepts. The stronger move is to maintain a living map of hooks, messages, treatments, and offers that have already been validated or disqualified.

The editor or designer should stop thinking in finals. Their output should include reusable segments, alternate overlays, and clean exports that can feed the next cycle.

For teams evaluating how to support these roles with AI and workflow infrastructure, Donely's hosted AI platform comparison is a useful reference point when you're deciding which kinds of hosted tools belong inside an operating stack versus outside it.

Approvals have to match the pace of testing

The hidden team bottleneck is usually review. If every variation needs the same full-chain approval as a brand campaign, velocity dies.

Set rules by asset type:

| Asset type | Review intensity |
|---|---|
| Net-new claim or offer | Full review |
| New hook using approved language | Light review |
| Format adaptation of approved ad | Minimal review |
| CTA swap from approved library | Minimal review |
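Rules like these work best when they are written down as a lookup rather than remembered. The sketch below encodes the table as a mapping. The asset-type keys are hypothetical labels, and defaulting unknown types to full review is my assumption about the safe fallback.

```python
from enum import Enum


class Review(Enum):
    FULL = "full"
    LIGHT = "light"
    MINIMAL = "minimal"


# Mirrors the review table; keys are illustrative labels.
REVIEW_RULES = {
    "net_new_claim_or_offer": Review.FULL,
    "new_hook_approved_language": Review.LIGHT,
    "format_adaptation": Review.MINIMAL,
    "cta_swap_from_library": Review.MINIMAL,
}


def review_for(asset_type: str) -> Review:
    # Assumption: anything not explicitly listed gets a full review,
    # so an unclassified variant cannot ship on a light path by accident.
    return REVIEW_RULES.get(asset_type, Review.FULL)


print(review_for("format_adaptation").value)  # minimal
```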

This is also why a documented workflow for scaling a performance creative team matters. The structure has to define who decides, who reviews, and which variations can ship without restarting the whole process.

The teams that scale best aren't more "creative" in the abstract. They're tighter. They speak the same measurement language, work from the same asset system, and treat creative production like a compounding operating discipline instead of a series of special projects.

If your team is trying to keep up with Meta's Andromeda-driven environment, Sovran is built for that workflow. It helps performance marketers turn existing footage into modular ad variations, manage assets with AI-assisted tagging and search, adapt formats, and push launch-ready creative into Ads Manager without the usual manual setup drag.

Manson Chen

Founder, Sovran
