How to Mass Produce Facebook Ad Creatives: A 2026 Playbook


Your Meta account probably looks familiar right now. Spend is concentrating into a few ads, the winners are tiring out, and your team is still debating the next angle in a Slack thread while delivery slips. Meanwhile, folders are full of half-labeled clips, old UGC, exports named “final_v7,” and a pile of ads that never got a clean test.

That isn’t a creative inspiration problem. It’s an operations problem.

Many teams trying to mass produce Facebook ad creatives still work like a boutique studio. They brainstorm one concept at a time, edit one timeline at a time, and launch one batch at a time. That model breaks fast when Meta rewards testing velocity, format adaptation, and faster refresh cycles. The teams that scale don’t rely on more ideas alone. They build a system that turns raw footage into organized components, components into variations, and variations into structured tests.

Why Your Creative Process Is Broken (And How to Fix It)

The usual workflow fails in the same place every time. A media buyer asks for fresh ads. A strategist writes briefs from scratch. An editor assembles custom cuts manually. Then the account spends heavily on a few winners until fatigue shows up, and the cycle repeats.

That approach can’t keep up with the way Meta allocates delivery. Active Facebook ad accounts typically test 8–20 creatives per month, while top-performing accounts that refresh weekly see a 5–18% performance lift compared with bi-weekly rotations. The same benchmark notes that 55–80% of ad spend often concentrates in the top three creatives, which makes replacement speed a real operating constraint, not a nice-to-have (Uproas Facebook ad benchmarks).
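To see where your own account sits against that concentration range, you can compute the share of spend carried by the top three ads. The sketch below is a minimal illustration: the function name, the ad IDs, and the spend figures are all hypothetical, and in practice the input would come from your own reporting export.

```python
def top_n_spend_share(spend_by_ad: dict[str, float], n: int = 3) -> float:
    """Return the fraction of total spend carried by the n highest-spend ads."""
    total = sum(spend_by_ad.values())
    if total <= 0:
        return 0.0
    top = sorted(spend_by_ad.values(), reverse=True)[:n]
    return sum(top) / total


# Hypothetical spend figures, for illustration only.
spend = {"ad_a": 400.0, "ad_b": 200.0, "ad_c": 150.0, "ad_d": 150.0, "ad_e": 100.0}
share = top_n_spend_share(spend)  # 0.75, i.e. 75% of spend sits in three ads
```

A high share is not a problem by itself. It becomes one when nothing is ready to replace those three ads.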

The real bottleneck is production engineering

If one or two ads carry most of the account, your process has to answer one question fast. Can you replace winners before they fade without lowering quality?

Many teams can’t, because they’re still treating every ad as a one-off deliverable. That creates three predictable problems:

- Creative fatigue outruns production: Winners die before the next batch is ready.

- Testing gets polluted: Variations aren’t isolated cleanly because each ad changes too many things at once.

- Good assets disappear: Clips, testimonials, product shots, and old winners sit in shared drives with no reusable structure.

Practical rule: Stop asking, “What ad should we make next?” Start asking, “What system turns one shoot into a month of testable variants?”

A better model is a creative factory. Not a soulless one. A disciplined one.

That means standard inputs, repeatable assembly, naming conventions, quality control, and a clear handoff from strategy to editing to launch. Teams already use this logic in email, lifecycle, and orchestration tooling. If you’re refining your broader stack, lists of best marketing automation tools are useful because they show the same underlying principle: scale comes from systems, not heroics.

What fixing it actually looks like

The shift is operational:

- Break ads into reusable parts instead of building full custom edits every time.

- Store assets in a searchable library instead of loose folders.

- Assemble variants in batches with a repeatable workflow.

- Test under controlled conditions so the account learns from signal, not noise.

- Feed learnings back into production at the component level.

If your team structure is part of the bottleneck, this guide on scaling a performance creative team is worth reading because process design usually matters more than adding another editor.

Designing Your Modular Creative Blueprint

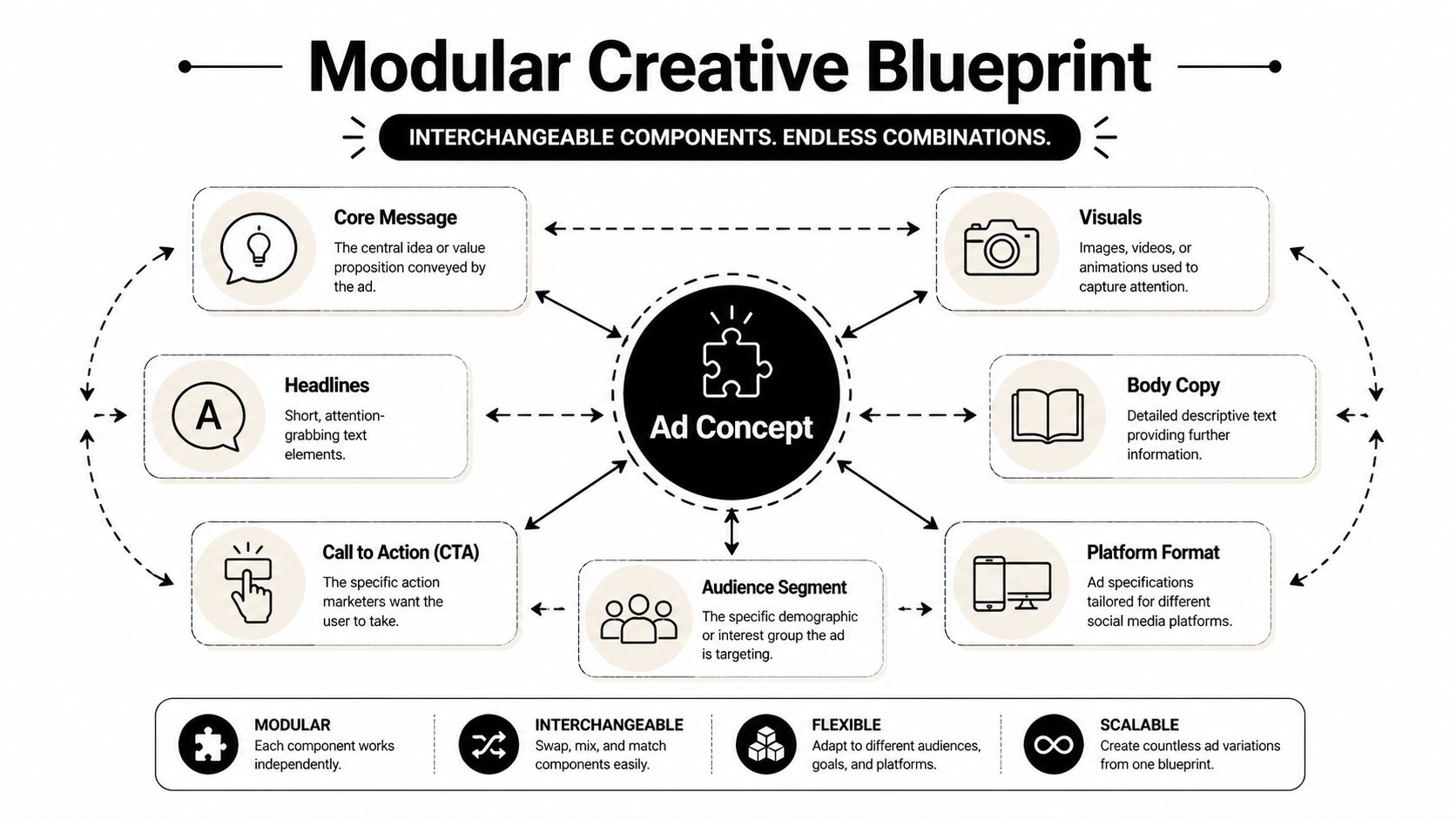

A scalable ad system starts with one decision. You stop scripting “an ad” and start planning modules.

The simplest useful structure is Hook, Body, CTA. Many teams know those terms from storytelling. Few use them as production architecture. That difference matters because the point isn’t only to write better ads. It’s to create interchangeable parts you can recombine without rebuilding the whole thing.

Think in modules, not finished videos

Take a simple DTC example: a coffee alternative.

You don’t plan one polished concept called “Morning Energy Ad.” You plan a library of parts.

- Hooks: “Coffee makes me crash,” “I switched for calmer focus,” “My morning routine changed,” “Watch this mix in five seconds.”

- Bodies: ingredient explanation, product demo, taste reaction, testimonial montage, before-and-after routine.

- CTAs: shop now, try the starter pack, switch your routine, get the bundle.

That gives you controlled variation. If a hook underperforms, you can replace the opening while preserving the body and CTA. If a body works but the CTA doesn’t, you don’t need a reshoot. You need a swap.
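The recombination idea can be sketched in a few lines. This is an illustration only: the module IDs (H1, B1, C1) and the helper names are assumptions, and the copy is shortened from the coffee alternative example.

```python
from itertools import product

# Hypothetical module libraries keyed by short IDs.
hooks = {"H1": "Coffee makes me crash", "H2": "I switched for calmer focus"}
bodies = {"B1": "product demo", "B2": "taste reaction"}
ctas = {"C1": "Shop now", "C2": "Try the starter pack"}


def build_variants(hooks, bodies, ctas):
    """Return every hook/body/CTA combination as a dict of module IDs."""
    return [
        {"hook": h, "body": b, "cta": c}
        for h, b, c in product(hooks, bodies, ctas)
    ]


def swap_hook(variant, new_hook):
    """Replace only the opening; body and CTA stay untouched."""
    return {**variant, "hook": new_hook}


variants = build_variants(hooks, bodies, ctas)  # 2 x 2 x 2 = 8 combinations
replacement = swap_hook(variants[0], "H2")
```

Two options per slot already give eight candidate ads, which is why the later sections put limits on what actually gets launched.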

Plan the shoot around recombination

A common mistake is to shoot for a single edit and then try to “make variants” afterward. The derivatives come out weak because the source footage wasn’t captured for modular use.

Instead, brief your creator, editor, or production team to collect assets by component:

| Component | Variation 1 | Variation 2 | Variation 3 |
|---|---|---|---|
| Hook | Problem-led opener | Testimonial-led opener | Visual curiosity opener |
| Body | Product demo | Mechanism explanation | Social proof montage |
| CTA | Shop now | Try the bundle | Switch today |

During production, capture more than the hero take:

- For hooks: record several opening lines with different emotional tones.

- For bodies: get both talking-head and hands-on footage.

- For CTAs: film direct asks, softer prompts, and offer-led closes.

Don’t ask an editor to invent modularity from a single polished take. Capture modularity on set.

Add metadata to the blueprint itself

The blueprint should include more than creative ideas. It should also define how the team will classify components later. For each planned module, assign tags such as persona, pain point, product feature, visual style, and intended placement.

That makes the blueprint usable inside tooling later. If you’re developing a repeatable structure, a dedicated modular video ad framework is the right reference point because it forces you to design for reuse before editing begins.
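One way to make the tagging requirement enforceable is to treat each planned module as a record and check it before the shoot. The sketch below assumes the five tag names listed above as required fields. The class name, field names, and sample values are hypothetical.

```python
from dataclasses import dataclass, field

# Tag keys taken from the list above; treating all five as required is an assumption.
REQUIRED_TAGS = ("persona", "pain_point", "product_feature", "visual_style", "placement")


@dataclass
class PlannedModule:
    module_id: str
    component: str  # "hook", "body", or "cta"
    description: str
    tags: dict[str, str] = field(default_factory=dict)

    def missing_tags(self) -> list[str]:
        """Return required tag keys that are absent or empty."""
        return [key for key in REQUIRED_TAGS if not self.tags.get(key)]


hook = PlannedModule(
    module_id="H1",
    component="hook",
    description="Problem-led opener about the afternoon crash",
    tags={"persona": "remote worker", "pain_point": "energy slump", "placement": "reels"},
)
gaps = hook.missing_tags()  # ["product_feature", "visual_style"]
```

A blueprint review can then reject any module whose `missing_tags()` list is not empty.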

A good blueprint feels slightly rigid at first. That’s fine. Rigid inputs create flexible outputs.

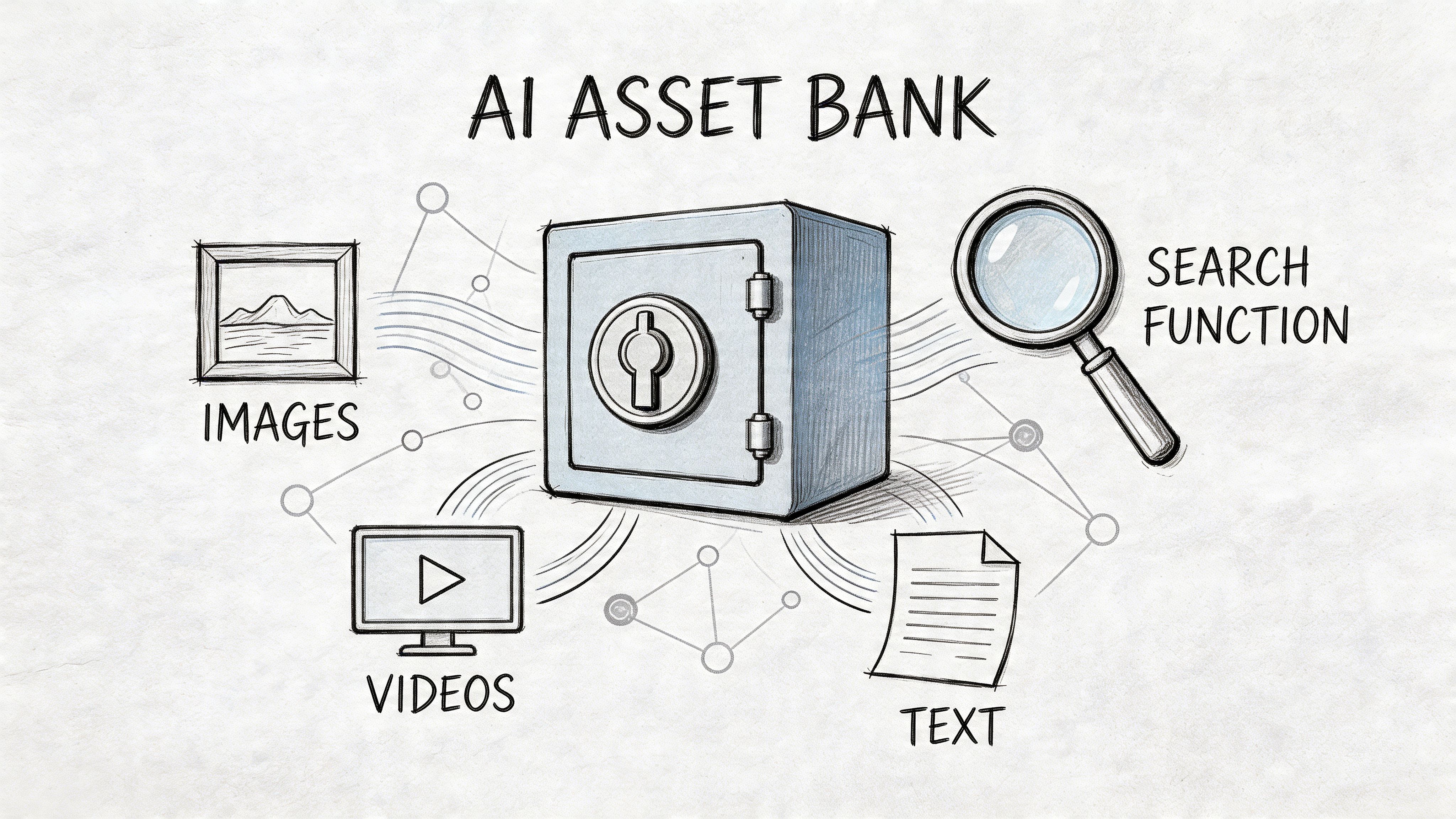

Building Your Centralized AI-Powered Asset Bank

Once you start producing modular footage, your next problem appears fast. You now have more usable material, but also more chaos.

Shared drives don’t scale well for creative testing. They become archives, not systems. The fix is a centralized Asset Bank that stores footage, transcripts, scenes, stills, old winners, UGC, and rendered exports in a structure the team can search.

Recent Meta data from Q4 2025 reported that campaigns using modular video recombination achieved a 2.7x ROAS uplift versus static creatives, yet only 18% of performance marketers reported using AI asset banks for tagging and search, which shows how much of the bottleneck is still operational rather than strategic (IndustryBenchmark.ai summary via YouTube).

What belongs in the Asset Bank

Treat the bank like production infrastructure, not cloud storage. It should hold:

- Raw footage: creator files, product demos, interviews, b-roll, screen recordings.

- Derived assets: trimmed clips, isolated hooks, still frames, captions, voiceovers.

- Context data: transcript, scene tags, product references, audience notes, usage history.

- Performance context: whether the clip appeared in a winner, loser, or untested variation.

This is also the right place to keep AI-generated support assets. For example, if your team needs clean lifestyle stills or concept visuals to support testing, an AI Photo Generator can help create supplementary image assets that fit into the same tagged system instead of living in a separate folder nobody searches.

The tagging standard matters more than the folder tree

Folders still help, but search wins. Build tags around how strategists and editors retrieve material.

Use practical labels such as:

- Asset type: hook, body, CTA, b-roll, testimonial, demo

- Message angle: fatigue, convenience, ingredients, price objection

- Visual type: talking head, unboxing, product in use, app screen, motion graphic

- Platform fit: feed, stories, reels

- Compliance markers: captions present, voiceover present, text-heavy, claims-sensitive

A searchable library beats a “perfect” folder structure every time because your team thinks in use cases, not in nested directories.

AI helps here because scene detection, transcription, and auto-tagging remove the manual logging work that usually kills adoption. A proper video asset management system should make clips queryable by what’s said, what’s shown, and where the asset belongs in the modular stack.

How teams usually break this

They centralize files but skip governance. Then everyone uploads their own way.

Set a few hard rules:

- Every upload gets tagged before it enters active production.

- Every exported winner is linked back to source modules.

- No “misc” folders.

- No final files without naming conventions.

- No unlabeled UGC accepted into the bank.

If the library can’t answer “find every hook about energy slump with captions and product close-up,” it’s not an asset bank. It’s storage.
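That query can be expressed as a simple tag filter. The sketch below is not how any particular asset management product works. It assumes each asset carries a flat set of tags, and the asset IDs and tag strings are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    asset_id: str
    asset_type: str  # e.g. "hook", "body", "cta", "b-roll"
    tags: frozenset[str]


def find_assets(bank, asset_type, required_tags):
    """Return assets of the given type that carry every required tag."""
    required = set(required_tags)
    return [a for a in bank if a.asset_type == asset_type and required <= a.tags]


# Hypothetical bank contents.
bank = [
    Asset("clip_001", "hook", frozenset({"energy-slump", "captions", "product-close-up"})),
    Asset("clip_002", "hook", frozenset({"energy-slump", "talking-head"})),
    Asset("clip_003", "body", frozenset({"energy-slump", "captions", "product-close-up"})),
]

matches = find_assets(bank, "hook", {"energy-slump", "captions", "product-close-up"})
```

Only `clip_001` comes back here, because it is the one hook that carries all three tags. The filter is only as good as the tagging discipline behind it.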

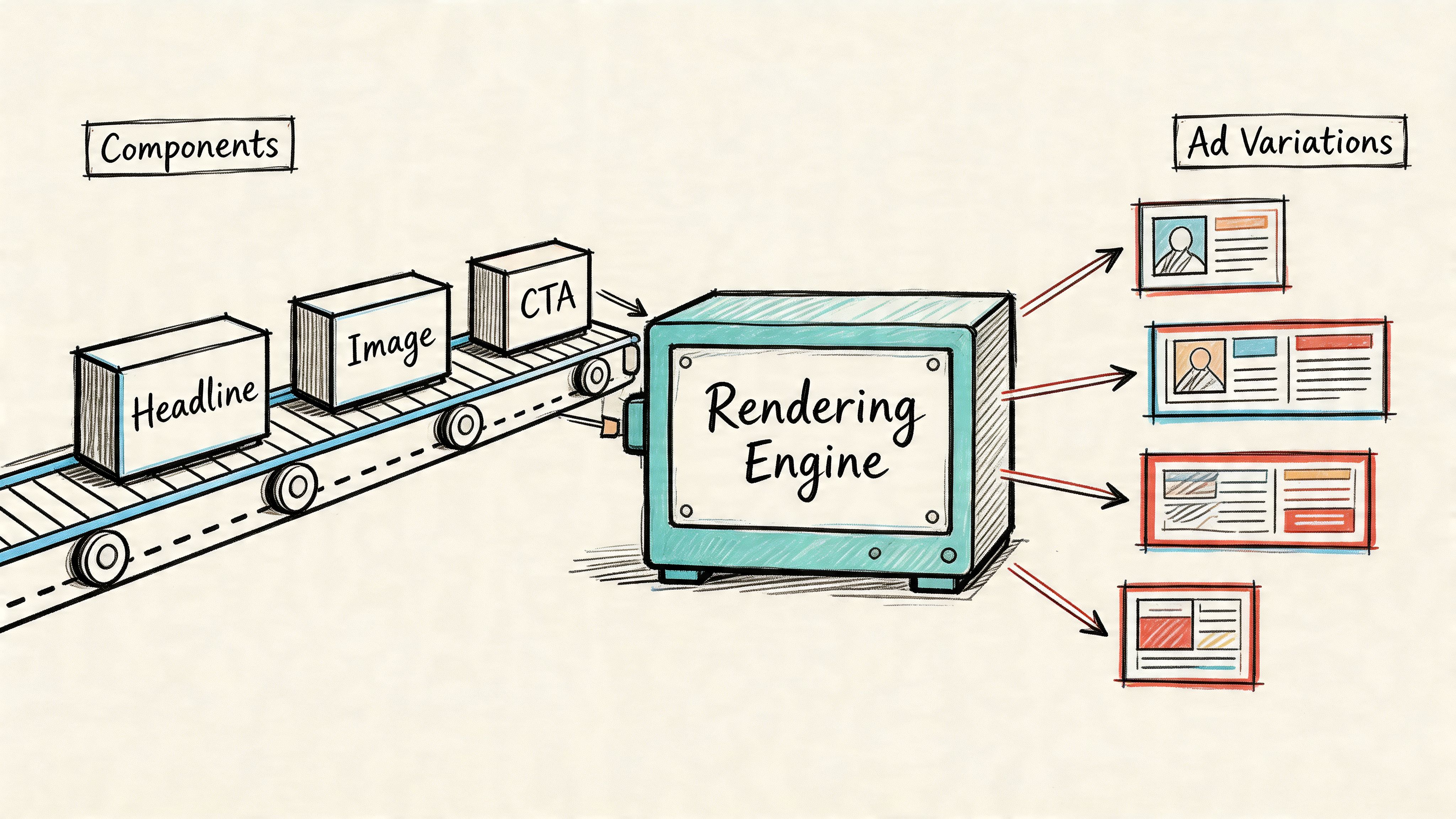

Assembling and Rendering Ad Variations at Scale

This is where the system starts paying off. Once components are planned and assets are searchable, editing stops being custom craftsmanship for every single ad. It becomes structured assembly.

The fastest teams don’t open a blank timeline for every concept. They use templates with locked positions for intros, body segments, supers, end cards, and CTA cards. Then they swap approved components into those slots and render in batches.

Build timeline templates for families of ads

You need a small number of editing templates, not endless custom projects. A practical setup might include:

- UGC direct response template

- Founder explainer template

- Product demo template

- Testimonial montage template

Within each template, define which sections are fixed and which are variable. For example, your body structure may stay constant while the hook, headline overlay, and CTA rotate.

One tool in this category is Sovran’s rendering workflow for modular video ads, which is built around recombining hooks, bodies, and CTAs into multiple exports without rebuilding every edit manually.

Use AI where repetition is expensive

Post-Andromeda, Meta’s stricter dynamic creative rules rejected 32% of high-volume submissions that lacked auto-generated captions or voiceovers. The same benchmark set also reported that AI-driven b-roll generation cut production costs 41% for DTC brands in H2 2025 (AdsPower and Sovran references via YouTube).

That has two practical implications.

First, captions and voiceovers aren’t optional cleanup tasks anymore. They belong inside the production pipeline. Second, synthetic b-roll isn’t just a convenience tool. It fills coverage gaps without forcing reshoots.

Use AI to handle repetitive layers such as:

- Captioning: timed subtitles for all rendered versions

- Voice variation: alternate reads from one approved script

- B-roll generation: supplementary scenes where original footage is thin

- Format adaptation: resizing and safe-zone adjustment for vertical and square placements

- Bulk text overlays: swapping hooks, offers, or proof points across a full batch


Protect speed with constraints

The trap at this stage is unlimited combinations. Just because you can create hundreds of permutations doesn’t mean you should launch every possible one.

Use constraints:

| Decision area | Good constraint |
|---|---|
| Hook testing | Change one opening idea family at a time |
| Body testing | Keep proof structure consistent inside a batch |
| CTA testing | Limit to a few clear asks |
| Formatting | Use approved layout systems only |

Fast production comes from fewer decisions per render, not more freedom per file.

When teams try to scale by improvising every overlay, every subtitle style, and every aspect ratio manually, output collapses. Assembly at scale works when design rules are boring enough to be repeatable.
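A constrained batch can be generated by holding a control combination fixed and varying one slot at a time. The following is a minimal sketch under that assumption. The function name and module IDs are hypothetical.

```python
def single_variable_batch(control: dict[str, str], slot: str, options: list[str]) -> list[dict[str, str]]:
    """Return the control plus one variant per option, changing only `slot`."""
    if slot not in control:
        raise KeyError(f"unknown slot: {slot}")
    batch = [dict(control)]
    for option in options:
        if option != control[slot]:  # skip the option the control already uses
            batch.append({**control, slot: option})
    return batch


control = {"hook": "H1", "body": "B1", "cta": "C1"}
batch = single_variable_batch(control, "hook", ["H1", "H2", "H3"])
```

Every ad in `batch` shares the same body and CTA, so any difference in results can be attributed to the hook with more confidence than in a full permutation launch.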

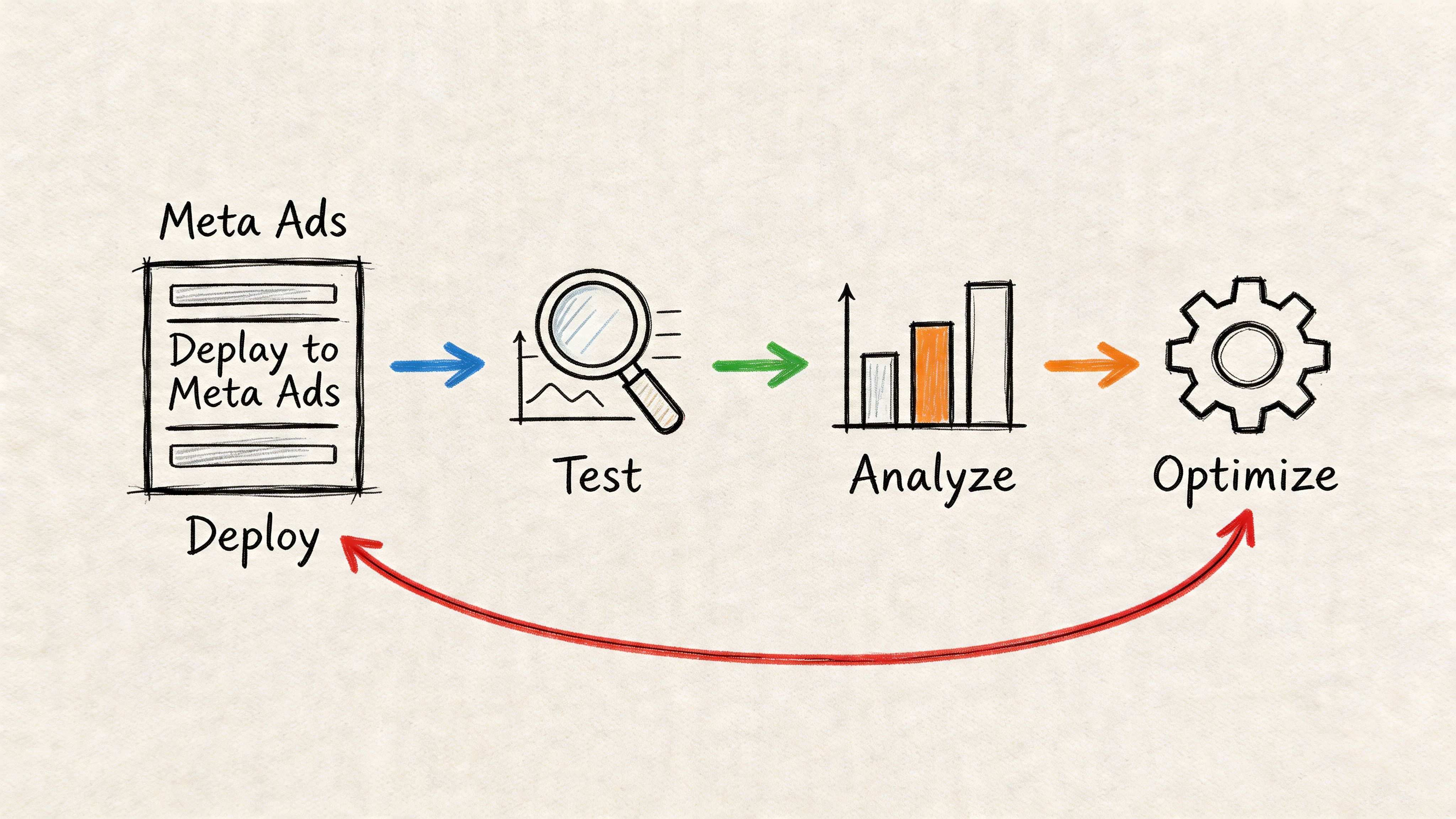

Deploying and Testing Creatives Systematically

Most creative teams waste the output they worked so hard to produce. They launch a batch, check early results, then kill ads based on weak signal or let Meta optimize in ways that make comparison messy.

A scalable testing system needs control. That’s why hold-out ABO setups are so useful for creative testing.

According to AdStellar’s breakdown of Facebook ad creative testing, teams should use hold-out ABO structures with a minimum of 50 conversions per variant to reach 95% statistical significance. The same source says systematic pipelines achieve 2–3x higher ROAS than ad-hoc testing, while 80% of advertisers end tests too early and make decisions on noise.

Why ABO is better for clean creative reads

If the goal is to compare creative, not audience or budget logic, isolate the variable. In hold-out ABO testing:

- Group A sees creative A

- Group B sees creative B

- There’s no crossover

- Budgets stay controlled

- Audience conditions match as closely as possible

That setup won’t remove every real-world variable, but it gives your team a far cleaner read than loose duplication and gut-checking after a day of spend.
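The conversion threshold and the significance check can be combined into one decision rule. The sketch below uses a two-proportion z-test, which is one common choice assumed here and not necessarily the method the cited source uses. The denominators (for example, link clicks or people reached per group) and all figures are hypothetical.

```python
import math

MIN_CONVERSIONS = 50  # per-variant threshold quoted above
ALPHA = 0.05          # corresponds to the 95% significance level


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for a difference in conversion rate between two groups."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_a / n_a - conv_b / n_b) / se
    return math.erfc(abs(z) / math.sqrt(2))


def read_creative_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> str:
    """Return 'keep running', 'significant', or 'inconclusive'."""
    if min(conv_a, conv_b) < MIN_CONVERSIONS:
        return "keep running"
    p_value = two_proportion_p_value(conv_a, n_a, conv_b, n_b)
    return "significant" if p_value < ALPHA else "inconclusive"


verdict = read_creative_test(80, 2000, 50, 2000)
```

Note that clearing the conversion threshold does not guarantee a significant result. Two variants with 60 and 55 conversions from the same group size would pass the threshold and still come back inconclusive.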

A practical deployment workflow

Use a checklist, not intuition.

1. Create a naming system first: Name ads by component set, not by vague concept titles. You need to know which hook, body, and CTA each ad contains without opening the file.

2. Launch in paused review state: Check captions, destination URLs, aspect ratios, and CTA mapping before anything spends.

3. Keep batches coherent: Don’t test a founder video against a heavily animated UGC cut, an offer card, and a static image in the same learning question. Keep the family tight.

4. Wait for enough conversion data: If a variant hasn’t reached the conversion threshold, you don’t know enough yet.

5. Record the test question explicitly: For example, “Does problem-led hook outperform testimonial-led hook against the same body and CTA?”
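A component-based naming system is easiest to keep consistent when names are built and parsed by code instead of typed by hand. The format below (template, hook, body, and CTA IDs joined by a double underscore) is an assumption for illustration, not a Meta requirement or an established convention.

```python
SEPARATOR = "__"  # assumed delimiter between component IDs
FIELDS = ("template", "hook", "body", "cta")


def build_ad_name(template: str, hook: str, body: str, cta: str) -> str:
    """Join component IDs into one ad name; IDs must be non-empty and underscore-free."""
    parts = [template, hook, body, cta]
    for part in parts:
        if not part or "_" in part:
            raise ValueError(f"invalid component id: {part!r}")
    return SEPARATOR.join(parts)


def parse_ad_name(name: str) -> dict[str, str]:
    """Recover the component IDs from an ad name built by build_ad_name."""
    parts = name.split(SEPARATOR)
    if len(parts) != len(FIELDS):
        raise ValueError(f"unexpected ad name format: {name!r}")
    return dict(zip(FIELDS, parts))


name = build_ad_name("ugc-dr", "H01", "B02", "C01")  # "ugc-dr__H01__B02__C01"
components = parse_ad_name(name)
```

Because the name parses back into its parts, reporting exports can be joined to the module library without anyone opening the file.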

A deeper walkthrough of that process sits in this guide to Facebook ad creative testing.

The point of testing isn’t to prove your favorite concept right. It’s to remove your opinion from the decision.

What doesn’t work

A few habits repeatedly ruin otherwise solid testing:

- Changing too many variables at once

- Stopping based on early CTR alone

- Letting campaign structure blur creative comparisons

- Launching so many variants that none gets a fair read

- Failing to document what each ad changed

Good testing feels slower at the decision point and faster over time. You spend less energy arguing because the system made the comparison legible.

Closing the Loop with Component-Level Analysis

Finding a winning ad is useful. Knowing why it won is what compounds.

Most teams stop analyzing too early. They mark one ad as the winner, duplicate it, and move on. That creates short-term lift but weak long-term learning. If you want to mass produce Facebook ad creatives without drowning in random output, you need analysis at the component level.

Read results by module, not just by ad

A modular workflow gives you better questions.

Instead of asking, “Which ad won?” ask:

- Which hook family created the strongest early engagement?

- Which body structure held attention and converted?

- Which CTA closed efficiently without weakening click intent?

- Which combinations failed repeatedly across different batches?

This changes reporting. Your spreadsheet, dashboard, or BI layer should map each ad back to its ingredients. Then you can compare hooks across multiple bodies, or CTAs across multiple openings, instead of treating every ad as an isolated event.
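Mapping ads back to ingredients can start as a small aggregation over your results export. The sketch below assumes each row already carries its hook, body, and CTA IDs plus spend and conversions. The column names and numbers are hypothetical, and cost per conversion is used only as an example metric.

```python
from collections import defaultdict


def cost_per_conversion_by_module(results, slot):
    """Aggregate spend and conversions per module in `slot`, then return cost per conversion."""
    totals = defaultdict(lambda: {"spend": 0.0, "conversions": 0})
    for row in results:
        bucket = totals[row[slot]]
        bucket["spend"] += row["spend"]
        bucket["conversions"] += row["conversions"]
    return {
        module: (t["spend"] / t["conversions"] if t["conversions"] else None)
        for module, t in totals.items()
    }


# Hypothetical results: two hooks, each tested across two bodies.
results = [
    {"hook": "H1", "body": "B1", "cta": "C1", "spend": 100.0, "conversions": 5},
    {"hook": "H1", "body": "B2", "cta": "C1", "spend": 100.0, "conversions": 5},
    {"hook": "H2", "body": "B1", "cta": "C1", "spend": 100.0, "conversions": 2},
    {"hook": "H2", "body": "B2", "cta": "C1", "spend": 100.0, "conversions": 2},
]

by_hook = cost_per_conversion_by_module(results, "hook")  # {"H1": 20.0, "H2": 50.0}
```

The same function answers the body and CTA questions by changing `slot`, which is the point of keeping the ingredients on every row.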

What useful analysis looks like

Say you ran several coffee alternative variations. The “calm focus” angle worked in more than one ad. But when you break the ads down, you notice something more specific:

- Problem-led hooks consistently pulled stronger initial interest.

- Taste-reaction bodies didn’t carry as well as routine-demo bodies.

- Softer CTAs underperformed direct product asks in this audience.

That’s a production instruction, not just a reporting note. The next batch should contain more problem-led hooks, more routine-demo bodies, and fewer taste-reaction edits.

Winning ads are temporary. Winning patterns are reusable.

Build feedback into the production cycle

Component-level analysis only matters if it changes the next production brief. Make that handoff explicit.

A simple feedback loop looks like this:

| Stage | What gets recorded |
|---|---|
| Blueprint | Planned hook, body, CTA themes |
| Assembly | Exact module combination in each render |
| Test results | Outcome by rendered ad |
| Analysis | Performance by module type |
| Next batch | Modules expanded, cut, or reworked |

This is also where teams separate signal from false certainty. One winning hook doesn’t prove a universal truth. But repeated strength from the same hook family across multiple controlled tests gives you something operationally useful.
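One simple way to encode “repeated strength” is to count how many controlled tests each hook family has won and only treat it as a pattern past a minimum. The threshold of three wins below is an arbitrary assumption, and the family names are hypothetical.

```python
from collections import Counter


def repeatable_patterns(test_winners: list[str], min_wins: int = 3) -> list[str]:
    """Return hook families that won at least `min_wins` controlled tests."""
    wins = Counter(test_winners)
    return sorted(family for family, count in wins.items() if count >= min_wins)


# Winning hook family from each of six hypothetical controlled tests.
winners = ["problem-led", "testimonial-led", "problem-led", "problem-led", "curiosity", "testimonial-led"]
patterns = repeatable_patterns(winners)  # ["problem-led"]
```

A family that won once stays a hypothesis. A family that keeps winning becomes a production instruction for the next brief.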

The discipline most teams skip

They don’t maintain a component ledger. They track ads, but not ingredients.

Without that ledger, you can’t answer basic strategic questions. Did the body carry the ad, or did the opening do all the work? Did the CTA improve conversion, or was the result mostly audience fit? Did one visual style fatigue faster than another? You’ll end up making the next batch based on memory and preference.

The creative factory model only becomes durable when learnings flow back into planning. That’s what keeps output from becoming volume for its own sake. More ads isn’t the goal. Better learning per batch is.

If your team needs a system for modular assembly, asset organization, and direct ad variation rendering for Meta, Sovran is built for that workflow. It helps teams structure footage into reusable components, generate variations at volume, and keep creative testing operational instead of chaotic.

Manson Chen

Founder, Sovran
