How to Scale a Performance Creative Team: A Practical Guide


Teams often hit the same wall at the same point.

Creative demand jumps. Meta and TikTok need more variants, more testing, and faster refresh cycles. The team responds the obvious way. Hire another editor. Add a designer. Pull in a freelancer. Start more Slack threads. Build more spreadsheets. Output goes up for a minute, then chaos catches up. Files go missing, approvals stall, naming breaks, learnings disappear, and the people holding the system together become the bottleneck.

That’s why learning how to scale a performance creative team has less to do with managing artists and more to do with designing a production system. If your operation depends on memory, hustle, and a few high-agency people saving the week, you don’t have scale. You have fragile throughput.

The teams that keep growing treat creative like an engineered testing factory. Not soulless. Not low-quality. Just structured enough that good ideas move quickly, weak ideas die fast, and nobody needs heroics to ship.

The Scaling Trap and the Mindset Shift

The most dangerous stage isn’t the early scramble. It’s the middle.

You have enough budget to feel pressure, enough people to create coordination problems, and enough campaign complexity that every inefficiency gets amplified. This is the Phase 2 Danger Zone, where costs scale faster than revenue. In agency contexts, operators often watch utilisation with a target of 65-75% billable hours and revenue per head of £80-120k/person/year to see whether the team structure is paying for itself, as noted in this agency scaling analysis. In-house teams usually don’t track equivalent operational health until delivery starts slipping.

The symptoms are easy to recognize:

- Key-person dependency: One strategist knows the testing logic. One editor knows the file structure. One producer can untangle the calendar.

- Throughput theater: Everyone looks busy, but shipping still depends on late nights and exceptions.

- Stagnant efficiency: More people enter the system, but output quality and learning speed don’t rise with headcount.

Practical rule: If one person taking a vacation causes launches to wobble, the problem isn’t talent. The problem is system design.

The mindset shift is simple and uncomfortable. Stop thinking of the team as a studio that occasionally produces winning ads. Start thinking of it as an engine built to generate, package, test, and learn from creative at high frequency.

That changes how you evaluate work. Great teams don’t just ask whether an ad is good. They ask whether the workflow that produced it is repeatable, diagnosable, and fast enough for paid media reality. The best creative leaders I’ve seen think a lot like operators. They define constraints, document decisions, and remove failure points before they hire around them.

If you need a better lens for that shift, these mental models for creative strategy are useful because they force creative decisions into systems thinking instead of taste debates.

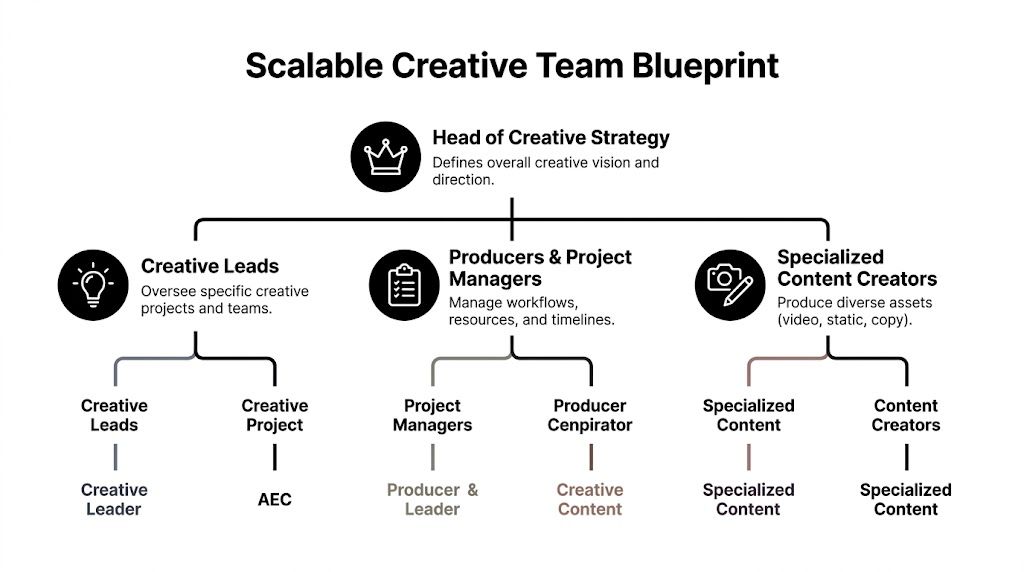

Designing Your Scalable Team Structure and Roles

A scaling team doesn’t need more titles. It needs clearer responsibilities.

Most creative orgs default into one of two structures. Neither is universally right. The right choice depends on your volume, channel mix, and approval complexity.

The two structures that usually work

Functional teams group people by craft. Designers sit together. Editors sit together. Copywriters sit together. This works well when you need strong quality control, shared craft standards, and flexible staffing across many campaigns.

Cross-functional pods group people around a business goal or channel. A pod might include a strategist, editor, designer, and producer focused on Meta prospecting, or on TikTok app installs, or on retargeting. This works when speed, ownership, and fast feedback matter more than perfect specialization.

A simple comparison helps:

| Model | Best for | Main risk |
|---|---|---|
| Functional team | Strong craft consistency, centralized review, shared resource pool | Slow handoffs and too many queues |
| Cross-functional pod | Faster iteration, tighter ownership, channel-specific learning | Duplicated effort and drifting standards |

For many teams, the durable answer is hybrid. Keep craft standards and asset governance centralized. Run testing and launch velocity through pods.

The roles that matter most

Titles vary. The responsibilities don’t.

- Head of Creative Strategy sets testing direction, defines the decision criteria, and protects the balance between brand constraints and performance reality.

- Creative Strategist turns market insight into hypotheses. This role should write test briefs, define variables, and interpret why an ad won or lost.

- Producer or Project Manager keeps the machine moving. Intake, deadlines, dependencies, approvals, resourcing. This person prevents creative energy from getting wasted in operational fog.

- Editor and Motion Designer build the variants. In high-velocity teams, they shouldn’t spend half their week deciphering requests.

- Copywriter writes hooks, angles, overlays, and CTA variants. Good teams don’t hide copy inside design requests.

- Creative Ops Manager owns systems. Naming conventions, asset libraries, version control, QA, and workflow documentation usually live here.

The fastest-growing teams don’t treat ops as admin support. They treat it as throughput infrastructure.

What not to do

The most common failure is building the team around talent instead of flow. You hire a great editor, then route everything through them. You hire a strong strategist, then make them the approval layer for every concept. You add freelancers, then make internal staff translate every brief manually.

That structure collapses under volume.

A better standard is role design around handoff quality. Every role should know:

- what enters their queue,

- what “ready” looks like,

- what they own,

- where their work goes next.

If you’re tightening production handoffs, this guide to video production project management is a good operational reference because it focuses on workflow clarity instead of generic collaboration advice.

Engineering High-Velocity Modular Workflows

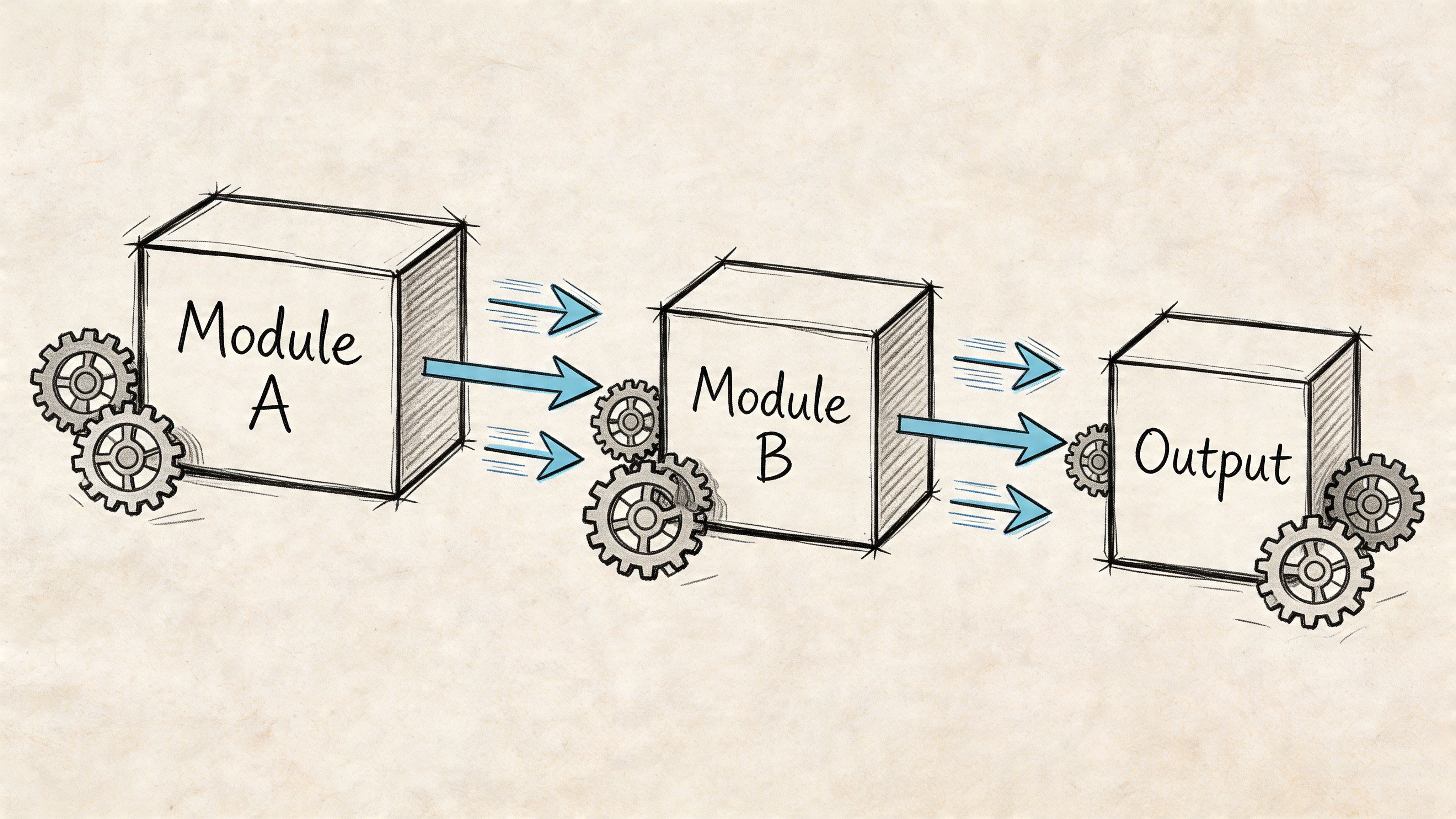

Creative scale happens when you stop producing ads as one-off artifacts.

Teams often brief and build complete ads from scratch. That approach breaks as soon as testing volume rises. The fix is a modular workflow. You break the ad into reusable parts, define how those parts combine, and build production around recombination instead of reinvention.

Teams using modular creative systems report 30-50% output increases while reducing cost per asset, and that matters because 51.8% of marketers admit they aren’t producing enough audience-specific variations, according to this breakdown of modular creative operations. That’s the operational argument for modularity. You don’t scale by asking people to move faster. You scale by reducing how much must be rebuilt each time.

Build around components, not finished ads

The simplest useful structure is hook, body, CTA.

A performance team should store and review those as separate components:

- Hooks are openings built to stop the scroll or frame the problem.

- Bodies carry proof, product context, demonstration, or objection handling.

- CTAs convert attention into a next action.

Once you work this way, a single source clip stops being “one ad.” It becomes raw material for many combinations. A creator testimonial might yield multiple hooks, several mid-sections, and different end cards depending on audience and offer.
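To make the recombination idea concrete, here is a minimal Python sketch of how a small component library expands into testable variants. The component IDs and the `build_variants` helper are hypothetical and exist only for illustration; a real pipeline would pull components from a tagged asset library.

```python
from itertools import product

# Hypothetical component IDs; in practice these come from a tagged asset library.
hooks = ["hook_problem", "hook_testimonial", "hook_stat"]
bodies = ["body_demo", "body_proof"]
ctas = ["cta_shop_now", "cta_free_trial"]


def build_variants(hooks, bodies, ctas):
    """Return every hook/body/CTA combination with a readable variant name."""
    variants = []
    for hook, body, cta in product(hooks, bodies, ctas):
        variants.append({
            "name": f"{hook}__{body}__{cta}",
            "hook": hook,
            "body": body,
            "cta": cta,
        })
    return variants


variants = build_variants(hooks, bodies, ctas)
print(len(variants))        # 3 hooks x 2 bodies x 2 CTAs = 12
print(variants[0]["name"])  # hook_problem__body_demo__cta_shop_now
```

Seven stored components produce twelve candidate ads here. In practice a team would probably filter this list down to the combinations that isolate one variable per test rather than launching every permutation.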

That’s also why teams working on UGC or creator-heavy pipelines benefit from operational playbooks outside paid media. If you’re building a repeatable sourcing and repurposing process, a guide to scaling creator programs is useful because it addresses how to turn creator content into a dependable input, not just occasional campaign fuel.

The workflow that holds up under pressure

A scalable modular system usually has these stages:

1. Structured brief creation: The brief should define the hypothesis, audience, core angle, variable being tested, asset requirements, and approval constraints. “Make three new ads” isn’t a brief.

2. Centralized intake: Raw footage, brand assets, creator clips, transcripts, and claims should enter one queue. If intake happens in Slack, email, and private folders at the same time, the team will lose speed every week.

3. Component tagging: Hooks, scenes, product demos, objections, proof points, and CTAs need tags that people can search. This turns the library into a production system instead of an archive.

4. Sprint-based production: Build in batches. Teams that batch edits by test variable, format, or audience usually move faster than teams chasing custom requests one at a time.

5. QA before launch: The preflight check should cover copy, aspect ratio, subtitles, compliance, destination, and naming. Catching those errors after launch poisons both performance and learning.
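The QA stage is the easiest one to automate. The sketch below shows one way a preflight check could look in Python. Everything specific in it is an assumption made for illustration: the naming pattern (`concept_hNN_ratio_vNN`), the list of allowed aspect ratios, and the `Asset` fields are not taken from any particular tool or platform spec.

```python
import re
from dataclasses import dataclass

# Assumed naming convention: concept_hook_ratio_version, e.g. "sleep_h03_9x16_v02".
NAME_PATTERN = re.compile(r"^[a-z0-9]+_h\d{2}_(9x16|1x1|4x5|16x9)_v\d{2}$")
# Assumed set of formats the team ships; adjust to your channels.
ALLOWED_RATIOS = {"9x16", "1x1", "4x5", "16x9"}


@dataclass
class Asset:
    name: str
    aspect_ratio: str
    has_subtitles: bool
    destination_url: str
    claims_approved: bool


def preflight(asset):
    """Return a list of problems; an empty list means the asset is ready to launch."""
    problems = []
    if not NAME_PATTERN.match(asset.name):
        problems.append("name does not follow the convention")
    if asset.aspect_ratio not in ALLOWED_RATIOS:
        problems.append(f"unsupported aspect ratio: {asset.aspect_ratio}")
    if not asset.has_subtitles:
        problems.append("subtitles missing")
    if not asset.destination_url.startswith("https://"):
        problems.append("destination URL is not https")
    if not asset.claims_approved:
        problems.append("claims not approved by compliance")
    return problems


good = Asset("sleep_h03_9x16_v02", "9x16", True, "https://example.com/offer", True)
bad = Asset("final_FINAL_v4", "9x16", False, "https://example.com/offer", True)
print(preflight(good))  # []
print(preflight(bad))   # ['name does not follow the convention', 'subtitles missing']
```

Returning a list of problems instead of a single pass/fail flag lets the producer see every issue in one pass rather than fixing them one launch attempt at a time.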

If your editor has to ask what changed between version three and version four, the process is under-documented.

A clear modular system also makes reviews less subjective. Stakeholders can comment on the variable under test instead of relitigating the whole ad.

What a good production cadence looks like

You don’t need a giant team. You need a rhythm that people can trust.

A healthy weekly cadence often includes:

- A planning block for new hypotheses and production priorities

- A build block for variant creation and revisions

- A launch block for QA and trafficking

- A review block for performance analysis and next-step decisions

Once that rhythm holds, teams can use a deeper framework like this one on the modular video ad framework to standardize what gets built and how component libraries evolve over time.


The point isn’t to mechanize creativity. It’s to remove the waste around it. When teams stop rebuilding the same foundation every week, they finally have time to improve the variables that matter.

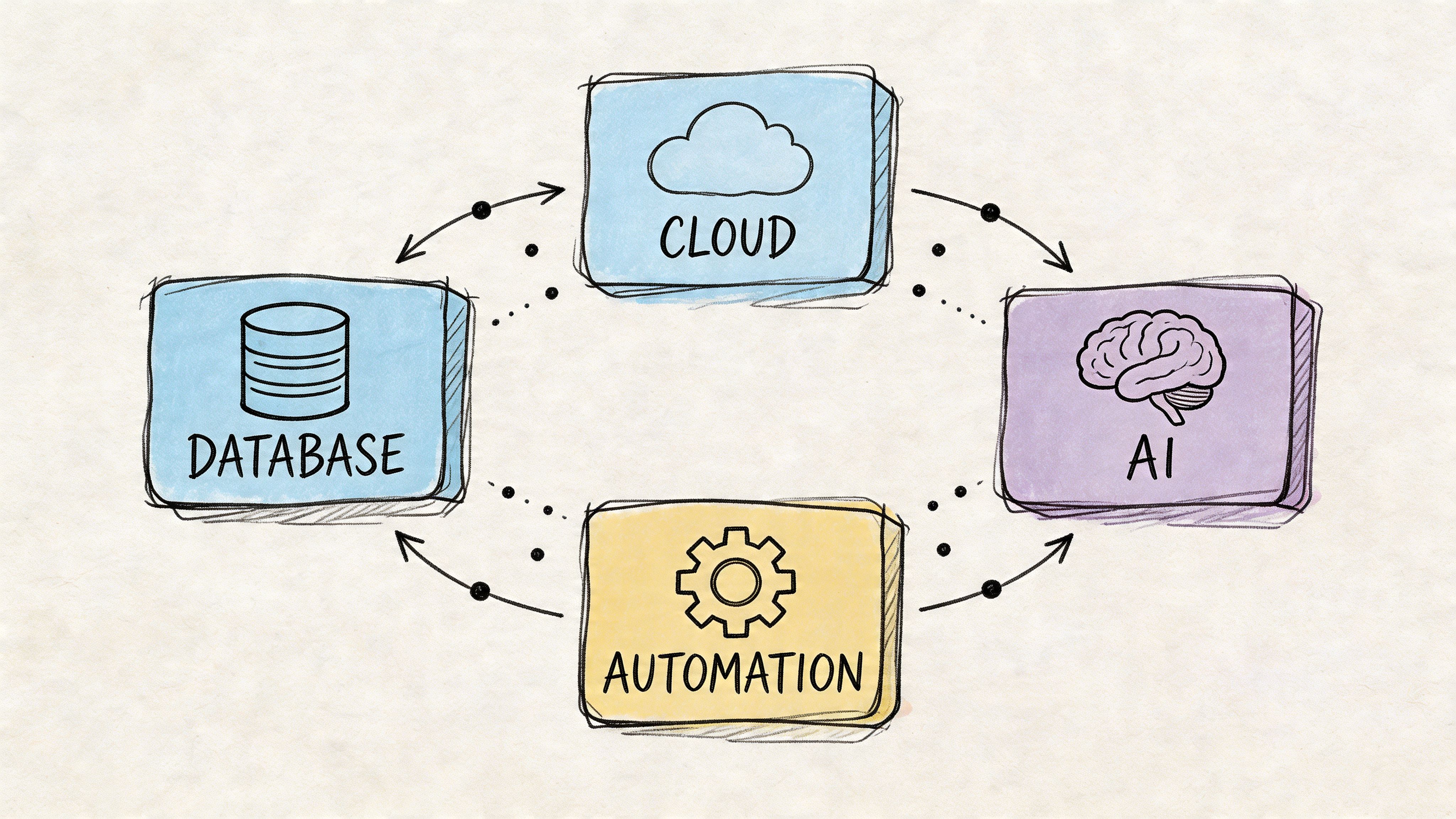

The Automation Stack That Powers Scale

You can’t out-discipline a broken tool stack.

A lot of creative leaders still treat software as overhead and labor as the flexible variable. That logic falls apart once demand rises. People end up doing expensive manual work that software should handle by default.

A 2025 Monotype survey found that 57% of creative teams spend more than a quarter of their time on non-creative tasks like asset management and workflow bottlenecks, and that automation can reclaim up to 35% of creative time, according to Monotype’s scaling creative operations report. That’s not a minor efficiency issue. It’s a structural tax on your team.

The stack categories that actually matter

Project management tools such as Asana, Monday.com, or ClickUp give you visible queues, owners, deadlines, and approval states. Their real value isn’t task assignment. It’s reducing ambiguity at handoff points.

Digital asset management is where many teams underinvest. Google Drive alone isn’t a DAM. Once you’re managing multiple versions, channels, and variants, you need searchable metadata, usage controls, and a clear source of truth.

Communication tools like Slack or Microsoft Teams should carry discussion, not become the archive. If final decisions live only in chat, they’re gone.

Creative automation and assembly tools handle repetitive production work. That includes formatting, captioning, versioning, and modular recombination. If you want a broader perspective on where this category is heading, this piece on enterprise creative automation is a useful reference for evaluating workflow-level automation rather than just asset generation.

What to look for when evaluating tools

Don’t evaluate software by feature count. Evaluate it by bottleneck removal.

Use a checklist like this:

- Searchability: Can the team find clips, transcripts, and approved assets fast?

- Workflow visibility: Can anyone see what’s blocked, waiting, or ready to launch?

- Version control: Is there one approved file path, not five competing “final” exports?

- Integration: Can your stack pass assets and decisions cleanly without manual copying?

- Repeatability: Does the tool help standardize recurring tasks?

One practical option in this category is Sovran’s AI creative automation platform, which is built for modular video workflows, asset banking, and direct production support for high-variation testing. It’s one example of the kind of system that reduces manual assembly work when teams need to ship many variants from existing footage.

Good tooling doesn’t replace creative judgment. It protects it from admin work.

A significant mistake is waiting too long. Teams often delay stack changes until people are overloaded, then roll out tools during a period of delivery stress. It’s better to build the stack while the system still works, so you can standardize before chaos hardens into habit.

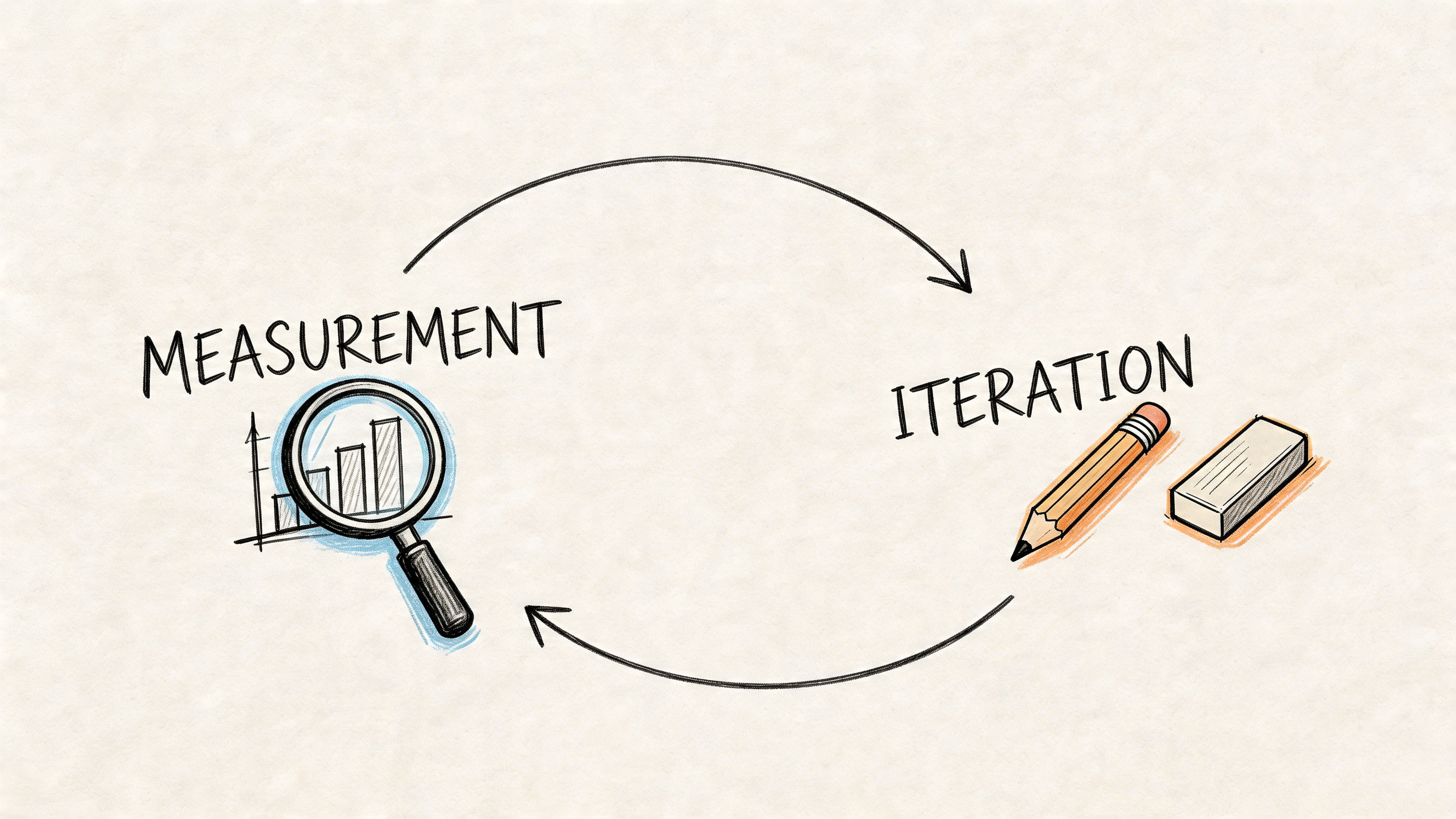

Closing the Loop with Measurement and Iteration

A scaled team without a measurement loop is just a larger content department.

Performance creative gets better when the team can connect output to outcomes, isolate what changed, and decide quickly what to do next. That loop has to be built on cadence, not instinct.

Meta research shows that advertisers running at least 15 creative experiments per year improve results by up to 30%, with gains reaching 45% after two years of consistent testing, and that new creatives often peak within two to three weeks before fatiguing, according to this analysis of testing volume and creative scale. That’s the key operating reality. Winning creative is temporary. The system has to keep learning.

What to measure at the creative level

Many teams say they’re data-driven but only review account-level outcomes. That’s too blunt for iteration.

The creative team needs a narrower lens. At minimum, review:

- CAC by concept, angle, and audience

- ROAS by creative family, not just campaign

- LTV by cohort where the business can support it

- Conversion behavior tied to the variable being tested

- Fatigue timing so refreshes happen before performance slides too far

This isn’t about turning editors into analysts. It’s about making sure the team can answer specific questions. Did the stronger result come from the hook, the proof sequence, the offer framing, or the CTA? If you can’t answer that, the test was too messy.
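As a rough illustration of that narrower lens, the Python sketch below computes CAC grouped by any creative attribute. The rows are made-up example data, and the field names (`concept`, `hook`, `spend`, `conversions`) are assumptions about how an ad platform export might be labeled.

```python
from collections import defaultdict

# Made-up example rows; real data would come from an ad platform export.
rows = [
    {"concept": "sleep_problem", "hook": "h01", "spend": 400.0, "conversions": 10},
    {"concept": "sleep_problem", "hook": "h02", "spend": 300.0, "conversions": 5},
    {"concept": "social_proof", "hook": "h01", "spend": 500.0, "conversions": 20},
]


def cac_by(rows, key):
    """Return CAC (total spend / total conversions) for each value of `key`."""
    spend = defaultdict(float)
    conversions = defaultdict(int)
    for row in rows:
        spend[row[key]] += row["spend"]
        conversions[row[key]] += row["conversions"]
    # A group with zero conversions has no defined CAC, so report None.
    return {
        group: (spend[group] / conversions[group] if conversions[group] else None)
        for group in spend
    }


print(cac_by(rows, "hook"))     # {'h01': 30.0, 'h02': 60.0}
print(cac_by(rows, "concept"))
```

The same function answers the "by concept" and "by hook" questions because the grouping key is a parameter. That only works if every ad carries those attributes in its name or metadata, which is one more reason naming discipline matters.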

A practical decision framework

Every test should end in one of three decisions.

| Outcome | What it means | Next move |
|---|---|---|
| Winner | The variable beat the control clearly enough to justify rollout | Scale and build follow-up variants |
| Promising | Some signal is positive, but the result is incomplete or mixed | Iterate one variable and retest |
| Loser | The concept failed the business threshold | Kill it and document why |

High-velocity teams also act fast once a winner emerges. The same Meta-linked analysis notes that teams often scale winners with budget increases of 25-50% daily until CAC thresholds are hit, while cutting losers when CAC exceeds target by 20% or more after day 3. Those rules aren’t universal, but they reflect a useful principle. Creative review should end in an operating decision, not a vague conclusion.
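Rules like these are simple enough to write down as code, which removes ambiguity from the daily review. The following Python sketch encodes one possible reading of them. The function names are hypothetical, "after day 3" is interpreted as at least three days of data, and the 25% step is just the low end of the quoted range; treat all three as assumptions to tune.

```python
def daily_action(cac, target_cac, days_live, cut_margin=0.20, min_days=3):
    """Classify a live ad as 'scale', 'cut', or 'hold' for today's review."""
    # Assumption: "after day 3" is read as at least three days of data.
    if days_live >= min_days and cac >= target_cac * (1 + cut_margin):
        return "cut"
    # Keep scaling only while CAC stays at or under target.
    if cac <= target_cac:
        return "scale"
    return "hold"


def next_budget(budget, action, scale_step=0.25):
    """Apply the action to today's budget (scale_step=0.25 means +25% per day)."""
    if action == "scale":
        return round(budget * (1 + scale_step), 2)
    if action == "cut":
        return 0.0
    return budget


action = daily_action(cac=40.0, target_cac=50.0, days_live=2)
print(action, next_budget(100.0, action))  # scale 125.0
```

An ad that is over target but still inside its first days returns `hold`, which gives it time to gather data before the cut rule applies.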

Review meetings should produce actions, not observations.

The cadence that keeps learning alive

The fastest teams I’ve worked with keep review light and frequent. A long monthly recap is too slow for paid social. The same source notes that many teams use daily reviews lasting 15-25 minutes to monitor CAC, ROAS, and cohort behavior while testing at speed.

That only works if the meeting is disciplined. Keep it tight:

- What launched

- What’s winning

- What’s fading

- What gets scaled

- What gets revised

- What gets killed

- What gets logged

The hidden advantage is organizational memory. You stop relying on whoever “remembers what happened last quarter.”

If your team needs a more rigorous operating model for this loop, this resource on video ad iteration strategy is useful because it frames iteration as a system of decisions rather than a pile of ad hoc revisions.

Sustaining Scale Through Governance and Continuous Improvement

The final failure point is drift.

A team builds speed, output rises, then standards loosen. Naming slips. Approvals become informal. Learnings sit in decks. New hires recreate old tests because nobody can find prior decisions. Scale starts to erode from the edges.

That’s why governance matters. Not heavy process. Just enough structure to preserve speed. As this guide on creative scaling cadence points out, inconsistent launch velocity hurts performance, while a structured testing calendar creates more stable patterns and preserves learning that would otherwise be lost.

The governance layer that keeps the machine healthy

A durable system usually has four controls:

- A live brand and compliance repository so reviewers aren’t repeating the same feedback.

- Preflight QA checklists before launch, especially for naming, links, subtitles, claims, and formats.

- Clear approval ownership so no asset waits in a vague “pending” state.

- A searchable learning library that stores wins, losses, hypotheses, and why the team made each decision.

Speed without governance creates noise. Governance without speed creates drag. You need the narrow middle.

The most resilient teams also document losing tests. That’s where a lot of the compounding value sits. A failed angle can still teach you what objection didn’t matter, what opening didn’t land, or what audience framing fell flat.
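A learning library doesn't need special software to start. The sketch below shows a minimal searchable log in Python. The `LearningRecord` fields and the three sample entries are invented for illustration; a real team would likely keep this in a database or a DAM rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass
class LearningRecord:
    test_id: str
    hypothesis: str
    variable: str   # component under test, e.g. "hook", "body", "cta"
    outcome: str    # "winner", "promising", or "loser"
    reason: str     # why the team made the decision
    tags: set = field(default_factory=set)


def search(log, variable=None, outcome=None, tag=None):
    """Return records matching every filter that was provided."""
    results = []
    for record in log:
        if variable is not None and record.variable != variable:
            continue
        if outcome is not None and record.outcome != outcome:
            continue
        if tag is not None and tag not in record.tags:
            continue
        results.append(record)
    return results


# Invented sample entries, including losing tests.
log = [
    LearningRecord("t001", "Price objection blocks trial signups", "hook",
                   "loser", "Price-led opening underperformed control", {"price", "meta"}),
    LearningRecord("t002", "Creator testimonial builds trust faster", "hook",
                   "winner", "Beat control on CAC", {"ugc", "tiktok"}),
    LearningRecord("t003", "Urgency CTA lifts conversion", "cta",
                   "loser", "No change versus control", {"urgency", "meta"}),
]

losing_hooks = search(log, variable="hook", outcome="loser")
print([r.test_id for r in losing_hooks])  # ['t001']
```

A new hire can check whether a hook angle has already lost before rebuilding it, which is the kind of repeated test the governance layer is meant to prevent.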

If you want scale that lasts, build a system that gets smarter every week. That’s the main job. Not squeezing more effort from the team, but creating an operation that can produce, test, learn, and repeat without burning out the people inside it.

If your team is trying to turn existing footage into a repeatable high-velocity testing pipeline, Sovran is built for that workflow. It helps performance marketers organize assets, assemble modular video variants, and push more testable creative into market without relying on manual editing for every version.

Manson Chen

Founder, Sovran
