Your Video Ad Iteration Strategy: A 2026 Playbook


Your top ad is dying again.

CPM is still workable. Spend is there. The problem is creative decay. You launch a few fast edits, swap a headline, trim a second off the intro, maybe change the music, and hope one catches. Most of them don’t. The account starts looking busy, but the testing isn’t teaching you much.

Teams often get stuck there. They aren’t short on ideas. They’re short on structure.

A strong video ad iteration strategy isn’t a list of hook ideas. It’s an operating system for producing, testing, diagnosing, and rebuilding ads quickly enough to stay ahead of fatigue. In 2026, the teams winning on Meta and TikTok aren’t just making more ads. They’re running a creative factory built on modular assets, clear test logic, and automation that removes manual editing bottlenecks.

Why Your Ad Creative Wins Feel So Random

Most accounts don’t have a testing problem. They have a process problem.

A winning ad lands, scales, then fades. The response is usually reactive. Teams brief a designer for a few edits, ask a creator for another take, duplicate the ad into fresh ad sets, and call that iteration. Sometimes it works. Often it doesn’t.

That randomness is expensive because creative discovery is already hard. In video ad campaigns on Meta and TikTok, typically only 1 in every 10 to 20 creative tests emerges as a winner, according to AppAgent’s breakdown of mobile video ad iteration. If your workflow is unstructured, you’re wasting budget on noise instead of learning.

Random testing feels productive, but it isn’t

The usual pattern looks like this:

- A winner appears: Everyone treats the ad as a finished asset instead of a source file for future variants.

- Performance softens: The team makes surface-level edits because they’re fast.

- Tests pile up: Multiple variables change at once, so nobody knows what moved the metric.

- Learnings vanish: Results sit inside ad names, Slack threads, and someone's memory.

That’s why “creative testing” often turns into random acts of editing.

Practical rule: If a team can’t explain what single variable changed in a test, it isn’t running a test. It’s just shipping another ad.

The real issue isn’t ideation

Teams often have enough raw material. They have UGC clips, product demos, testimonials, founder footage, reviews, screenshots, comments, and old winners. What they lack is a way to turn those assets into repeatable combinations.

That’s the shift. Stop treating each ad as a one-off masterpiece. Start treating it as a system of parts.

If your current workflow still depends on manually rebuilding every variation in CapCut or Premiere, you’ll keep moving too slowly to exploit what the account is telling you. A more disciplined approach starts with a real creative testing framework for Meta ads, then extends into modular production and decision rules.

Winning creative will never be fully predictable. But it should stop feeling like luck.

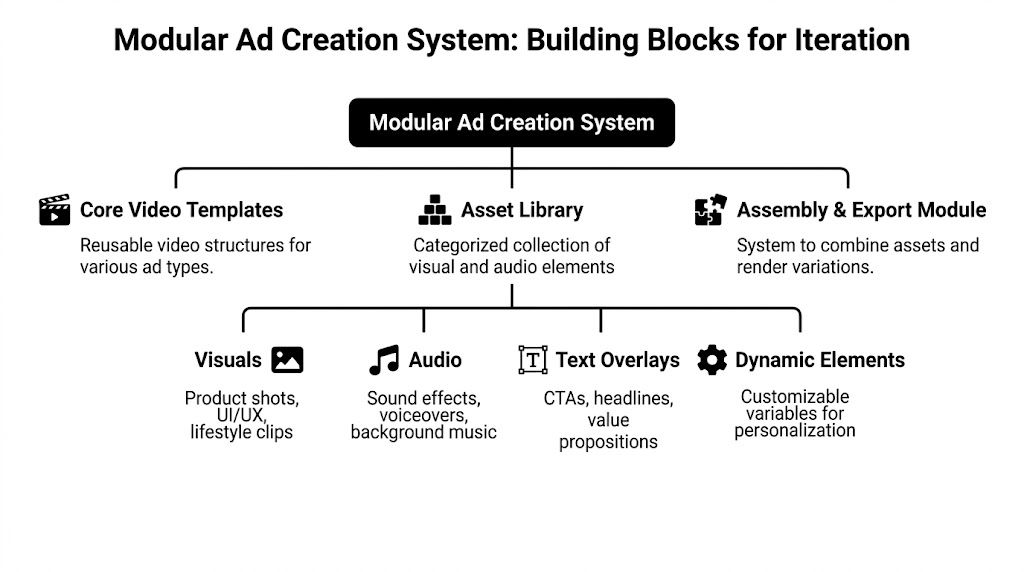

Building Your Modular Ad Creation System

The best creative teams don’t store “ads.” They store components.

That sounds small, but it changes everything. Instead of keeping a folder full of exported MP4s called final_v3_reel_new_use_this, build a library of reusable blocks that can be reassembled across audiences, offers, formats, and platforms.

Start with Hook, Body, CTA

Every performance video ad can be broken into three functional layers:

| Module | Job in the ad | Typical examples |
|---|---|---|
| Hook | Stop the scroll | Bold claim, pain point, visual disruption, creator reaction, question |
| Body | Build belief | Demo, before/after, proof, explanation, testimonial, objection handling |
| CTA | Convert intent | Offer, urgency, next step, direct ask, app install push |

This structure matters because each part solves a different problem.

A hook earns attention.

A body justifies the click.

A CTA turns interest into action.

When teams ignore those roles, they make bad iteration decisions. They try to fix a weak hook by rewriting the CTA. They patch a poor value proposition by changing captions. The result is more output, not better testing.

Tag assets by function, not by file type

A raw clip should never live in your library as “creator_17_take2.mov” and nothing else. That’s a storage system, not a production system.

Use tags that describe how the asset functions inside an ad:

- Hook tags: hook-pain, hook-curiosity, hook-social-proof, hook-demo-open

- Body tags: body-problem-agitate, body-feature-demo, body-objection, body-testimonial

- CTA tags: cta-scarcity, cta-trial, cta-download-now, cta-founder-close

Then layer in production tags:

- Format tags: 9x16, 1x1, 16x9

- Platform tags: meta-feed, reels, tiktok

- Style tags: ugc, founder, screen-recording, b-roll, voiceover

- Audience tags: new-user, retargeting, skeptical-buyer, high-intent

That tagging discipline is what makes high-velocity iteration possible later.
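Here is a minimal Python sketch of that discipline. It assumes a simple in-memory list rather than any particular asset-management tool. Each clip carries a set of tags from the taxonomy above, its functional layer is read from the hook-, body-, or cta- prefix, and a search returns the clips that carry every requested tag. The file names and the `Asset` and `find` names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """One clip in the library, described by how it functions inside an ad."""
    file: str
    tags: set[str] = field(default_factory=set)

    @property
    def module(self) -> str:
        # The functional layer comes from the tag prefix: hook-, body-, or cta-.
        for layer in ("hook", "body", "cta"):
            if any(tag.startswith(layer + "-") for tag in self.tags):
                return layer
        return "untagged"


def find(library: list[Asset], *required: str) -> list[Asset]:
    """Return every asset that carries all of the required tags."""
    wanted = set(required)
    return [asset for asset in library if wanted <= asset.tags]


# Hypothetical library entries using the functional and production tags above.
library = [
    Asset("creator_17_take2.mov", {"hook-pain", "ugc", "9x16", "tiktok", "new-user"}),
    Asset("demo_closeup_03.mov", {"body-feature-demo", "b-roll", "9x16", "reels"}),
    Asset("founder_close_01.mov", {"cta-founder-close", "founder", "1x1", "meta-feed"}),
]

if __name__ == "__main__":
    for asset in find(library, "9x16"):
        print(asset.file, asset.module)
```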

Build templates before you need them

Teams often template too late. They wait until a winner emerges, then scramble to remake it in other formats.

A better workflow is to maintain a small set of reusable structures:

- Problem-Agitate-Solution

- UGC testimonial mashup

- Listicle

- Demo-first

- Before and after

- Founder explanation

- Comment response ad

Each template should have placeholders for hook, body proof block, and CTA. That way, when a message works, you can port it across structures without starting from zero.

Good iteration protects the core message and changes the delivery. Bad iteration changes everything and calls the result a learning.

Treat formatting as part of the system

A modular setup also needs export rules. Platform fit matters, especially once you’re moving variants across placements.

If your team is still guessing dimensions, safe zones, or output settings, keep a reference for technical specifications for Instagram Reels close to production. It saves rework and prevents small formatting mistakes from poisoning test results.
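Part of that reference can live in the export step itself. The small Python check below assumes your export manifest carries the format tags from the library (9x16, 1x1, 16x9). It confirms that each render's pixel dimensions match its tagged aspect ratio. It does not check safe zones, duration, or codec settings, which still come from each platform's published specs. The manifest entries are hypothetical.

```python
def ratio_from_tag(format_tag: str) -> tuple[int, int]:
    """Parse a format tag such as '9x16' into (width units, height units)."""
    width_units, height_units = format_tag.lower().split("x")
    return int(width_units), int(height_units)


def matches_format(width: int, height: int, format_tag: str) -> bool:
    """True if the pixel dimensions have exactly the aspect ratio in the tag."""
    rw, rh = ratio_from_tag(format_tag)
    # Cross-multiply so the comparison stays in integers (no float rounding).
    return width * rh == height * rw


# Hypothetical export manifest: (file name, width, height, format tag).
exports = [
    ("hookA_bodyA_ctaA_9x16.mp4", 1080, 1920, "9x16"),
    ("hookA_bodyA_ctaA_1x1.mp4", 1080, 1080, "1x1"),
    ("hookA_bodyA_ctaA_16x9.mp4", 1080, 1920, "16x9"),  # wrong render for its tag
]

if __name__ == "__main__":
    for name, w, h, tag in exports:
        if not matches_format(w, h, tag):
            print(f"{name}: {w}x{h} does not match {tag}")
```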

What the asset library should contain

A practical modular library usually includes:

- Raw creator footage: Multiple intros, reactions, product handling, voice lines

- Proof assets: Reviews, UGC testimonials, PR mentions, ratings screenshots

- Demo clips: Product use, app flows, onboarding moments, outcomes

- Visual support: B-roll, product closeups, packaging, lifestyle context

- Text systems: Headlines, overlays, subtitles, value props, CTA endings

- Audio layers: Voiceovers, trend-safe music options, sound effects

The goal isn’t aesthetic neatness. It’s operational speed.

A modular workflow also makes collaboration easier. Creative strategy can specify which message block needs replacing. Editors don’t need to rebuild the whole ad. Buyers can request variants at the level of hook, body, or CTA instead of asking for “something fresh.”

That’s the point of a modular video ad framework. It reduces production waste and makes each new test cheaper to launch.

The mindset shift that matters

A finished ad is not the end product. It’s evidence.

If an ad wins, you don’t just scale it. You break it apart and log what likely made it work:

- Was the opening line pain-led or aspirational?

- Did the body lean on demo, proof, or authority?

- Was the CTA soft, direct, or urgent?

- Did the creator tone feel polished or native?

Once your team starts archiving those answers inside the asset system, iteration stops being a guessing game and starts acting like compounding R&D.

Designing a High-Velocity Test Matrix

Once you have modular assets, you can stop launching “fresh creatives” and start launching controlled experiments.

The test matrix is where most performance teams either get disciplined or stay sloppy. A good matrix isolates variables, keeps naming clean, and gives the buyer a real hypothesis before spend goes live.

Start from a control, not a blank page

Use one ad as the control. Ideally it’s a clear winner or the current best performer. Don’t begin by testing ten unrelated concepts in one burst unless you’re specifically doing net-new concept discovery.

For iteration, the rule is simple. Hold two components steady and change one.

That can mean:

- Same body and CTA, different hooks

- Same hook and CTA, different body angles

- Same hook and body, different CTA framing

Attribution gets muddy fast inside platform delivery. If you swap the creator, value prop, pacing, thumbnail, and CTA all at once, you won’t know what earned the result.

Use a matrix that buyers can actually manage

Below is a practical structure for hook testing at the ad set level.

Sample Hook Testing Matrix (Ad Set Level)

| Ad Set Name | Variable Tested | Hook Version | Body Version | CTA Version | Hypothesis |
|---|---|---|---|---|---|
| Control Winner | None | Hook A original | Body A winner | CTA A winner | Baseline for all comparisons |
| Hook Test 1 | Hook | UGC pain opener | Body A winner | CTA A winner | Native creator framing will improve initial attention |
| Hook Test 2 | Hook | Demo-first visual opener | Body A winner | CTA A winner | Immediate product proof will filter for higher intent users |
| Hook Test 3 | Hook | Question-led opener | Body A winner | CTA A winner | Direct problem identification will increase qualified clicks |
| Hook Test 4 | Hook | Social proof opener | Body A winner | CTA A winner | Early credibility will improve trust before the pitch |

Simple beats clever here. If the matrix can’t be understood by the buyer, editor, and creative strategist in under a minute, it’s too messy.

Bring structure to creative variation

One useful framework for generating variants is the 12-Combination System. It uses Valence, Intensity, and Self-Discrepancy Theory to create targeted variations. According to the 12-Combination System walkthrough, brands using that structure have reported 2-3x longer ad lifespans.

That framework is useful because it stops teams from making only cosmetic changes.

Instead of asking for “three new versions,” ask better questions:

- Does this audience respond better to positive framing or negative consequence framing?

- Should this message feel high energy or more grounded?

- Is the appeal tied to the user’s actual self or their ideal self?

Those are real variables. They change how the ad lands.

A practical sequence for testing

I like to stack iterations in this order because it mirrors impact:

Hooks first

Hooks decide whether the rest of the ad gets a chance.

Test contrasts, not tiny edits:

- UGC direct-to-camera against polished branded footage

- Pain statement against aspiration statement

- Visual demo first against verbal opener

- Question opener against declarative statement

Then body messaging

Once a hook works, don’t overreact and start replacing everything. Keep the hook and isolate the middle.

Useful body tests include:

- Problem-solution framing

- Testimonial-led proof

- Product walkthrough

- Objection handling

- Comparison framing

CTAs last

CTA testing matters, but it usually matters less than teams think unless the top and middle of the ad are already working.

Good CTA tests tend to be framing differences:

- Direct install ask

- Trial-oriented ask

- Scarcity close

- Outcome-driven close

The fastest path to a better account isn’t more variation. It’s better isolation.

What to avoid in a fast test cycle

A few patterns waste time over and over:

- Micro-edits too early: Subtitle styling, tiny color changes, minor motion tweaks

- Mixed hypotheses: Testing a new creator and new offer in the same version

- No naming discipline: Variants impossible to analyze later

- Platform silos: TikTok insights not carried into Meta tests

If a test wins, log the winning mechanism, not just the file name. “Hook B won” isn’t enough. Write down why you believe it won. That note becomes the seed for the next matrix.

For teams that want a clean starting point, a dedicated creative testing matrix tool helps standardize naming and variant planning before launch. The key is the discipline behind it, not the sheet itself.
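Naming discipline is easier when names are generated rather than typed. The convention below is a hypothetical example, not a Meta or TikTok requirement. Fields are joined with a double underscore so that hyphenated tags such as hook-pain survive intact. The inverse function lets reporting split an ad name back into its test variables.

```python
from datetime import date

SEP = "__"  # double underscore, so hyphenated tags like hook-pain stay intact


def variant_name(launch: date, offer: str, tested: str,
                 hook: str, body: str, cta: str) -> str:
    """Build an ad name recording launch date, offer, tested layer, and modules."""
    parts = [launch.strftime("%Y%m%d"), offer, "test-" + tested, hook, body, cta]
    for part in parts:
        if SEP in part:
            raise ValueError(f"{part!r} contains the separator {SEP!r}")
    return SEP.join(parts)


def parse_variant_name(name: str) -> dict[str, str]:
    """Split a name built by variant_name back into its fields."""
    launch, offer, tested, hook, body, cta = name.split(SEP)
    return {"launch": launch, "offer": offer, "tested": tested.removeprefix("test-"),
            "hook": hook, "body": body, "cta": cta}


if __name__ == "__main__":
    name = variant_name(date(2026, 3, 2), "trial30", "hook",
                        "hook-pain", "body-feature-demo", "cta-trial")
    print(name)
    print(parse_variant_name(name))
```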

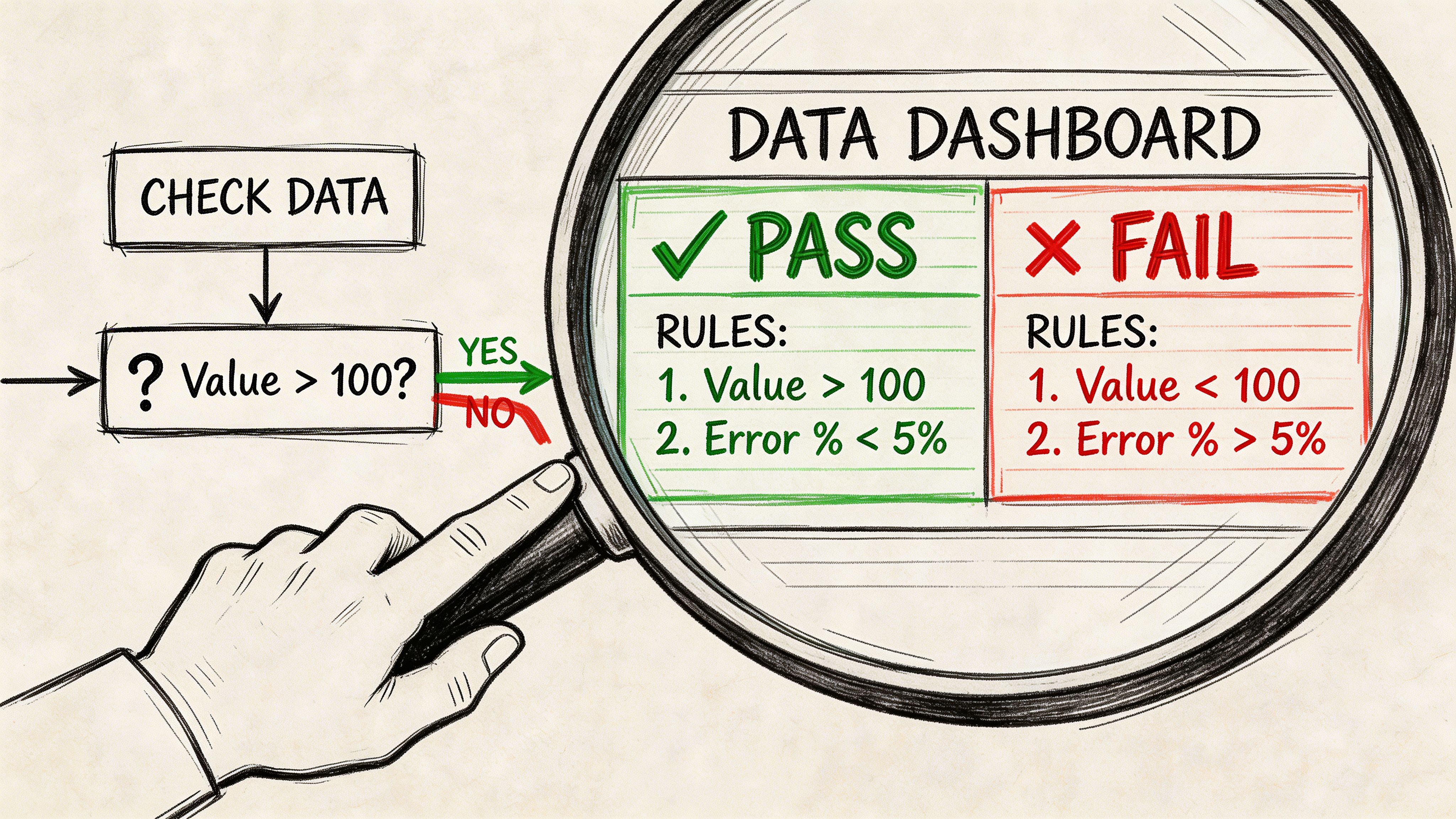

Reading the Data and Setting Decision Rules

Most ad teams look at results after the fact. Better teams define the read before launch.

That means knowing which metric belongs to which creative layer, and what action you’ll take when that metric breaks.

Use the Thumbstop to CTR to V100 funnel

A practical diagnostic model is the Thumbstop-to-CTR-to-V100 Funnel Iteration approach. In that model, low thumbstop suggests iterating the hook or thumbnail, while high thumbstop but low CTR points to reworking the body with stronger problem-solution framing or social proof, as outlined in Motion’s guide to creative iterations for winning ads.

That sequence matters because it tells you where the ad is failing.

Here’s the plain-language read:

| Metric pattern | Likely issue | What to iterate |
|---|---|---|
| Low thumbstop | The ad isn’t earning attention | Hook, first frame, thumbnail, opening visual |
| High thumbstop, low CTR | The ad catches attention but loses intent | Body structure, value prop, proof, offer framing |
| High CTR, weak post-click conversion | The click is strong but the journey breaks | Landing page cohesion, offer continuity, message match |
| High video completion, weak click volume | The ad is watchable but not persuasive enough | CTA framing, urgency, exclusivity, next-step clarity |

Set rules before spend goes live

Decision rules prevent emotional analysis.

Without them, teams keep borderline tests alive because they like the creative, or kill useful tests too early because a buyer wants to move on. The ad account ends up governed by opinion.

Use simple if-then logic:

- If thumbstop is weak: Replace the hook before touching the offer

- If thumbstop is strong but CTR is soft: Rewrite the body, not the opening

- If clicks are strong but purchases lag: Audit landing page message match

- If a variant beats the control across the key stage it was built to improve: Promote it to the next round as the new control

This sounds obvious, but teams often skip it. They look at blended outcome metrics and start changing the wrong layer.
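The if-then rules above can be written down before launch. This minimal Python sketch makes two assumptions. Thumbstop is 3-second video views divided by impressions, which is a common definition, so check how your reporting tool computes it. Completion is V100 views divided by 3-second views. The thresholds are placeholders, not benchmarks, and the function ignores sample size, so treat its output as a prompt for review rather than a verdict.

```python
from dataclasses import dataclass


@dataclass
class AdStats:
    """Raw counts for one ad over the read window."""
    impressions: int
    views_3s: int      # 3-second video views
    views_100: int     # views that reached the end of the video (V100)
    clicks: int
    conversions: int

    @property
    def thumbstop(self) -> float:
        # Assumed definition: 3-second views per impression.
        return self.views_3s / self.impressions if self.impressions else 0.0

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0

    @property
    def completion(self) -> float:
        # Share of 3-second viewers who watched to the end.
        return self.views_100 / self.views_3s if self.views_3s else 0.0

    @property
    def cvr(self) -> float:
        return self.conversions / self.clicks if self.clicks else 0.0


# Placeholder thresholds: replace with benchmarks from your own account history.
THRESHOLDS = {"thumbstop": 0.25, "ctr": 0.01, "completion": 0.15, "cvr": 0.02}


def diagnose(s: AdStats, t: dict[str, float] = THRESHOLDS) -> str:
    """Name the layer to iterate next, checking the funnel from the top down."""
    if s.thumbstop < t["thumbstop"]:
        return "hook: first frame, thumbnail, opening visual"
    if s.ctr < t["ctr"]:
        if s.completion >= t["completion"]:
            # Watchable but not persuasive enough to earn the click.
            return "cta: framing, urgency, next-step clarity"
        # Attention was earned, then intent was lost mid-ad.
        return "body: value prop, proof, offer framing"
    if s.cvr < t["cvr"]:
        return "landing page: message match, offer continuity"
    return "no layer flagged: compare against the control"


if __name__ == "__main__":
    print(diagnose(AdStats(impressions=10_000, views_3s=3_500, views_100=1_000,
                           clicks=50, conversions=2)))
```

The order of the checks matters. A weak hook caps everything downstream, so the sketch stops at the first failing stage instead of reporting every low number.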

Don’t confuse attention with persuasion

A flashy opener can help thumbstop and still be a bad ad. That’s common on TikTok. The creative looks native, earns views, but qualifies the wrong person or fails to transition into proof.

That’s why metric combinations matter more than isolated reads.

A hook that wins attention but sends weak traffic is not a winner. It’s an expensive teaser.

The reverse can also happen. Some ads don’t look exciting on the surface but send qualified clicks and convert well. Those often deserve another round of hook testing rather than a full rebuild.

Build a review rhythm that teams can sustain

A useful operating rhythm looks like this:

Daily buyer check

Focus on directional signals:

- Spend delivery

- Thumbstop

- CTR

- Early conversion quality

- Obvious breakpoints

Mid-cycle creative review

Pull the actual assets back up and compare the winning and losing variants side by side. Buyers often miss creative patterns if they stay inside dashboards only.

End-of-test readout

Document:

- What changed

- Which metric moved

- What likely caused the movement

- What should be tested next

The point of analytics isn’t to prove what happened. It’s to decide what to make next. A structured Facebook ad creative testing workflow helps when multiple people touch production, buying, and reporting.

The more explicit your rules are, the less your team argues over taste and the faster you can move to the next informed test.

How to Automate Your Iteration Workflow with AI

Manual iteration breaks first at volume.

A buyer can manage a few variants by hand. An editor can rebuild a handful of cuts in CapCut or Premiere. But once the team is running multiple offers, audiences, creators, and placements, manual production becomes the bottleneck. That’s where the workflow needs automation, not more hustle.

An important shift in the market is AI-driven automation in video ad iteration for Meta and TikTok. According to Sovran’s write-up on video ad iterations, AI video tools boosted ad testing velocity by 8x for performance marketers in 2025-2026, though integration friction still slows adoption. The real opportunity is not just generating clips but connecting generation, assembly, naming, and launch into one operating flow.

What automation should actually do

A lot of AI tooling is still novelty software. It makes one flashy asset and leaves your team to clean up the workflow after.

Useful automation should handle operational work like this:

- Asset tagging: Detect hook, testimonial, demo, objection handling, CTA close

- Search: Find clips with natural-language prompts instead of folder digging

- Script variation: Generate controlled message variants using stored brand context

- Bulk assembly: Combine Hook-Body-CTA blocks into many export-ready cuts

- Naming conventions: Keep test variants organized before they hit the ad account

- Platform delivery: Push completed variants into Meta or TikTok workflows with less manual transfer

If a tool can generate an ad but can’t help you organize, compare, and relaunch variants, it’s not solving the full iteration problem.

A practical AI-augmented workflow

Here’s what an efficient pipeline looks like in practice.

Ingest and tag the raw library

Upload creator footage, demos, testimonials, and b-roll once. The system should tag clips by function so your team can search for “pain-led UGC hook” or “demo clip with product closeup” instead of scrubbing timelines manually.

Store message context

This step matters more than teams often realize. If you’re generating scripts or text overlays with AI, you need a persistent source of truth. That usually includes:

- Brand claims the team is allowed to make

- Customer review language

- Approved value props

- Top-performing historical hooks

- Offer constraints

- Channel-specific tone notes

Without that, output drifts fast. You get generic scripts, off-brand framing, or ad variants that sound persuasive but don’t match the product.
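Once you have a persistent source of truth, you can also check generated copy against it. The sketch below is a deliberately crude, hypothetical guard in Python. It flags banned claims and scripts that use none of the approved value props, using plain substring matching. It will miss paraphrases, so it supplements human review rather than replacing it. `BrandContext`, `review_script`, and the example phrases are illustrative names only.

```python
from dataclasses import dataclass, field


@dataclass
class BrandContext:
    """Hypothetical stored context that generated copy is checked against."""
    approved_value_props: list[str]
    banned_claims: list[str] = field(default_factory=list)


def review_script(script: str, ctx: BrandContext) -> list[str]:
    """Return problems in a generated script; an empty list means it passes."""
    text = script.lower()
    # Plain substring matching: catches exact phrases only, not paraphrases.
    problems = [f"banned claim: {claim!r}"
                for claim in ctx.banned_claims if claim.lower() in text]
    if not any(prop.lower() in text for prop in ctx.approved_value_props):
        problems.append("no approved value prop used")
    return problems


ctx = BrandContext(
    approved_value_props=["set up in five minutes", "cancel anytime"],
    banned_claims=["guaranteed results", "#1 rated"],
)

if __name__ == "__main__":
    print(review_script("Set up in five minutes, cancel anytime.", ctx))
    print(review_script("Guaranteed results in a week.", ctx))
```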

Assemble variants from modules

Once assets and message blocks are tagged, you can build test sets from structured combinations instead of editing from scratch.

For example:

| Variant group | Hook source | Body source | CTA source |
|---|---|---|---|
| Hook test set | 4 creator intros | Same product demo | Same install CTA |
| Proof test set | Same winning hook | 3 proof blocks | Same CTA |
| CTA test set | Same winning hook | Same body | 3 closing asks |

This is where modularity pays off. The team isn’t “making new ads.” It’s recombining proven and experimental blocks with intent.

A platform like Sovran’s AI creative automation workflow fits here because it tags uploaded clips into reusable modules, stores brand and review context, supports natural-language asset search, and bulk-renders variants for Meta and TikTok testing. That’s one option in a broader stack that might also include CapCut, Premiere, Sora, Veo, and your reporting layer.
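Whatever tool does the rendering, the assembly logic behind the variant groups in the table above reduces to one rule: copy the control and swap a single layer. This Python sketch assumes each module is referenced by an ID from the tagged library and that rendering happens elsewhere. The module IDs and function names are hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Variant:
    """One ad as a combination of module IDs."""
    hook: str
    body: str
    cta: str


LAYERS = ("hook", "body", "cta")


def build_test_set(control: Variant, layer: str, candidates: list[str]) -> list[Variant]:
    """Keep the control's modules in two layers and swap each candidate into the third."""
    if layer not in LAYERS:
        raise ValueError(f"unknown layer {layer!r}; expected one of {LAYERS}")
    return [replace(control, **{layer: candidate}) for candidate in candidates]


# Hypothetical module IDs mirroring the three variant groups in the table.
control = Variant(hook="hook-winner", body="body-product-demo", cta="cta-install")
hook_set = build_test_set(control, "hook", ["intro-1", "intro-2", "intro-3", "intro-4"])
proof_set = build_test_set(control, "body", ["proof-reviews", "proof-ugc", "proof-press"])
cta_set = build_test_set(control, "cta", ["close-trial", "close-scarcity", "close-outcome"])

if __name__ == "__main__":
    for variant in hook_set + proof_set + cta_set:
        print(variant)
```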

Automation changes who does what

With the right setup, the buyer spends less time chasing edits. The strategist spends less time writing repetitive briefs. The editor spends less time resizing the same ad six ways.

That doesn’t remove creative judgment. It removes repetitive labor.

The biggest gain from AI isn’t “better ideas.” It’s faster execution on ideas you can already justify.

That’s also why adjacent workflow thinking matters. If your team is evaluating broader categories of software for process reduction, this roundup of AI tools for streamlining workflows is useful as a framing exercise. The category matters because creative ops is now a systems problem, not just an editing problem.


Where AI helps most, and where it doesn’t

AI is strongest when the task is repetitive, modular, and constrained:

- Resizing and reformatting

- Script variation within approved messaging

- Asset retrieval

- Overlay generation

- Bulk export

- Variant naming and packaging

It’s weaker when the team hasn’t defined the strategy:

- No clear audience angle

- No control ad

- No testing hierarchy

- No naming discipline

- No measurement rules

Automation won’t rescue a bad process. It multiplies the quality of the process you already have.

That’s why the teams benefiting from AI aren’t the ones prompting for random “viral ad ideas.” They’re the ones feeding a strong system with structured assets, test logic, and clean feedback loops.

Establishing Your Long-Term Testing Cadence

A single winner doesn’t create an edge. A repeatable cadence does.

That’s the difference between accounts that spike and accounts that compound. The strong teams don’t celebrate one good ad for too long. They immediately turn the win into a learning object, a variant tree, and a set of next tests.

That matters because iterative video ad strategies can deliver 2–5× higher engagement rates than static formats. According to AI Digital’s analysis of interactive video ads, U.S. social commerce sales were also projected to exceed $85 billion in 2025. In practice, that means teams that maintain a real iteration cadence stay in the game longer and extract more value from each concept.

Use a cadence that matches team size

An indie app team and a multi-account agency shouldn’t run the same production rhythm.

Lean in-house team

A smaller team usually does better with a tight loop:

- Weekly: Review active winners, choose one control per offer, launch a focused batch of iterations

- Midweek: Cut losers quickly and note why

- End of week: Save the top learnings into a central log

That workflow works because it limits chaos. Fewer tests, better reasoning.

Agency or larger growth team

A larger team can split responsibilities:

| Cadence layer | Owner | Focus |
|---|---|---|
| Daily | Media buyer | Delivery, performance anomalies, early signals |
| Twice weekly | Creative strategist | Variant review, new hypotheses, fatigue reads |
| Weekly | Editor or creative ops | Build and package next test batches |
| Monthly | Team lead | Pattern review across offers, creators, personas, and formats |

The key is continuity. Every review should produce a next action.

Keep a Creative Learnings Log

Most organizations lose their best insights because they only save exported assets, not reasoning.

Your log doesn’t need to be fancy. A simple doc, spreadsheet, or Notion database works if it captures the right fields:

- Ad ID or variant name

- Audience or funnel stage

- Hook type

- Body angle

- CTA style

- Platform and placement

- Observed strength

- Observed weakness

- Next iteration idea

This mechanism builds institutional memory.

When a new buyer joins, they shouldn’t have to rediscover that a pain-led creator hook works for cold traffic while polished demos work better lower in the funnel. The log should tell them.

Teams that document creative mechanisms get smarter. Teams that only archive files get busier.
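A spreadsheet is enough for the log. The sketch below maps the fields listed above to hypothetical column names. It writes entries to CSV, which any spreadsheet opens, and reads them back so a new buyer can filter by funnel stage. An in-memory buffer stands in for a real file, and the example entries are illustrative, not real results.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class Learning:
    """One row of the learnings log; fields follow the list above."""
    variant: str
    funnel_stage: str
    hook_type: str
    body_angle: str
    cta_style: str
    placement: str
    strength: str
    weakness: str
    next_idea: str


FIELDNAMES = [f.name for f in fields(Learning)]


def write_log(stream, rows: list[Learning]) -> None:
    """Write rows as CSV with a header; open real files with newline=''."""
    writer = csv.DictWriter(stream, fieldnames=FIELDNAMES)
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))


def read_log(stream) -> list[Learning]:
    """Load rows written by write_log back into Learning records."""
    return [Learning(**row) for row in csv.DictReader(stream)]


def lookup(rows: list[Learning], **criteria: str) -> list[Learning]:
    """Filter rows on exact field values, e.g. lookup(rows, funnel_stage="cold")."""
    return [r for r in rows if all(getattr(r, k) == v for k, v in criteria.items())]


# Hypothetical entries echoing the example in the text.
entries = [
    Learning("hook-test-1", "cold", "pain-led creator", "feature demo", "trial",
             "reels", "strong thumbstop", "soft CTR", "test a proof-led body"),
    Learning("retarget-demo-2", "retargeting", "polished demo", "walkthrough",
             "direct install", "meta-feed", "high conversion rate", "low thumbstop",
             "test a native creator hook"),
]

log = io.StringIO()
write_log(log, entries)
log.seek(0)
restored = read_log(log)

if __name__ == "__main__":
    for row in lookup(restored, funnel_stage="cold"):
        print(row.variant, "->", row.next_idea)
```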

Watch for fatigue, but don’t panic-edit

A lot of teams respond to fatigue by replacing a whole ad too early.

A better move is to ask which layer has likely worn out:

- Is the hook stale because the audience has seen the opener too many times?

- Has the body stopped persuading because the proof feels exhausted?

- Is the CTA underperforming because the offer needs reframing?

That’s where modular production keeps the cadence sustainable. You don’t need a net-new concept every time. You need a disciplined sequence of refreshes.
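One rough way to ask which layer wore out is to compare each layer's most direct metric against the ad's own baseline. The sketch below is a hypothetical heuristic, not a platform feature. Loosely following the diagnostic table earlier, it maps the hook to thumbstop, the body to completion, and the CTA to click-through rate. It reports the highest layer whose metric fell by more than an arbitrary 20%, and it needs enough volume in both windows to mean anything.

```python
from typing import Optional

# Hypothetical mapping from layer to the metric that most directly reflects it,
# ordered from the top of the funnel so upstream fatigue is reported first.
LAYER_METRICS = [("hook", "thumbstop"), ("body", "completion"), ("cta", "ctr")]


def relative_drop(before: float, after: float) -> float:
    """Fractional decline from before to after (0.25 means down 25%)."""
    return (before - after) / before if before else 0.0


def worn_layer(baseline: dict[str, float], recent: dict[str, float],
               max_drop: float = 0.2) -> Optional[str]:
    """Return the highest layer whose metric fell more than max_drop, else None."""
    for layer, metric in LAYER_METRICS:
        if relative_drop(baseline[metric], recent[metric]) > max_drop:
            return layer
    return None


if __name__ == "__main__":
    baseline = {"thumbstop": 0.32, "completion": 0.18, "ctr": 0.014}
    recent = {"thumbstop": 0.31, "completion": 0.12, "ctr": 0.011}
    print(worn_layer(baseline, recent))
```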

Build the loop into the culture

The long-term win is not “more creatives.” It’s a team habit:

- Launch from a clear control.

- Isolate one variable.

- Read the right metric combination.

- Log the mechanism behind the outcome.

- Feed that insight into the next batch.

Do that consistently and your video ad iteration strategy stops being a rescue tactic. It becomes one of the few real moats available in paid social.

If your team wants to turn scattered edits into a repeatable production system, Sovran is built for that workflow. It helps teams tag raw clips into reusable modules, store brand and customer context, bulk-render structured variants, and move faster from creative idea to launch without rebuilding every ad by hand.

Manson Chen

Founder, Sovran
