Win Big: Creative Testing Framework Meta Ads

Jump to a section

- Beyond A/B Testing The New Rules of Creative on Meta

- Laying the Foundation for Scalable Testing

- Building and Launching Your Test Matrix

- Measuring What Matters and Making Confident Decisions

- Scaling Winners and Preventing Creative Fatigue

- Supercharge Your Framework with Automation and AI

- Frequently Asked Questions

You probably know the feeling. The account is active, spend is moving, and there’s no shortage of ads in market, but when someone asks what you learned this week, the answer gets fuzzy fast.

One hook got decent CTR. A carousel spent oddly well for two days. A founder video looked promising, then died when budget increased. The campaign names are messy, assets live across folders, Slack threads, and editors, and every new test feels like starting from scratch.

That’s why a solid creative testing framework Meta ads setup matters now more than ever. Winning on Meta isn’t about running random A/B tests until something sticks. It’s about building a repeatable engine that turns creative into signals, signals into insight, and insight into the next round of better ads.

Beyond A/B Testing The New Rules of Creative on Meta

A common failure pattern on Meta looks like this. The team is shipping plenty of ads, spend is active, and weekly reporting still ends with opinions instead of conclusions. The problem usually is not effort. It is a testing system that mixes variables, records weak notes, and makes it hard to separate signal from noise.

One ad launches with a new hook, a new offer frame, a new edit, and a different CTA. Meta sends early spend to the asset that gets the fastest click response. The team pauses the rest, calls a winner, and briefs the next batch on incomplete evidence. After a few cycles, performance stalls and nobody can explain what worked.

That process was always messy. With higher CPMs and faster fatigue, it now burns budget and slows learning.

Creative is now a targeting input

On Meta, creative does more than persuade. It helps the system decide who is likely to respond. The platform's analysis of your creative is significant because it goes beyond your audience settings. It evaluates the opening frames, pacing, copy, on-screen text, product cues, and angle, then uses those signals in delivery.

That changes how testing should work. If a video opens with a pain point and another opens with social proof, those are not minor stylistic differences. They can pull in different pockets of demand even when the campaign setup looks identical.

Teams that understand this stop treating creative as decoration around media buying. They build assets to produce clear signals the algorithm can work with, then read those signals across hooks, formats, and message angles.

Why ad-hoc testing stops teaching you anything

Ad-hoc testing creates contaminated reads.

If multiple variables change at once, results are hard to trust. If delivery skews too early, comparisons get distorted. If naming conventions are loose, the team cannot aggregate lessons across tests. You end up with a winning ad and no usable pattern behind it.

Practical rule: If your test cannot isolate the change, it cannot tell you what to produce next.

This is also why creative variety needs structure. The goal is not to launch more assets for the sake of volume. The goal is to give Meta distinct, intentional inputs it can match to different audience pockets. For a stronger framework on asset range, review this guide to creative diversity for Facebook ads.

The old playbook underweights the asset itself

A lot of teams still optimize Meta accounts as if targeting and bidding do the heavy lifting and creative packages the message. That model is outdated.

The accounts that keep finding winners usually run a repeatable creative engine. They test modular components, document each variable, and feed the result into the next round. Instead of asking which single ad won, they ask which hook, proof point, format, and edit pattern earned attention from a specific buyer stage. That is how you build a system that keeps generating insight after the first few wins.

Production matters here too. If your team cannot create enough variation without dragging timelines, the framework breaks before analysis even starts. That is why it helps to study how to make Facebook video that grows your business. Better inputs make better tests, and better tests make scaling much less random.

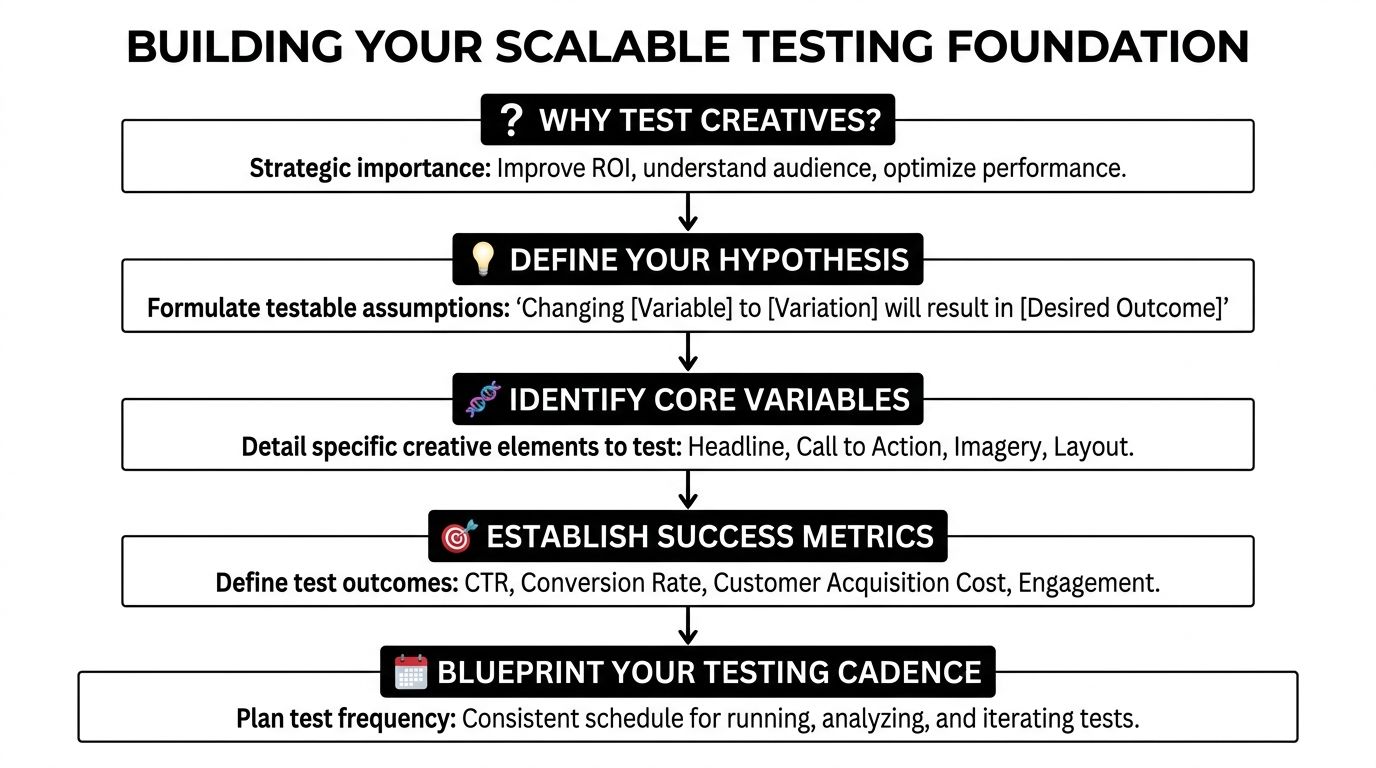

Laying the Foundation for Scalable Testing

A testing program usually breaks long before the ads go live. The common failure point is not creative quality. It is messy setup. Files are hard to find, variables are undocumented, naming is inconsistent, and after two or three rounds the team cannot tell whether a result came from the hook, the offer, the edit, or the creator.

That is why scalable testing starts with operating rules.

Write hypotheses that are narrow enough to fail

Loose ideas create noisy tests. A hypothesis should identify one variable, one audience context, and one expected outcome. If it cannot be proven wrong, it will not help the next production cycle.

Weak hypothesis:

- Unclear variable: UGC may outperform polished creative.

Usable hypothesis:

- Specific variable: A problem-first UGC hook will outperform a benefit-led studio hook for first-purchase acquisition.

- Controlled scope: Body copy, CTA, offer, and landing page stay the same.

- Clear action: If the hook wins, produce more problem-first intros for the same stage of traffic.

The goal is not to find a single winning ad. The goal is to learn which component deserves more production time. Teams that treat hypotheses this way build a repeatable testing engine. Teams that skip this step usually end up arguing over anecdotes in Slack.

Build a modular asset library

A common mistake is storing only finished ads. A better practice is to store reusable components.

Break each asset into parts your team can test again:

- Hooks: first visual beat, opening line, first text card

- Bodies: demo, testimonial, founder explanation, objection handling

- CTAs: direct offer close, urgency close, softer conversion prompt

That structure changes production economics. Editors can swap one opening without rebuilding the whole ad. Strategists can request three new proof segments without asking for a full reshoot. Media buyers can review patterns across hooks, angles, creators, and formats instead of judging assets as one-off winners.

A practical taxonomy usually includes:

- Angle tag such as problem, benefit, comparison, social proof

- Format tag such as static, Reel, UGC, carousel, short demo

- Awareness tag such as cold, consideration, decision

- Offer tag tied to the promise, bundle, or promo

- Source tag for the creator, customer clip, brand shoot, or stock footage set

If asset sprawl is already slowing your team down, these asset management best practices for creative teams are useful for setting up folders, naming logic, and version control.

Use names that make reporting usable

Naming conventions are not admin overhead. They are part of the testing system.

A good name should tell the buyer what changed, what stayed fixed, and where the ad belongs in the funnel without opening the asset. That becomes more important as the account grows, because historical learnings are only useful if someone can find them six weeks later.

A workable structure looks like this:

- Campaign: TEST | Purchase | Broad | Hook-Test | Problem-Angle

- Ad set: UGC | Female-Creator | Reels+Feed | ABO

- Ad: HK_ProblemLaundryPain | BD_DemoV2 | CTA_FreeShip

This is what makes the account queryable. If a team wants to check whether founder-led bodies underperform customer demo bodies for cold traffic, the naming system should make that answer easy to pull.

The account should function like a searchable testing archive, not a pile of old ad names.

Set decision rules before spend starts

Good operators decide the rules before results create bias.

Answer these questions before launch:

- What single variable are we isolating first?

- What metric will determine the call?

- What minimum spend or conversion threshold is required before judging?

- What happens if a variant wins?

- What counts as a new angle versus a light edit?

This step sounds simple, but it is where a lot of teams lose discipline. A weak ad gets labeled as a bad concept when the problem was the opening frame. A marginal winner gets scaled too early because the team wants an answer fast. A cosmetic edit gets logged as a fresh test and pollutes the learning backlog.

The teams that keep producing winners are rarely the ones with the fanciest creative. They are the ones with a cleaner system for storing assets, isolating variables, and documenting what each test was supposed to prove.

Building and Launching Your Test Matrix

Monday starts with three new ads in review. By Friday, nobody can explain why one spent, one stalled, and one looked promising but never got enough delivery to mean anything. The problem usually isn’t a lack of ideas. It’s a weak test matrix.

A good matrix gives each creative variant a fair read and produces a learning you can reuse. We recommend isolating the hook first because it is the fastest variable to test, the cheapest to produce at volume, and the easiest place to confuse a bad opening with a bad concept if you bundle too many changes together.

Build the first matrix around one body and one CTA

Use a body that has already cleared a basic quality bar. It should communicate the offer clearly, fit the format, and feel stable enough that it won’t distort the result. Then pair multiple hooks against that same body and CTA.

That keeps the question clean.

For ecommerce, the first round often looks like:

- Hook A: direct problem statement

- Hook B: product-in-use opening

- Hook C: customer proof or testimonial opener

For mobile apps, a practical first round might be:

- Hook A: frustration with the current workflow

- Hook B: visible outcome inside the product

- Hook C: creator-style surprise or skepticism opener

The point is not creative variety for its own sake. The point is controlled variation.

Sample Hook Test Matrix

| Ad Name | Hook | Body | CTA | Hypothesis |

|---|---|---|---|---|

| HK_Problem_01 | Problem-focused UGC intro | Product demo body | Direct purchase CTA | Problem-first opening will drive stronger qualified clicks |

| HK_Benefit_01 | Benefit-led studio intro | Product demo body | Direct purchase CTA | Clear benefit framing will improve conversion efficiency |

| HK_Testimonial_01 | Social proof opener | Product demo body | Direct purchase CTA | Testimonial-led trust cue will reduce hesitation |

If you want a faster way to structure this, use a creative testing matrix template.

Start with ABO if the goal is diagnosis

ABO is usually the cleaner setup for early testing because it gives each ad set its own budget. That matters when the objective is to compare creative ideas, not to help Meta find a spender as quickly as possible.

In practice, ABO reduces one of the most common testing errors: declaring a winner after the system gave one ad a head start and starved the rest. If a variant wins in ABO, there is a better chance it earned the result.

Test campaigns exist to answer a question. Scaling campaigns exist to spend behind a proven answer.

That separation keeps the account honest.

Use CBO later, once the matrix is doing its job

CBO has a place. Use it after the account has already identified a strong angle, message family, or creator format and the next question is about relative strength inside a tighter cluster.

A simple example: if problem-aware hooks have already beaten benefit-led hooks, a CBO test can help sort through five executions of that same problem angle faster than a rigid diagnostic setup. You give up some clarity, but you gain speed. That trade-off is usually worth it only after the first layer of learning is already in place.

Keep audience and placement choices from contaminating the read

Creative tests break when targeting and placements introduce extra variables.

Broad audiences usually produce the cleanest read for prospecting creative because they give the system enough room to match the asset to likely responders. Tight interest stacks can still work, but they make interpretation harder. A weak result may come from the creative, the audience definition, or poor delivery room. That is not a useful test outcome.

Placements need the same discipline. If the test is about a vertical video hook, compare assets that belong in the same format family. Don’t mix a feed-style static with a Reels-native video and call it a hook test. That result says more about format fit than message strength.

Bring in dynamic creative only after you have a usable component library

Dynamic creative helps once the team has enough proven ingredients to combine intelligently. It is a poor substitute for clear creative thinking.

The failure mode is predictable. Teams upload a random batch of hooks, bodies, headlines, and CTAs, then call the output a framework. That produces noisy combinations and weak learnings. Dynamic creative works better when the asset library is modular, tagged, and built from components that already showed promise in controlled tests.

Use manual matrices to find signal first. Use dynamic assembly to expand on signal after that.

Launch checklist

Before anything goes live, confirm:

- One variable per matrix: hook, body, CTA, angle, or format

- Fair budget distribution: use a structure that gives each variant enough delivery to compete

- Consistent creative architecture: same landing page, same offer, same conversion event

- Format alignment: placements match the asset type being tested

- Clear promotion path: winners move into scaling campaigns without being renamed or rebuilt

- Logging discipline: every asset should map back to a hypothesis the team can review later

The goal is not to get a winner out of every batch. The goal is to build a testing engine that compounds insight. Clean matrices do that. Messy ones just burn budget and create arguments.

Measuring What Matters and Making Confident Decisions

Launch is the easy part. Interpretation is where teams can start lying to themselves.

An ad can have a strong thumb-stop and weak commercial intent. Another can look average at first glance but drive better downstream performance. If you judge too early or on the wrong metric, you don’t just pick the wrong winner. You train the whole creative team to chase the wrong patterns.

Match the KPI to the question

The metric should fit the job.

If you’re testing hooks, early attention metrics matter first. If you’re testing bodies, you care more about whether people stay engaged long enough to absorb the core message. If you’re testing offers or CTAs, lower-funnel efficiency matters more.

A practical hierarchy looks like this:

- Top-of-ad attention: Did the opening earn the stop?

- Mid-ad engagement: Did the body hold interest?

- Business outcome: Did the click or conversion quality justify scale?

This sounds obvious, but a lot of teams test hooks and then declare winners based only on purchases, even when the sample is still thin. Others do the reverse and scale a flashy hook that drives cheap clicks but low purchase intent.

Don’t call winners too early

Discipline matters at this stage.

According to the Pilothouse framework, Meta creative tests need 30 to 50 conversions per variant to distinguish real winners from noise, and underspending creates false negatives because the algorithm never gets enough room to find the right audience pockets for that concept within its Meta testing approach.

That should change how you judge performance.

If a creative spent a little, got an encouraging early result, and then stalled, you still may not know much. Likewise, a concept that starts slowly may need enough delivery for Meta to map it correctly.

Decision filter: Don’t ask whether the ad looked good early. Ask whether it had enough data to earn a real decision.

Use practical kill and scale rules

You don’t need academic purity. You need consistency.

A practical decision system often looks like this:

- Kill for weakness: If a creative is clearly lagging on the primary metric and the gap isn’t narrowing, stop feeding it.

- Hold for more data: If signal is mixed, keep it running until it reaches your preset threshold.

- Promote for pattern strength: If it wins on the target KPI and the result aligns with other signs of quality, move it into a scaling environment.

The point is to make decisions from prewritten rules, not mood.

Watch for false confidence from dirty setups

Segwise’s methodology flags several common statistical traps: uncontrolled variables can cause 60% false positives, auction favoritism can skew 50% of ad-level tests, and low volume under 1,000 impressions per variant creates unreliable reads with heavy noise, all detailed in their best practices article.

That lines up with what most experienced buyers already know. Messy setups often produce very confident-sounding conclusions.

This reporting guide can help tighten your process: https://sovran.ai/blog/how-to-measure-your-creative-tests-in-facebook-ads-reporting

The best analysis asks why, not just who won

A useful readout doesn’t end at “Ad 3 won.”

It answers:

- What exact element changed

- What audience behavior likely matched with it

- Whether the result should be remixed, reformatted, or scaled as-is

- Whether the win came from attention, trust, clarity, or offer resonance

That’s how a test becomes part of a system rather than a one-off success.

Scaling Winners and Preventing Creative Fatigue

A winning ad is not a finish line. It’s raw material for the next round.

The right move after a test is usually simple. Graduate the proven creative into your BAU structure, keep the post ID if that fits your workflow, and watch whether performance holds in the less protected environment where stronger budget pressure and more delivery variation exist.

Promote the lesson, not just the asset

If a test winner came from a creator-style opening, don’t just scale that exact ad. Break apart why it worked.

Was it:

- the first line

- the visual authenticity

- the pace of the opening cut

- the way the product problem was framed

- the trust signal from a human face

That analysis should feed the next batch. One winner should create a family of related tests, not a single dependency.

The goal isn’t to find one hero ad. The goal is to identify repeatable creative traits you can keep compounding.

Fatigue is operational, not mysterious

Creative fatigue isn’t subtle once you know what to watch for. The same audience sees the same message too many times, response quality drops, and your once-reliable ad starts dragging account efficiency.

AdManage’s framework notes that purchase likelihood declines substantially once users see an ad 6 to 10 times versus 2 to 5 exposures, and high-velocity systems that test 30+ creatives weekly are designed to keep fresh assets flowing and reduce that decay, as outlined in their creative testing framework.

That’s why strong teams build a pipeline, not a hero-shot culture.

Keep a simple weekly creative rhythm

A practical cadence often includes:

- Early week: Review winners and losers from the prior batch

- Midweek: Brief and build next modular variants

- Late week: Launch the next controlled round

- Ongoing: Move validated winners into BAU and retire stale assets

As noted in the earlier framework discussion, runtime discipline matters too. If you cut every test too quickly, you create noise. If you leave tired ads in market too long, you pay fatigue tax.

The healthiest Meta accounts usually feel boring behind the scenes. Fresh inputs arrive on schedule, learnings are logged, and no one panics because one ad cooled off. The system is doing its job.

Supercharge Your Framework with Automation and AI

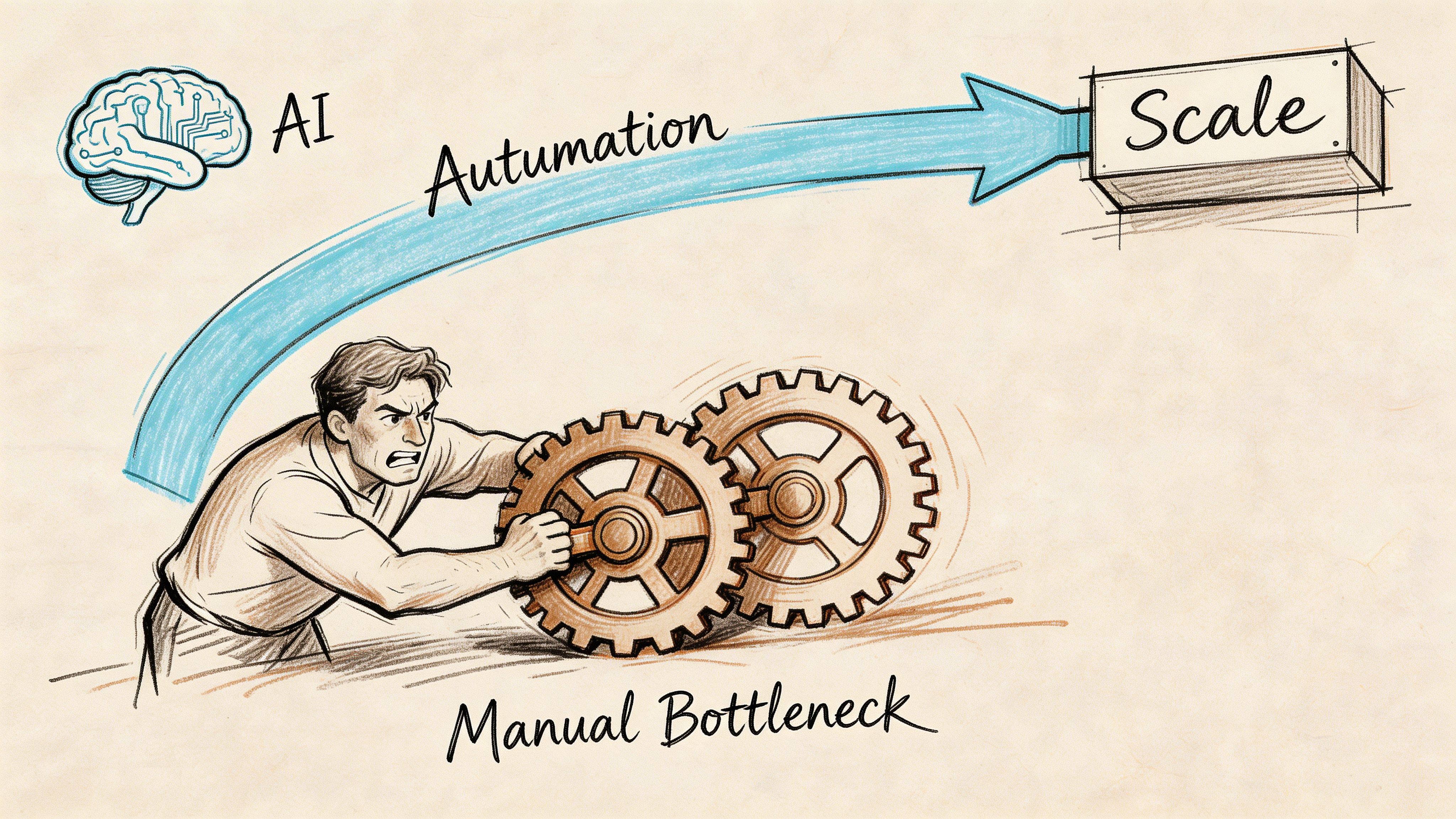

Manual execution is where most creative systems break.

The strategy may be sound. The hypotheses may be clear. The naming logic may even be documented. Then practical bottlenecks emerge. Editors have to duplicate timelines, resize versions, swap hooks, export dozens of files, rename them carefully, upload them, and map them into the right campaign structure.

That’s where velocity dies.

Production ops is the primary bottleneck

Teams often don’t need more ideas. They need a faster path from idea to live test.

The highest-friction tasks are usually:

- Asset tagging: Knowing what each clip contains

- Variant assembly: Turning one concept into many combinations

- Rendering: Exporting all versions without endless editor time

- Publishing: Pushing variants into Meta with naming consistency intact

If you’re comparing tools across the wider creator stack, this roundup of best AI tools for content creators is a useful starting point because it frames where automation helps across ideation, editing, and output.

What automation should do

Useful automation doesn’t replace strategy. It removes repetitive labor around strategy.

The best workflow improvements usually come from:

- Tagging clips into modules so hooks, bodies, and CTAs become searchable building blocks

- Generating combinations quickly so teams can test message families, not just single ads

- Applying consistent overlays and subtitles without hand-editing each variation

- Publishing directly to ad platforms with the naming structure already embedded

That’s the logic behind platforms built for performance creative operations. For example, https://sovran.ai/blog/ai-creative-automation-platform outlines a workflow where uploaded clips are tagged into reusable units, remixed into variants, and pushed to platforms with naming conventions applied. That matters when your issue is no longer “what should we test?” but “how do we operationalize enough testing volume without drowning the team?”

A quick walkthrough helps make that process more concrete:

Automation changes the shape of the team

Once repetitive production tasks shrink, buyers and strategists can spend more time on the work that compounds:

- spotting creative patterns

- briefing better angles

- reviewing test integrity

- promoting the right winners

- building the next matrix from evidence instead of instinct

That’s the advantage. Not fewer people thinking. More of their time spent on judgment instead of file handling.

A creative testing framework Meta ads program becomes much more durable when the machine around it is built for throughput.

Frequently Asked Questions

How much should I budget for a single creative test?

Use the thresholds from the frameworks already covered rather than guessing. Segwise’s methodology recommends $20 to $50 per variant and a 7 to 14 day test window in ABO for structured testing, while Pilothouse’s framework says lower-funnel reads need 30 to 50 conversions per variant before you can confidently separate signal from noise. The right number depends on your event cost, but the principle is consistent. Don’t judge an ad before it has enough room to be understood.

How do I test creative if my audience is very niche?

Consolidate more than you segment. If your audience pool is tight, putting every idea into its own tiny targeting pocket usually makes the read worse. Build one larger, relevant test audience where possible, keep the creative variable clean, and let the asset do more of the targeting work. The core logic of the framework doesn’t change. You just have less room for fragmentation.

Should I test statics, videos, and carousels in the same ad set?

Usually no, not if the goal is diagnosis. Format itself is a major variable. If you mix formats in one read, you can’t always tell whether the result came from message, visual style, or placement fit. Keep format comparisons clean. Test video against video, static against static, then compare learnings at a higher level once each format family has been evaluated fairly.

My winning test creative failed in my scaling campaign. What happened?

That’s common. Two usual causes show up.

First, the scaling environment is different. The test may have run in a more controlled structure, then moved into a setup with different budget behavior, audience dynamics, or neighboring ads. Second, the original win may not have been as durable as it looked. If scale data says the ad can’t hold, trust the larger environment and move on. Treat the failed graduation as a useful negative signal, then go back to the pattern behind the ad rather than forcing the exact asset.

If your team already has the creative raw material but struggles to turn it into a reliable testing engine, Sovran is built for that production and launch layer. It helps teams tag clips into modular assets, generate Hook-Body-CTA variants, batch render versions, and publish into Meta with consistent naming so testing moves faster and learnings stay organized.

Manson Chen

Founder, Sovran

Related Articles

Create Video Ads with AI That Perform in 2026

Mastering AI B Roll for Meta & TikTok Ads