Ad Creative Refresh Strategy: A Tactical Playbook

Jump to a section

- Reading the Signs When Your Ad Creative Goes Stale

- Planning Your Ad Refresh Cadence and Test Design

- Using Creative Frameworks for High-Velocity Production

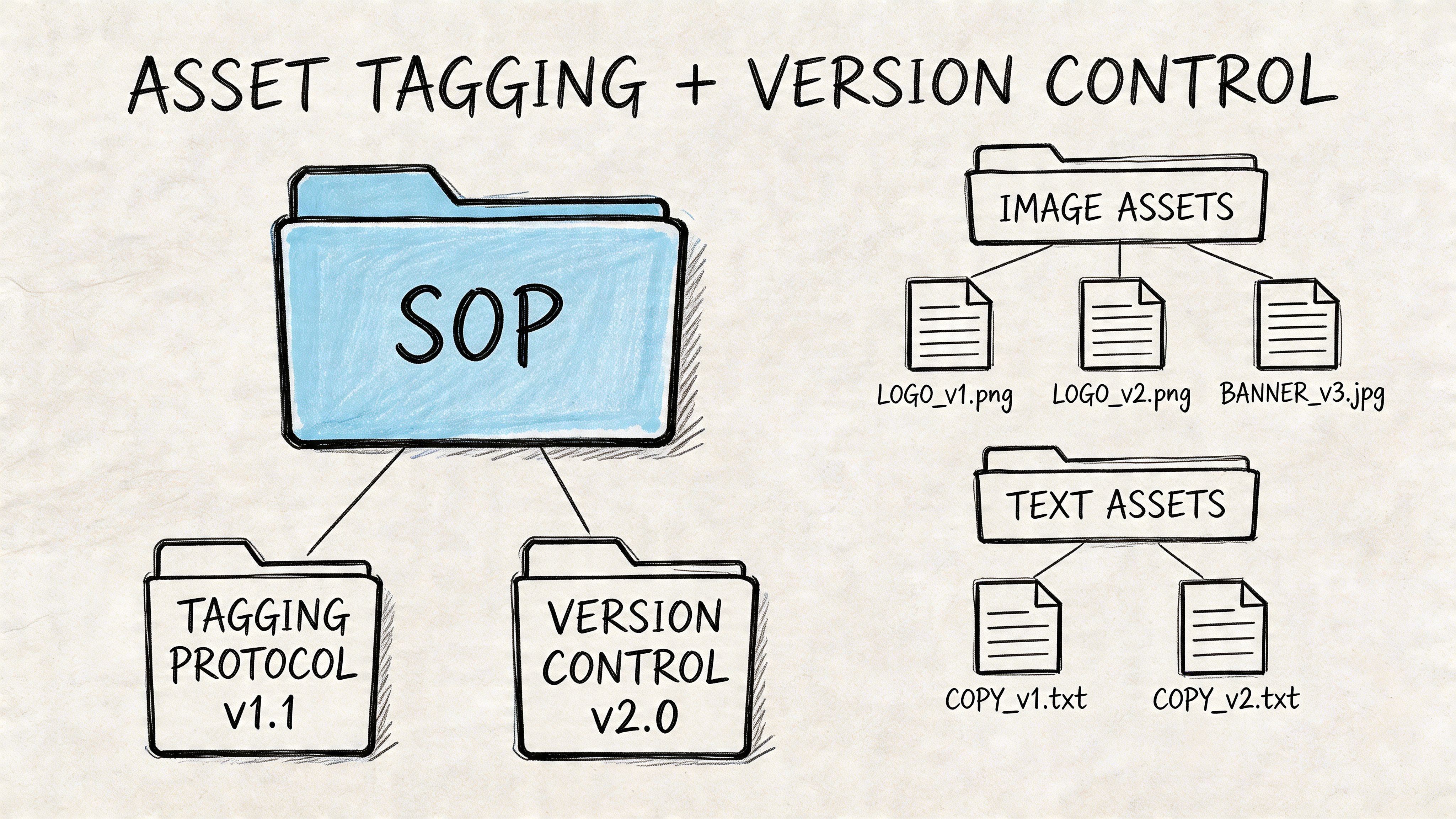

- An SOP for Ad Asset Tagging and Version Control

- Example Refresh Playbooks for Meta and TikTok

- How to Scale Your Refresh Strategy with Automation

- Frequently Asked Questions About Ad Creative Refreshes

You launch a campaign on Meta or TikTok, find a winner, scale spend, and then performance starts slipping in a way that feels annoyingly familiar. CTR softens first. CPC gets less forgiving. Conversion quality starts to wobble. A few days later, the media buyer blames the audience, the creative strategist wants more concepts, and nobody agrees on whether the ad is tired or just having a bad week.

That disagreement is the real problem. Nobody has defined what “tired” means.

A solid ad creative refresh strategy fixes that by turning creative replacement into an operating system instead of a scramble. You need clear fatigue signals, a refresh cadence tied to platform behavior, a production framework that can generate variations without wrecking your team, and a clean asset-management process so learnings don’t disappear into folders called “final_v2_final_REAL.”

This is the playbook performance teams end up building after enough wasted spend. It’s practical, platform-specific, and built for the reality that Meta and TikTok reward testing velocity but punish sloppy creative operations.

Reading the Signs When Your Ad Creative Goes Stale

A Meta ad can look fine in Ads Manager right up until it stops being efficient. Spend holds. Delivery looks steady. Then your click-through rate slips, CPC climbs, and the account starts asking one ad to do too much work.

That is usually the point where teams react too late.

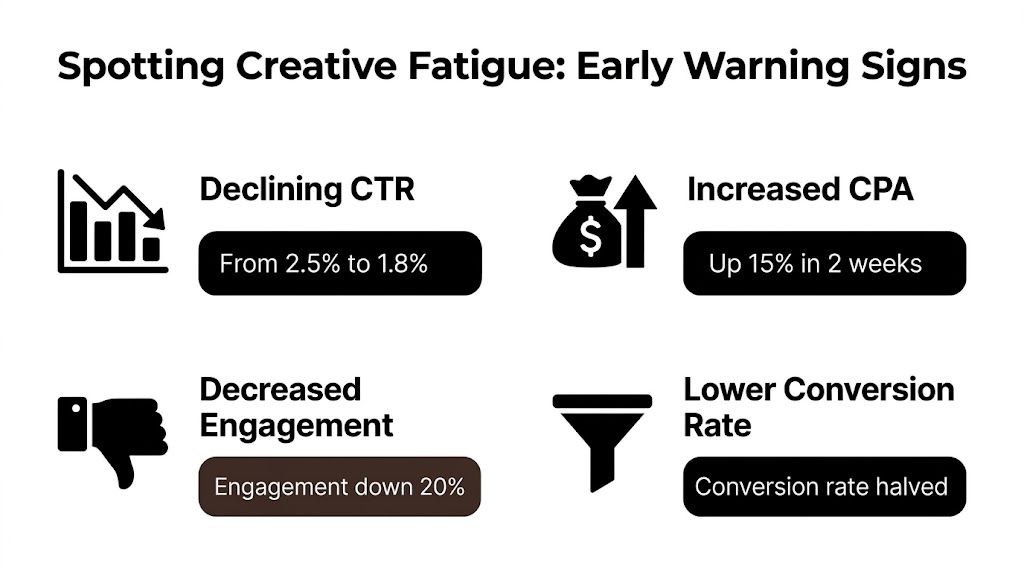

Creative fatigue is easier to manage when you define it as a repeatable operating signal instead of a vague feeling in the account. One useful benchmark, from this creative fatigue evaluation framework, is a week-over-week CTR drop in the 10 to 15% range. The same source recommends setting metric guardrails around CTR, CVR, CPI, and ROAS, then treating a refresh as actionable when two or more indicators move outside your tolerance band at the same time.
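As a rough illustration of that rule, the sketch below computes the week-over-week change for each guardrail metric and treats a refresh as actionable when two or more metrics move past their tolerance band. The `Guardrail` class, the function names, and every tolerance value except the 10% CTR floor are illustrative assumptions, not figures from the cited framework.

```python
from dataclasses import dataclass


def wow_change(current: float, previous: float) -> float:
    """Relative week-over-week change; -0.12 means a 12% drop."""
    if previous == 0:
        raise ValueError("previous-week value must be non-zero")
    return (current - previous) / previous


@dataclass(frozen=True)
class Guardrail:
    metric: str
    tolerance: float        # largest tolerated relative move in the bad direction
    higher_is_better: bool  # True for CTR, CVR, ROAS; False for cost metrics


# Hypothetical tolerance bands. Only the CTR value reflects the
# 10 to 15% range mentioned in the text; tune the rest per account.
GUARDRAILS = [
    Guardrail("ctr", 0.10, True),
    Guardrail("cvr", 0.10, True),
    Guardrail("cpi", 0.15, False),
    Guardrail("roas", 0.10, True),
]


def breached_metrics(this_week: dict, last_week: dict) -> list:
    """Return the metrics whose adverse move meets or exceeds tolerance."""
    breached = []
    for g in GUARDRAILS:
        change = wow_change(this_week[g.metric], last_week[g.metric])
        adverse = -change if g.higher_is_better else change
        if adverse >= g.tolerance:
            breached.append(g.metric)
    return breached


def refresh_is_actionable(this_week: dict, last_week: dict, min_breaches: int = 2) -> bool:
    """A refresh is actionable when at least `min_breaches` guardrails are hit."""
    return len(breached_metrics(this_week, last_week)) >= min_breaches
```

In this sketch, a CTR that falls from 2.0% to 1.7% is a 15% drop and counts as one breach. It only becomes actionable if a second metric, such as CPI, also moves past its band in the same week.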

Build your dashboard around leading indicators

If you only watch CPA, you find fatigue after it has already hit margin. Meta and TikTok both give you earlier clues, but you need to track them against the ad’s own baseline, not in isolation.

These are the signals worth keeping on the dashboard:

- CTR trend: Compare the last 3 to 7 days to the ad’s stronger period after stabilization.

- CVR drift: Steady click volume with weaker conversion often means the message is attracting lower-intent traffic.

- Frequency acceleration: Rising exposure usually shows up before efficiency breaks, especially in narrower audiences.

- Hook performance: On TikTok and Reels, weak opening seconds can drag the whole ad down before CPA fully reflects it.

- Post-click quality: For app and lead gen accounts, weaker onboarding or lead quality can signal message fatigue before top-line platform metrics collapse.

The same source notes that lower hook rates often call for fresh opening frames, and that weaker onboarding completion versus cohort baselines can expose ad-product mismatch in app campaigns. It also notes that frequency pressure often appears several days before broader deterioration, especially in smaller geos and competitive auction periods.

Use a rule your team can apply fast. If two leading indicators soften together, queue a refresh review. If three move at once, replace or iterate the asset.

A lot of teams talk about creative fatigue as if it is just audience boredom. In live accounts, it is usually a mix of repetition, reduced novelty, and the platform finding a slightly different pocket of users than the ad originally won with.

Watch the pattern across days, not one ugly report

One bad day is not a signal. Promo timing, learning resets, pay cycles, and placement mix can all distort short windows.

A cleaner setup is to classify ads into operating states:

| State | What you’re seeing | What to do |
|---|---|---|
| Healthy | Core metrics are stable or improving | Keep spending, keep testing variations in the background |
| At risk | Two metrics are drifting toward your thresholds | Prepare replacement assets and reduce reliance on one winner |
| Fatigued | Multiple trigger conditions are hit across the same window | Swap creative, then review whether the angle, hook, or format failed |

Creative refresh decisions break down when every stakeholder uses a different definition. The media buyer sees CPA. The strategist sees thumb-stop rate. The founder sees blended revenue. A shared fatigue framework keeps those conversations grounded in the same evidence.
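One way to make that shared definition concrete is to classify each ad from a multi-day window rather than a single report. The sketch below is a minimal version under stated assumptions: each metric is supplied as a list of daily “adverse moves” (relative deterioration versus the ad’s own baseline, where positive means worse), and the `warn` and `trigger` cutoffs are hypothetical placeholders.

```python
from statistics import mean


def metric_status(daily_adverse_moves, warn=0.05, trigger=0.10):
    """Label one metric from its average adverse move across the window.

    Averaging over the window keeps a single bad day from tripping the state.
    The warn/trigger cutoffs are illustrative assumptions.
    """
    avg = mean(daily_adverse_moves)
    if avg >= trigger:
        return "breached"
    if avg >= warn:
        return "drifting"
    return "ok"


def operating_state(window):
    """Map a {metric: [daily adverse moves]} window to an operating state."""
    statuses = [metric_status(moves) for moves in window.values()]
    breached = statuses.count("breached")
    drifting = statuses.count("drifting")
    if breached >= 2:
        return "fatigued"   # multiple trigger conditions hit in the same window
    if breached + drifting >= 2:
        return "at_risk"    # two or more metrics heading toward their thresholds
    return "healthy"
```

With these cutoffs, one ugly CTR day inside an otherwise flat week leaves the ad in `healthy`, while sustained slippage in both CTR and CPA moves it to `at_risk` or `fatigued`.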

If you want a more operational way to diagnose and respond, this guide on creative fatigue solutions is useful because it treats fatigue as a workflow issue tied to testing, replacement speed, and asset management.

What stale creative looks like on-platform

On Meta, fatigue usually looks gradual. One ad absorbs more and more delivery, frequency rises, and efficiency compresses even though the ad still wins enough auctions to keep spending. That is why many accounts hold volume longer than they hold profitability.

On TikTok, the drop is often sharper. The first seconds stop earning attention, the comment section can still look active, and the ad keeps spending just long enough to fool the team into waiting another few days.

The practical mistake is blaming the audience first. In many accounts, the audience is still usable. The hook, message, or visual treatment is what stopped matching the current auction and user behavior.

That distinction matters because the fix is different. You do not just need a new ad. You need a system that tells you which metric triggered the refresh, which component likely failed, and what version should replace it.

Planning Your Ad Refresh Cadence and Test Design

A useful refresh cadence starts with a real account constraint.

You are scaling a Meta prospecting campaign, one ad is taking most of the spend, and results are still passable enough that nobody wants to touch it. Then CPA starts drifting, frequency keeps climbing, and the replacement queue is empty. At that point, cadence is no longer a planning exercise. It is an operations problem.

A good refresh plan solves that before performance slips. It ties creative replacement to clear triggers, a production schedule, and a test structure your team can repeat every week.

Set cadence by platform pressure and spend concentration

Meta and TikTok do not burn through creative at the same pace. Meta often gives you a slower decline, especially in broader audiences where one strong ad can keep winning auctions longer than it should. TikTok usually punishes stale openings faster. If the first seconds stop earning attention, the ad can fall off quickly even when the offer has not changed.

Spend concentration matters just as much as platform behavior. If one ad or one creator is absorbing a disproportionate share of delivery, refresh sooner. If delivery is spread across a wider pool of assets, you have more room to rotate on a planned schedule instead of reacting late.
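Spend concentration is easy to quantify. The sketch below computes each ad’s share of spend and lists any ad above a cap. The 50% cap and the function names are assumptions for illustration; the text does not prescribe a specific cutoff.

```python
def spend_shares(spend_by_ad):
    """Return each ad's share of total spend as a fraction between 0 and 1."""
    total = sum(spend_by_ad.values())
    if total <= 0:
        return {ad: 0.0 for ad in spend_by_ad}
    return {ad: spend / total for ad, spend in spend_by_ad.items()}


def over_concentrated(spend_by_ad, max_share=0.5):
    """List ads absorbing more than `max_share` of delivery (hypothetical cap)."""
    shares = spend_shares(spend_by_ad)
    return [ad for ad, share in shares.items() if share > max_share]
```

An ad set where one creative takes 700 of 1,000 in spend would flag that creative, which is the cue to pull its replacements forward rather than wait for the planned rotation.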

In practice, many teams set two layers of cadence:

- Planned review cadence: weekly on Meta, two to three times per week on TikTok

- Triggered refresh cadence: immediate replacement when lead metrics or efficiency metrics cross your threshold

That distinction keeps the team honest. You still review on a schedule, but you do not wait for Monday if a top-spend TikTok creative is clearly rolling over on Thursday.
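The two layers can be expressed as a single check. This is a minimal sketch: the 7-day Meta interval follows the weekly cadence above, while the 3-day TikTok interval is an assumed approximation of “two to three times per week.” The function and constant names are hypothetical.

```python
from datetime import date, timedelta

# Planned review intervals in days. The TikTok value is an assumption.
REVIEW_INTERVAL_DAYS = {"meta": 7, "tiktok": 3}


def review_due(platform, last_review, today, threshold_crossed):
    """Return True when a creative needs attention today.

    A crossed trigger threshold always wins over the calendar.
    """
    if threshold_crossed:
        return True
    interval = timedelta(days=REVIEW_INTERVAL_DAYS[platform])
    return today - last_review >= interval
```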

Build trigger thresholds before you need them

Refresh decisions get messy when every stakeholder has a different tolerance for decline. Set thresholds in advance at the ad level and use them consistently.

A practical setup looks like this:

- Meta early-warning trigger: CTR starts softening, hook rate drops, frequency rises, and CPA is drifting but not broken

- Meta replacement trigger: CPA is outside target for multiple days at meaningful spend, or the ad is taking too much budget relative to the rest of the creative pool

- TikTok early-warning trigger: hold rate or thumb-stop behavior weakens fast, spend remains active, and conversion rate starts compressing

- TikTok replacement trigger: the ad loses attention early and no longer justifies learning spend

These do not need to be universal benchmarks across every account. A subscription app, a high-AOV ecommerce brand, and a B2B lead gen campaign should not use the same cutoffs. What matters is that your team agrees on the trigger, the owner, and the replacement path.

Design tests to answer one question at a time

Refreshing creative without a test design usually creates noise. The account gets new ads, but the team does not know why the winner won.

The fix is simple. Change one major variable per test batch whenever possible.

Use a structure like this:

- Hook test: same body and CTA, different opening line or first visual

- Angle test: same product and offer, different promise or problem framing

- Proof test: same angle, different evidence such as UGC, demo, testimonial, or founder clip

- CTA test: same message, different closing ask matched to user intent

This is the part many teams skip. They brief a “new version” that changes creator, script, edit style, headline, and offer framing all at once. That can still produce a winner, but it produces weak learning. If you are trying to build a repeatable refresh machine, clean reads matter more than creative chaos.
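A simple guard can catch muddled briefs before they ship. The sketch below compares a variant against its control and accepts it as a clean test only when exactly one of the four variables differs. The dictionary keys and label values are illustrative assumptions.

```python
VARIABLES = ("hook", "angle", "proof", "cta")


def changed_variables(control, variant):
    """List which test variables differ between the control and a variant."""
    return [name for name in VARIABLES if control[name] != variant[name]]


def is_clean_test(control, variant):
    """A clean read changes exactly one major variable."""
    return len(changed_variables(control, variant)) == 1
```

A variant that swaps both the hook and the proof format would fail this check, which is a prompt to split it into two variants or accept that the result will be harder to interpret.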

Match the size of the refresh to the fatigue level

Not every decline calls for a full rebuild. Teams waste time when they remake the whole ad for a small problem, and they waste budget when they make cosmetic edits to a creative that is already exhausted.

Use this operating rule:

| Fatigue level | What to change | Typical use case |
|---|---|---|
| Early fatigue | Replace the first seconds, headline framing, opening visual pattern, or caption hook | The angle still works, but attention is slipping |
| Mid-stage fatigue | Change the hook and proof layer, keep the core message | The promise still resonates, but the delivery feels overexposed |
| Advanced fatigue | Rebuild the concept with a new angle, structure, and proof style | The audience has seen the message enough times that minor edits no longer register |

This approach gives production a clear brief. It also keeps media buying aligned with creative. The buyer knows whether to expect an iteration that protects existing learning or a net-new concept that needs more room to stabilize.

Use a repeatable variant matrix

The strongest teams do not ask, “What should we make next?” every time a creative fades. They work from a controlled matrix tied to the account’s current needs.

A simple matrix might include:

- 3 hook variants for the current winning angle

- 2 proof variants for the same angle

- 1 CTA variant for lower-intent traffic

- 2 net-new angles entering the next test cycle

That gives you both defense and upside. You are protecting performance with iterations while still opening paths to the next winner.
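The matrix above can live as data so each sprint starts from the same template. The sketch below expands it into production tickets. The `MatrixSlot` class, the ticket string format, and the example angle name are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MatrixSlot:
    variable: str  # which layer changes: hook, proof, cta, or angle
    count: int     # how many variants to request
    purpose: str   # "iteration" defends the winner, "net_new" seeks the next one


# Mirrors the example matrix: 3 hooks, 2 proofs, 1 CTA, 2 net-new angles.
SPRINT_MATRIX = [
    MatrixSlot("hook", 3, "iteration"),
    MatrixSlot("proof", 2, "iteration"),
    MatrixSlot("cta", 1, "iteration"),
    MatrixSlot("angle", 2, "net_new"),
]


def sprint_tickets(matrix, winning_angle):
    """Expand the matrix into one ticket label per requested variant."""
    tickets = []
    for slot in matrix:
        base = winning_angle if slot.purpose == "iteration" else "new"
        for n in range(1, slot.count + 1):
            tickets.append(f"{slot.purpose}:{slot.variable}:{base}:{n:02d}")
    return tickets
```

Calling `sprint_tickets(SPRINT_MATRIX, "social-proof")` yields eight tickets, six of them iterations on the current winner and two reserved for new angles.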

If you need a tighter planning model, this guide on how many ad variations to test is a useful reference before you set your next sprint.

Separate meaningful change from cosmetic change

A lot of “new” creative is not new from the platform or user perspective. A different crop, updated subtitle styling, or a new thumbnail can help at the margin. It usually does not carry a fatigued ad on its own.

Use this filter before approving a refresh:

| Refresh type | Usually strong | Usually weak |
|---|---|---|
| Opening | New first scene, new spoken hook, different visual pattern break | Same script with a new text treatment |
| Message | Different pain point, benefit order, or claim framing | Minor copy edits to the same claim |
| Proof | New creator, new demo context, different testimonial format | Same asset sequence in a different order |
| CTA | New close matched to awareness or intent level | Generic button-copy changes |

The goal is not volume for its own sake. The goal is a refresh system that tells you when to ship an iteration, when to replace the concept, and what learning each batch should produce. That is how you keep Meta and TikTok fed without turning creative testing into random output.

Using Creative Frameworks for High-Velocity Production

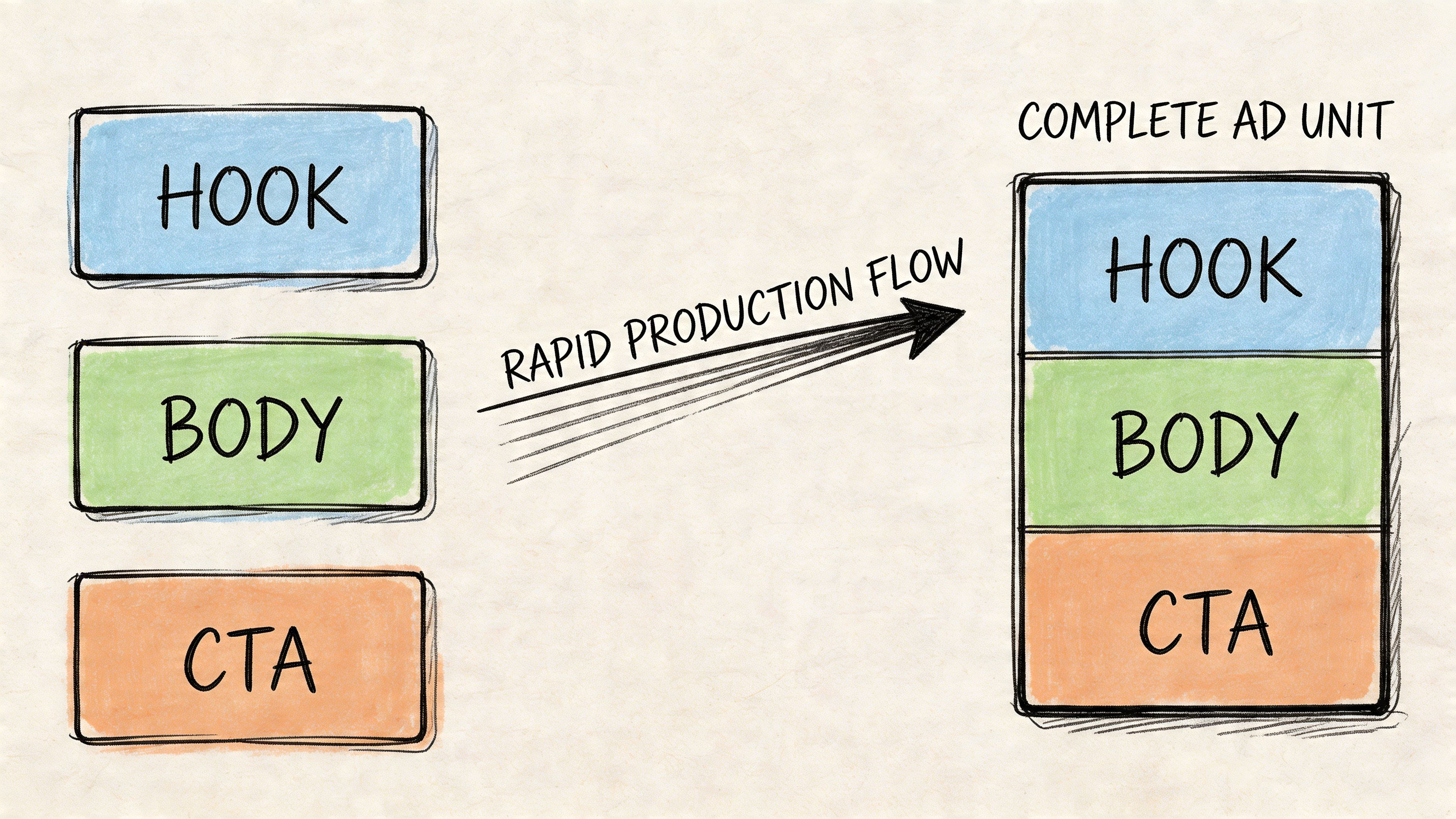

If your refresh strategy depends on inventing a brand-new concept every time an ad slows down, you’ll lose the production battle. The teams that keep Meta and TikTok healthy don’t create from scratch for every refresh. They build from modules.

The most durable framework is still Hook, Body, CTA.

It works because those are the three layers most performance teams need to manipulate. You can rewrite the opening without throwing away the sales argument. You can keep the same CTA while swapping the proof. You can preserve the core message while changing how the audience enters the story.

The modular creative stack

Think of your ad library as a parts inventory, not a gallery of finished videos.

A practical modular system usually includes:

- Hooks: problem-first lines, curiosity openers, blunt benefit statements, creator-led confessions, direct comparisons

- Bodies: product demo clips, UGC testimonials, screen recordings, before-and-after explanations, feature walkthroughs

- CTAs: soft trial asks, urgency-driven closes, social proof closes, app-install pushes, product-detail prompts

Once you start working this way, refreshing gets faster and smarter. You stop asking for “five new videos” and start asking for “three new cold-audience hooks, two alternative proof sections, and one stronger closing ask for high-intent users.”

That sounds operational because it is. Creative velocity is a production problem before it’s a design problem.

Where brand teams and performance teams collide

High-volume testing creates a real tension. Brand systems are usually built for a small number of polished hero assets. Performance systems need many more variants. Existing guidance often doesn’t reconcile that conflict, especially when modular assembly is required at scale, as noted in this discussion of refreshing creatives without losing consistency.

Teams need an on-brand variation framework, not a vague instruction to “stay consistent.”

Separate your creative elements into two groups:

Non-negotiable brand markers

These should remain stable across variations.

- Visual identity: logo treatment, type system, recurring color logic

- Message boundaries: claims you can make, claims you can’t make

- Tone guardrails: playful, expert, premium, plainspoken

- Product truth: the core promise the ad must not distort

Flexible performance variables

These should move constantly.

- Who delivers the message

- How the problem is framed

- Which proof format you use

- How fast the pacing feels

- What the first three seconds emphasize

Brand consistency doesn’t require every ad to look the same. It requires every ad to feel recognizably true to the offer.

That distinction gives creative strategists room to produce volume without turning the account into a collage of mismatched experiments.

A practical production brief

If you want output fast, stop briefing full ads and start briefing components.

A useful brief for a weekly sprint includes:

| Component | What to request |
|---|---|
| Hooks | New openings by audience pain point, awareness stage, and creator style |
| Bodies | Demo sections, testimonial segments, objection handling, feature-led proof |
| CTAs | Different closes mapped to install, purchase, lead, or trial intent |
| Formats | Feed video, Story cut, Reels-safe version, TikTok-native cut |
| Constraints | Brand markers that must stay fixed |

After you define those parts, your editors or automation stack can assemble many combinations without restarting the concept process each time. This piece on a modular video ad framework is a strong reference if you’re trying to formalize that workflow inside an in-house team or agency.
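To show why components scale better than full ads, here is a minimal sketch of combinatorial assembly using `itertools.product` from the Python standard library. The component labels and the `exclude` parameter (for pairings that brand or legal has ruled out) are hypothetical.

```python
from itertools import product


def assemble_variants(hooks, bodies, ctas, exclude=frozenset()):
    """Build every hook/body/CTA combination, skipping excluded triples."""
    variants = []
    for hook, body, cta in product(hooks, bodies, ctas):
        if (hook, body, cta) in exclude:
            continue
        variants.append({"hook": hook, "body": body, "cta": cta})
    return variants
```

Three hooks, two bodies, and one CTA produce six candidate ads from six components. In practice you would launch a subset of those rather than all of them, so each test still answers one question.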


What works and what doesn’t

Here’s the blunt version.

What works:

- Recombining proven elements into new perceived ads

- Refreshing hooks first when attention is fading

- Keeping a backlog of alternate proof assets so you’re not stuck with one testimonial style

- Letting platform context shape the edit, especially on TikTok

What doesn’t:

- Treating every winner as untouchable

- Forcing brand-polished edits onto native environments

- Asking designers to invent endlessly without a module system

- Calling tiny cosmetic edits a refresh

The teams that sustain velocity aren’t more inspired. They’re more organized.

An SOP for Ad Asset Tagging and Version Control

Creative operations usually break in the same place. The team can make ads, but it can’t find anything later.

Someone asks for “that UGC clip where the creator opens with the problem and then shows the product in a bathroom mirror.” Three people search Drive. One editor checks Dropbox. A strategist posts a Slack message. Ten minutes later, somebody exports the wrong cut and names it final_v4.

That chaos kills refresh speed.

Use a naming convention that encodes meaning

Every asset name should tell you what it is without opening the file.

A practical pattern looks like this:

[Brand]_[Platform]_[Audience]_[Angle]_[Format]_[Creator]_[Date]_[Version]

Example: Acme_Meta_Prospecting_SocialProof_UGC_Ana_2026-04-17_V03

That structure solves several problems at once. You can sort by platform, filter by audience intent, identify the angle, and know whether you’re looking at a rough cut or current approved version.
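A naming convention only holds if something enforces it. The sketch below parses a name in the underscore-separated form used by the example and bumps the version without overwriting anything. The regular expression assumes each field is alphanumeric with no internal underscores and that versions look like `V03`; both are assumptions about how a team might apply the pattern.

```python
import re
from datetime import date

# Assumes alphanumeric fields, an ISO date, and a zero-padded version like V03.
NAME_RE = re.compile(
    r"^(?P<brand>[A-Za-z0-9]+)_(?P<platform>[A-Za-z0-9]+)_"
    r"(?P<audience>[A-Za-z0-9]+)_(?P<angle>[A-Za-z0-9]+)_"
    r"(?P<format>[A-Za-z0-9]+)_(?P<creator>[A-Za-z0-9]+)_"
    r"(?P<date>\d{4}-\d{2}-\d{2})_V(?P<version>\d{2,})$"
)


def parse_asset_name(name):
    """Split an asset name into its fields, or raise if it breaks convention."""
    match = NAME_RE.match(name)
    if match is None:
        raise ValueError(f"asset name does not follow the convention: {name!r}")
    parts = match.groupdict()
    parts["date"] = date.fromisoformat(parts["date"])
    parts["version"] = int(parts["version"])
    return parts


def next_version_name(name):
    """Return the same name with the version incremented by one."""
    parts = parse_asset_name(name)
    prefix = name.rsplit("_V", 1)[0]
    return f"{prefix}_V{parts['version'] + 1:02d}"
```

Running the parser as an upload check rejects names like `final_v4` before they reach the library.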

Add tags for how the asset behaves

File names are only part of the system. Tags make the library searchable in a way media buyers and creative strategists can use.

Tag at two levels.

Creative attributes

These describe what the asset contains.

- Style tags: UGC, founder, demo, testimonial, screen-recording, motion-graphic

- Hook tags: problem-first, curiosity, direct-benefit, comparison, objection-led

- Proof tags: review, feature-demo, social-proof, transformation, authority

- CTA tags: install-now, learn-more, try-it, shop-now, low-friction

Operational tags

These describe how the asset should be used.

- Status: draft, approved, live, paused, archived

- Channel fit: Meta Feed, Reels, Stories, TikTok

- Audience fit: cold, warm, retargeting

- Brand approval: cleared, restricted, legal-review

- Performance notes: winner-element, weak-hook, strong-body, reusable-cta

If your team can’t search by hook type, proof style, and audience fit, your library is a storage bin, not a performance asset.
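Searching by tag does not need heavy tooling to start. The sketch below filters an in-memory library where each asset carries a dictionary of tag groups. The data shape, the group names (`hook`, `proof`, `audience_fit`, `status`), and the asset names are illustrative assumptions; a real library would more likely sit in a DAM or a database.

```python
def find_assets(library, **required):
    """Return names of assets whose tags include every required value.

    `library` is a list of {"name": str, "tags": {group: set_of_values}}.
    Each keyword argument names a tag group and the value it must contain.
    """
    matches = []
    for asset in library:
        tags = asset["tags"]
        if all(value in tags.get(group, ()) for group, value in required.items()):
            matches.append(asset["name"])
    return matches
```

A query such as `find_assets(library, hook="problem-first", audience_fit="cold", status="approved")` returns only launch-ready assets that match the hook type and audience.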

Version control needs rules, not memory

A clean SOP prevents accidental overwrites and duplicate learning loss.

Use rules like these:

- Never replace an exported live file. Create a new version every time.

- Lock approved masters. Editors work from duplicates, not live finals.

- Keep edit files and exported deliverables separated.

- Store performance notes next to the asset record, not in someone’s head.

- Archive retired assets with tags intact. Fatigued ads still contain reusable parts.

A lightweight folder structure works well:

| Folder | Purpose |
|---|---|
| 01 Raw Assets | Original creator footage, product clips, screenshots, VO |
| 02 Cut Components | Isolated hooks, bodies, CTAs, captions, B-roll |
| 03 Assembly Files | Timeline projects and working edits |
| 04 Approved Exports | Ready-to-launch versions only |
| 05 Archive | Retired assets with tags and notes preserved |

The handoff standard that saves time

A significant payoff comes when media, creative, and production all use the same metadata language.

A handoff record for each launched ad should include:

- Campaign context

- Target audience

- Primary angle

- Hook type

- Proof type

- CTA used

- Launch date

- Current status

- Notable learnings

That way, when an ad starts to weaken, you don’t just replace it. You query the asset bank for adjacent variants that fit the same audience and angle.
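The handoff fields above map naturally onto a typed record. The sketch below is one possible shape, with a helper that finds approved variants sharing a fatigued ad’s audience and angle. The `asset_name` field, the status values, and the function name are assumptions added for illustration.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class HandoffRecord:
    asset_name: str               # hypothetical link back to the asset file
    campaign_context: str
    target_audience: str
    primary_angle: str
    hook_type: str
    proof_type: str
    cta: str
    launch_date: Optional[date]   # None until the asset has launched
    status: str                   # e.g. draft, approved, live, paused, archived
    learnings: str = ""


def adjacent_variants(records, fatigued):
    """Find approved, not-yet-live variants for the same audience and angle."""
    return [
        r for r in records
        if r.asset_name != fatigued.asset_name
        and r.target_audience == fatigued.target_audience
        and r.primary_angle == fatigued.primary_angle
        and r.status == "approved"
    ]
```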

If you’re building this discipline from scratch, a guide on asset management best practices can help turn the naming and tagging logic into a repeatable team process.

Example Refresh Playbooks for Meta and TikTok

Monday morning, Meta is still spending into last week’s winner, but CTR is drifting down and your new variants are barely taking delivery. TikTok is a different problem. The same product angle can feel tired within days because the opening no longer earns the first second of attention.

Both platforms want fresh creative. They define freshness differently, and your refresh system has to reflect that.

As noted earlier, TikTok usually needs a tighter replacement cycle than Meta. Meta can keep spending on a strong concept longer if you introduce meaningful variation around the core message. TikTok burns through openings, creator delivery, and visual context faster. If you run one shared production rhythm across both, one platform gets starved and the other gets cluttered.

Meta versus TikTok in practice

| Variable | Meta (Facebook/Instagram) | TikTok |
|---|---|---|
| Refresh pressure | Moderate but persistent | Fast and unforgiving |
| What usually fades first | CTR efficiency and similarity fatigue | Hook strength and native feel |
| Best first intervention | New hooks, fresh proposition framing, distinct visual treatment | New creator delivery, new opening pattern, faster cultural fit |
| Creative style that holds up | Clear offer, structured proof, polished but not sterile | Native, immediate, personality-led, less obviously “ad” |
| Operational risk | Over-relying on one winner, similarity across variants | Burning through concepts too slowly |
| Testing mindset | Controlled iteration around angle and proof | High-velocity variation around hook and delivery style |

A Meta refresh playbook

On Meta, stale creative often looks stable right before it slips. Spend still concentrates on the old winner, frequency creeps up, and the replacements look different to your team but not different enough to the auction.

Use a practical sequence:

- Start with the winning structure. Identify the angle, proof sequence, offer framing, and first-frame pattern that made the ad work.

- Decide what stays and what changes. Keep one or two proven components. Replace the rest with clearly different inputs.

- Batch-launch variants with real separation. Change more than the creator or headline. Change pacing, shot selection, text treatment, order of proof, or visual context.

- Judge refreshes by spend uptake and efficiency, not approval alone. A variant that launches but never exits learning is not a useful replacement.

The trade-off on Meta is straightforward. If you change too little, the platform treats your new ad like a close cousin of the old one and you learn very little. If you change too much, you may throw away a message architecture that still had room to run. Good teams preserve the core selling idea and rotate the expression.

A simple weekly Meta queue might include:

- 2 to 3 new hooks built on the same offer

- 2 proof-led edits that reorder testimonials, demos, or before-and-after framing

- 1 or 2 angle extensions for adjacent objections or use cases

That gives media buyers enough contrast to test without flooding the account with low-signal variations.

A TikTok refresh playbook

TikTok punishes repetition at the surface level first. The product claim may still be fine. The issue is that the delivery already feels seen.

That changes the refresh order.

- Replace the opening line first. New first seconds often do more than a full re-edit of the middle.

- Rotate the person, setting, or camera behavior. A new face in a new environment can reset attention faster than cleaner editing.

- Use comments, search terms, and organic posts as inputs. Audience language usually gives you the next hook faster than a blank brief.

- Cut more versions from the same concept before you abandon it. On TikTok, concept exhaustion and execution exhaustion are different problems.

For teams still tightening setup and workflow discipline, this guide to mastering TikTok Ads Manager is useful because weak campaign structure can hide whether the creative failed.

The biggest mistake on TikTok is overproducing the wrong thing. Teams spend time polishing motion graphics, captions, and transitions when the core issue is that the first line sounds like ad copy. TikTok usually responds better to a new human delivery pattern than to a prettier cut.

How to split production across both platforms

Do not divide output 50/50 by default. Allocate by decay rate and by how much variation each platform needs to stay competitive.

A working model looks like this:

- Meta: fewer total concepts, more deliberate variation within each concept

- TikTok: more openings, more creators, more environmental changes, more edit density

If the same source footage is feeding both channels, create platform-specific branches early. One branch should preserve a cleaner proof-led structure for Meta. The other should prioritize native hooks, faster cuts, and creator-led delivery for TikTok. Teams using an AI creative automation platform can speed up that branching process, especially when one approved concept needs multiple platform-specific versions in the same week.

The operating principle is simple. Meta rewards distinct relevance. TikTok rewards renewed attention. Build your refresh queue around that difference, and your team stops guessing which ads to remake and starts replacing fatigue with intent.

How to Scale Your Refresh Strategy with Automation

Manual refresh systems work up to a point. Then volume breaks them.

The pressure shows up in familiar ways. Analysts are manually checking fatigue signals. Editors rebuild the same ad in multiple aspect ratios. Strategists chase old clips through folders. Media buyers wait on exports. None of those tasks are strategic, but all of them slow down your response time.

Automation matters because a real ad creative refresh strategy is repetitive by design. You’re monitoring signals, recombining modules, tagging assets, generating variants, routing approvals, and pushing live files into platform workflows. If that entire chain depends on spreadsheets and Slack threads, scale gets messy fast.

What to automate first

Start with the tasks that are high-frequency and low-judgment:

- Asset tagging: scene detection, transcript parsing, hook identification, creator recognition

- Variant assembly: recombining hooks, bodies, and CTAs into approved templates

- Format adaptation: turning source edits into feed, Story, Reels, and TikTok-safe outputs

- Workflow triggers: alerting the team when fatigue conditions are met

- Platform delivery: moving approved ads into launch-ready structures faster

This is where tooling begins to separate tactical teams from operationally mature ones. Some teams use a mix of Airtable, Frame.io, Google Drive, and manual editing systems. That can work. But once you’re running lots of variations, integrated creative automation becomes easier to justify.

One option in that category is an AI creative automation platform, which combines asset tagging, modular editing, and export workflows so teams can assemble and launch large batches without rebuilding every ad by hand. Sovran is one example. It’s built for modular video testing on Meta and can push large sets of variations from hooks, bodies, and CTAs into workflow-ready outputs.

The strategic shift that matters

The biggest change isn’t speed by itself. It’s how your team starts thinking.

Instead of asking, “Can we make fresh ads in time?” you start asking:

- Which creative elements are reusable?

- Which fatigue signals should trigger automated prep?

- Which audiences need separate variation pools?

- Which approvals can be templated instead of repeated?

The best automation doesn’t replace creative judgment. It removes the busywork that prevents creative judgment from being used where it matters.

That’s the key upgrade. You stop running refreshes as isolated rescue missions and start operating a creative system that’s designed for ongoing turnover.

Frequently Asked Questions About Ad Creative Refreshes

How often should you refresh ad creative?

There isn’t a single universal answer. According to this analysis of refresh frequency gaps, the industry still lacks clear frameworks that tie refresh timing to variables like channel, audience size, and budget. Reviewing creative every 4 to 6 weeks is often treated as a useful rule of thumb, but the same analysis finds that guidance falls short for teams running multivariate testing at scale. In practice, use platform behavior and your own trigger thresholds rather than a fixed calendar alone.

What’s the difference between a refresh and an iteration?

A refresh changes enough of the ad that the audience and platform perceive it as new. An iteration is a controlled variation based on an existing winner. Iterations are usually the better first move because they preserve learnings. Full refreshes make more sense when the ad is clearly exhausted or the message itself is no longer pulling.

Does a bigger budget always mean faster fatigue?

Usually, yes, because higher spend cycles your ads through the reachable audience faster. But don’t reduce it to spend alone. Audience breadth, placement mix, and how many variations are in rotation all shape how long a creative stays productive.

Should you pause a fatigued winner immediately?

Not always. If the ad is still contributing and you don’t have a stronger replacement ready, a partial reduction can be smarter than a hard pause. The key is not to let one aging winner carry the account while new variants are still missing.

Is changing the hook enough?

Sometimes. On TikTok especially, a new opening can materially change how the same core message performs. On Meta, you often need a broader perceived difference, especially if several ads already share similar visual logic.

How many new ads should be in the queue before launch?

Enough that you’re not forcing one ad to do all the work. The exact number depends on your spend level, platform mix, and production capacity. What matters is having a backlog of approved variants before fatigue becomes urgent.

Can you stay on-brand while producing at high volume?

Yes, but only if you define what must stay fixed and what should stay flexible. Teams run into trouble when brand rules are too vague for editors or too rigid for performance testing. Clear guardrails solve most of that tension.

If your team is stuck refreshing ads manually, juggling edits across tools, and losing time every time a winner fades, Sovran is worth a look. It’s built for performance marketers who need to turn existing footage into modular test-ready variations, organize assets for fast reuse, and keep Meta creative pipelines moving without slowing down quality control.

Manson Chen

Founder, Sovran
