Deepfake Video Makers Unmasked for Marketers in 2026

Uncover the risks and safe alternatives to a deepfake video maker. Learn to leverage ethical AI for video ads on Meta & TikTok without harming your brand.


A deepfake video maker is a piece of AI software that creates hyper-realistic—but fake—video and audio. It works by taking existing media and mashing it up, replacing one person's face with another or even synthesizing someone's voice to say things they never said.

How a Deepfake Video Maker Actually Works

So, how does this tech pull off such convincing fakes? It all comes down to a clever AI setup.

Think of it like an expert art forger and a sharp-eyed critic locked in a digital workshop. The forger, which we'll call the generator, paints a fake. The critic, or discriminator, immediately calls out every tiny flaw. This forces the forger to go back and try again, getting better and better until the fake is so good that even the critic can't tell it apart from the real thing.

This constant back-and-forth is the whole idea behind a Generative Adversarial Network (GAN), the engine behind many deepfake tools. It’s a competitive loop where one AI model generates content and another one judges it, pushing the quality higher and higher with every cycle. This process is what makes the final results so uncannily realistic.

The Key Technologies Behind the Magic

While GANs are the star of the show, they work alongside other specialized AI techniques. Each plays a specific role in convincingly mimicking a person.

Here's a quick breakdown of the core technologies you'll find in a modern deepfake video maker.

| Technology | Function | Analogy |
|---|---|---|
| Generative Adversarial Network (GAN) | Creates new, synthetic data (like a face) by having two neural networks compete against each other. | An art forger (Generator) trying to fool an art critic (Discriminator). |
| Autoencoders | Compresses a person's facial features into a compact "code" and then reconstructs it. Essential for face-swapping. | Creating a simplified, unique blueprint of a face that can be stamped onto another video. |
| Voice Cloning / Synthesis | Analyzes a person's speech patterns, pitch, and cadence to generate new audio in their voice. | A musician who can perfectly replicate another singer's voice after listening to just a few songs. |

These technologies don't work in isolation; they're woven together to create the final, seamless illusion of a person saying and doing things they never did.

The Ingredients for a Deepfake

You can't just press a button and create a deepfake from scratch. The AI needs a lot of data to learn what a person looks and sounds like. The final quality is a direct result of how good that source material is.

Here’s what the AI needs to be "fed":

Source Video/Images: The more high-resolution photos or video clips of the "target" person, the better. You need footage from different angles, with varied expressions and under different lighting, for the AI to truly learn their face.

Destination Video: This is the base video where the new, synthesized face will be placed. The AI keeps the body movements and background from this clip.

Voice Samples: For cloning a voice, the AI needs clean audio recordings of the person speaking. Just a few minutes of clear speech can be enough for it to generate new sentences with the same tone and rhythm.

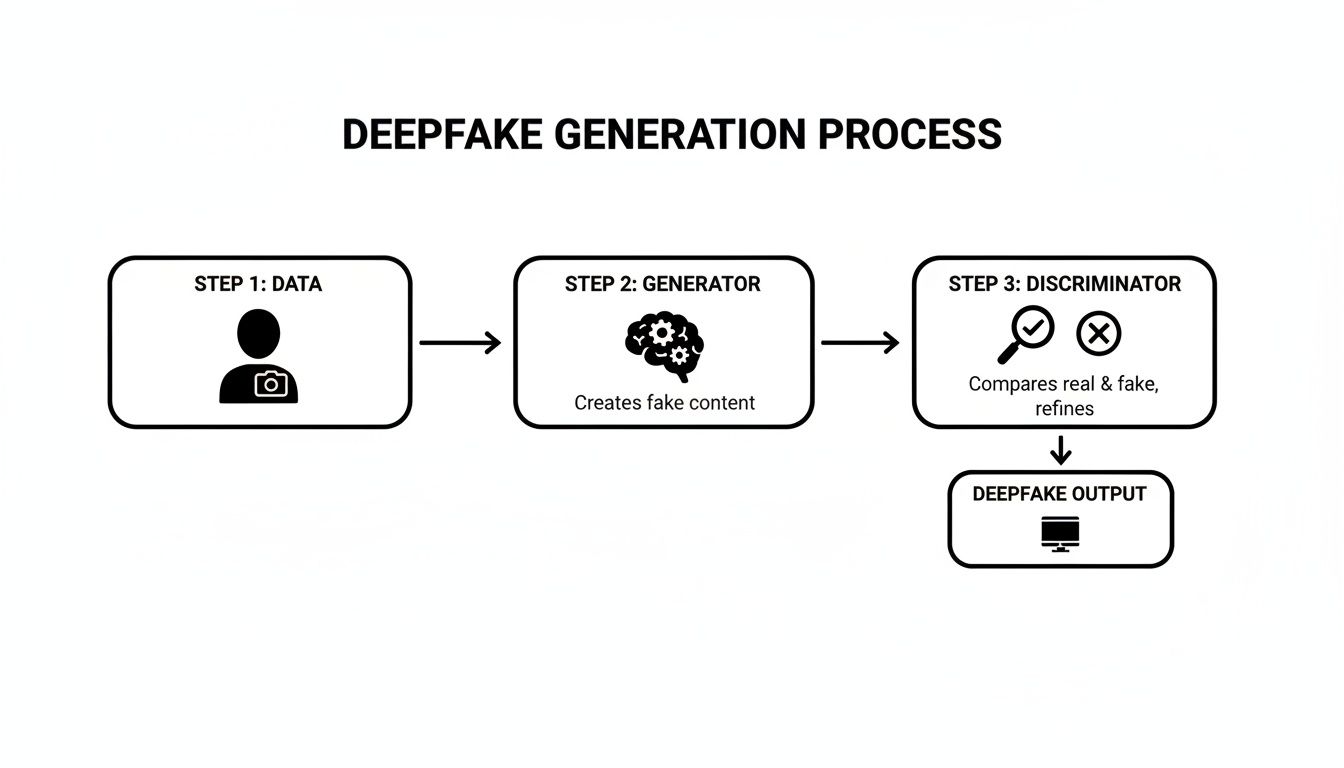

This flow chart gives you a simplified look at how these parts come together inside a GAN.

As you can see, it's a feedback loop. Data is fed to the generator, the discriminator judges it, and the generator keeps refining its work until the output is believable.
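For the technically curious, here's a minimal sketch of that feedback loop in plain Python. It's a toy, not how any real deepfake tool is built: the "real data" are just numbers clustered around 4.0 (an arbitrary choice), the forger is a two-parameter formula, and names like `train_toy_gan` are made up for illustration.

```python
import math
import random


def sigmoid(v: float) -> float:
    """Squash a score into a 0-1 'probability that this sample is real'."""
    # Clamp so math.exp cannot overflow on extreme scores.
    v = max(-60.0, min(60.0, v))
    return 1.0 / (1.0 + math.exp(-v))


def train_toy_gan(steps: int = 200, batch: int = 32, lr: float = 0.05, seed: int = 0):
    """Toy 1-D GAN. 'Real' data are numbers near 4.0 (an illustrative assumption)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0   # generator ("forger"): fake = a * noise + b
    w, c = 0.1, 0.0   # discriminator ("critic"): p_real = sigmoid(w * x + c)

    for _ in range(steps):
        real = [rng.gauss(4.0, 1.0) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [a * z + b for z in noise]

        # Critic step: raise scores on real samples, lower them on fakes.
        grad_w = grad_c = 0.0
        for x, g in zip(real, fake):
            d_real, d_fake = sigmoid(w * x + c), sigmoid(w * g + c)
            grad_w += -(1.0 - d_real) * x + d_fake * g
            grad_c += -(1.0 - d_real) + d_fake
        w -= lr * grad_w / batch
        c -= lr * grad_c / batch

        # Forger step: adjust a and b so the updated critic scores fakes higher.
        grad_a = grad_b = 0.0
        for z in noise:
            d_fake = sigmoid(w * (a * z + b) + c)
            grad_a += -(1.0 - d_fake) * w * z
            grad_b += -(1.0 - d_fake) * w
        a -= lr * grad_a / batch
        b -= lr * grad_b / batch

    return {"gen_scale": a, "gen_shift": b, "critic_w": w, "critic_c": c}


result = train_toy_gan()
print(result)
```

In this setup the forger's `gen_shift` starts at 0 and should drift toward the real data's center as the critic keeps catching it out. Toy GANs like this tend to wobble rather than settle neatly, so treat the exact numbers as illustrative.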

From Data to Final Render

Once the AI has been trained on all that source material, the deepfake maker starts the real work. It goes through the destination video frame by frame, meticulously replacing the original face with the new, AI-generated one. It aligns the eyes and mouth, matches the expressions, and even does its best to copy the lighting and shadows.

For marketers, knowing this process is key. It shows why deepfakes are so data-intensive and often have tell-tale glitches, like weird blinking patterns or flickering around the edges of the face. If you're curious, you can learn more about how brands are using other forms of AI-powered video creation that don't rely on these risky methods.

At its heart, a deepfake video maker isn't creating something from nothing. It's a sophisticated digital mimic, learning from real-world data to construct a believable illusion. The effectiveness of this illusion depends entirely on the data it's given.

This heavy reliance on a person's biometric data is exactly why the technology is a minefield of ethical and legal problems. Unlike tools that automate video editing using a brand's own assets, a deepfake video maker fundamentally works by hijacking someone's identity, often without their permission. This is the bright red line separating responsible AI automation from high-risk digital impersonation.

The Evolution from Lab Experiments to Viral Threats

To get why a deepfake video maker is such a minefield for brands today, you have to look at how the tech went from a niche academic project to a full-blown cultural phenomenon. Its journey from obscure labs to viral TikToks is a wild ride, and it explains why the technology carries so much reputational baggage. For marketers, this history is a critical warning.

The seeds of deepfake tech were sown long before the name was coined. You can trace its roots back to 1997 with a program called Video Rewrite. A few researchers—Christoph Bregler, Michele Covell, and Malcolm Slaney—built the first automated system for facial reanimation. Their goal was to sync a person's lip movements in a video to a totally new audio track. The idea was practical, aiming to simplify the costly process of dubbing foreign films, but it was the first real glimpse into AI-powered video manipulation. You can dig into the early history of this video manipulation technology to see just how academic it all started.

The GAN Breakthrough

For years, this kind of tech stayed locked away in computer science departments. The real turning point came in 2014, when Ian Goodfellow and his colleagues introduced Generative Adversarial Networks (GANs). As we've touched on, this model is brilliant in its simplicity: it pits two neural networks against each other. One network, the generator, creates fakes, while the other, the discriminator, tries to spot them. They go back and forth, getting better and better with each round.

This constant competition was the key that unlocked hyper-realistic synthetic media. All of a sudden, it wasn't just about editing video; you could generate faces, voices, and entire videos from scratch that were often indistinguishable from the real thing. This single innovation laid the entire foundation for the modern deepfake video maker.

From Reddit to Global Threat

The technology didn't stay in the lab for long. It exploded into the public consciousness in 2017 when a Reddit user coined the term "deepfake" while sharing synthetic videos. An open-source community quickly sprang up around it.

This was a classic double-edged sword. On one hand, making the tech accessible spurred a ton of creative experiments. On the other, it unleashed tools like FakeApp and DeepFaceLab into the wild, putting the power of a deepfake video maker into the hands of anyone with a decent computer and, potentially, malicious intent.

This newfound accessibility had immediate and deeply disturbing consequences. The technology’s early life was dominated by misuse, cementing its association with harm and deception from the get-go.

The Dark Side of Virality

The rapid spread of deepfake tools led to two things happening at once, both of which define how we see the tech today. First was its shocking weaponization for malicious purposes. A 2019 analysis found a horrifying statistic: 96% of all deepfake videos online were non-consensual pornography, almost exclusively targeting women. This cemented the technology’s primary use case in the public eye as a tool for harassment and abuse.

At the same time, its creative side was on full display in viral stunts that blew people's minds. A series of incredibly realistic Tom Cruise deepfakes on TikTok, made by mapping the actor's face onto an impersonator, showed just how convincing the technology had become. While these videos were made for fun, they were also a stark warning about the potential for widespread misinformation and undetectable digital impersonation.

This dual history—born from academic curiosity, supercharged by AI breakthroughs, and defined by both viral creativity and widespread abuse—is crucial context for any marketer. It explains why using a deepfake video maker isn't just a technical decision. It’s a move loaded with serious ethical landmines and major brand safety risks.

The Brand-Killing Risks of Deepfake Advertising

For a performance marketer, a deepfake video maker can look like a shortcut to the holy grail. Think about it: endless celebrity testimonials, perfectly localized ads with famous faces, or personalized shout-outs from top influencers—all without the crazy costs or logistical headaches. But when you pull back the curtain, you’ll find a minefield of brand-destroying risks that can absolutely cripple your company's reputation and bottom line.

Sure, the promise of fast, scalable video creative is tempting. But using this tech for advertising crosses a very dangerous line. The consequences aren't just theoretical. They show up as real, painful legal battles, ethical dumpster fires, and brand safety crises that make any creative benefit look tiny in comparison.

The Legal Nightmare of Digital Impersonation

The second you use a deepfake video maker for an ad with someone’s likeness—without their explicit, written consent—you’ve walked straight into a legal mess. The most obvious landmine is violating the right of publicity. This is a legal shield that stops people from using someone's name, image, or likeness for commercial gain without permission.

That fake celebrity endorsement isn’t just misleading your customers; it’s practically an invitation for a very expensive lawsuit from that celebrity. And the damages can be huge, covering not just the unauthorized use but also the harm done to their personal brand.

It doesn’t stop there. Here are some other legal monsters waiting for you:

Defamation: If a deepfake falsely shows someone saying or doing something that damages their reputation, that can amount to defamation. Imagine an ad where a public figure seems to say something offensive. The reputational and financial fallout would be catastrophic.

Copyright Infringement: Those source videos and images you need to train the AI? They’re probably copyrighted. Using them without a license is just another fast track to legal trouble.

Deceptive Advertising Regulations: Regulators like the FTC have very strict rules against false or misleading ads. An ad built on an undisclosed fake persona is, by its very nature, deceptive.

The Ethical Fallout That Shatters Trust

Even if you could somehow sidestep all the legal problems, the ethical damage is just as bad. Modern marketing is all about authenticity and trust. People want to connect with brands that are real and honest. Pushing a deepfake ad is a direct betrayal of that trust.

When your customers find out they were tricked by a synthetic person, the backlash is swift and brutal. It instantly paints your brand as manipulative and dishonest, a stain that’s incredibly hard to wash off. This isn't some gray area. It’s a clear breach of the unspoken promise between a brand and its audience.

Using a deepfake video maker for advertising is like building a relationship on a lie. The moment the truth comes out, the foundation crumbles, and any trust you've built is permanently lost. This isn't just bad PR—it's a fundamental breach of business ethics.

The technology’s dark history only makes things worse. Deepfake videos have exploded in volume, with the number online jumping tenfold from 2022 to 2023. This easy access has led to widespread misuse. As noted earlier, one study found that 96% of over 14,000 deepfakes were non-consensual pornography. The tech has also been weaponized for political interference, like the 2024 incident where a robocall mimicking a presidential candidate's voice targeted over 20,000 voters. You can dig deeper in this comprehensive overview of deepfake history and misuse.

Brand Safety and Platform Penalties

Tying your brand to a technology known for malicious use is a massive brand safety blunder. Ad platforms like Meta and TikTok have increasingly tough policies against manipulated media. Running a deepfake ad is a surefire way to get your content rejected, your ad account suspended, or even banned for good.

Look at it from the platform's side: they have to protect their users from scams and misinformation. Your deepfake ad isn't just another creative; it's a policy violation that puts their whole ecosystem at risk. This leads to a domino effect:

Immediate Ad Rejection: Your campaigns will get shut down before they even have a chance to run.

Account Suspension: Keep trying, and your ad account will get flagged and suspended, freezing all of your marketing efforts.

Widespread Consumer Backlash: When your ad gets exposed, the public outcry on social media can do irreversible harm to your brand's image.

At the end of the day, the promise of a "perfect" ad from a deepfake video maker is just a mirage. The risks of lawsuits, ethical condemnation, and getting blacklisted by platforms aren't just possibilities—they're practically guaranteed. For performance marketers focused on sustainable growth, the takeaway is crystal clear: this is one tool that needs to stay far away from your advertising toolkit.

Finding the Ethical Path for Synthetic Media in Marketing

While using a deepfake video maker to impersonate someone is a clear ethical and legal train wreck, the AI technology itself isn't the villain. The real issue comes down to two things: consent and transparency. There's a responsible way forward for synthetic media in marketing, but it means ditching the deceptive stuff and being radically honest with your audience.

This ethical path shifts the focus from impersonation to consensual digital representation. Imagine, for example, hiring an actor who contractually agrees to have their digital likeness used to generate dozens of ad variations for a campaign. Here, the person is a willing, fairly-paid partner, and the brand gets incredible creative flexibility. Everybody wins.

Another solid approach is creating a brand new, AI-generated virtual ambassador. This digital persona is always—and I mean always—labeled as an AI creation. This ensures the audience is never tricked. It builds a unique kind of trust, where your brand is seen as innovating openly instead of hiding behind a digital mask.

The Unbreakable Rule: Consent and Disclosure

For any brand even thinking about using synthetic media, two principles are completely non-negotiable: explicit consent and clear disclosure. If you don't have both, you're playing in a high-risk sandbox that’s just begging for legal trouble and a PR nightmare.

The heart of ethical synthetic media is simple: never pretend an AI-generated person is real, and never use a real person’s likeness without their enthusiastic, written permission. This isn't just a best practice; it's the only way to innovate responsibly and protect your brand from catastrophic reputational damage.

This framework demands a fundamental change in how we, as marketers, see these AI tools. Instead of viewing a deepfake video maker as a shortcut to fake a celebrity endorsement, think of the tech as a way to supercharge the creative process with willing partners. You can learn more about how to ethically create AI avatar videos for your brand in our detailed guide.

Your Defensive Playbook for Brand Safety

Even if your brand vows to never touch a deceptive deepfake video maker, you still need a defensive strategy. The explosion of user-generated content (UGC) and the easy access to these tools mean your brand could be dragged into a controversy without ever asking for it. Protecting your brand requires a proactive, multi-layered approach.

Here’s a defensive playbook to keep your brand safe in the age of synthetic media:

Establish Strict Creative Guidelines: Your internal brand guidelines must explicitly forbid using any unethically sourced or deceptively presented synthetic media. This rule needs to apply to everyone: your internal team, your agencies, and any freelancers you work with.

Vet User-Generated and Influencer Content: Before you even think about resharing UGC or influencer content, run it through AI detection tools. A recent study found that people can only spot AI-generated faces with 62% accuracy, which makes technology-assisted verification a must-have.

Implement a Clear Disclosure Policy: If you do use consented synthetic media (like that virtual ambassador we talked about), you need a clear, consistent, and impossible-to-miss disclosure. This could be a watermark, a text overlay, or a verbal mention, but it must be obvious to the average viewer.

Educate Your Team: Make sure your marketing, legal, and PR teams know the risks and can spot the tell-tale signs of a deepfake. Things like unnatural blinking, bad lip-syncing, or weird visual artifacts around the face are common red flags.
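If your team tracks creative in a spreadsheet or an internal tool, the consent and disclosure rules above can be turned into a simple pre-publish check. Here's a rough sketch in Python. The `Creative` fields and the `review` function are hypothetical names for illustration, and a check like this supports a legal review rather than replacing one.

```python
from dataclasses import dataclass


@dataclass
class Creative:
    name: str
    uses_synthetic_person: bool   # AI-generated or AI-altered likeness
    depicts_real_person: bool     # based on an actual individual
    has_written_consent: bool     # signed permission on file
    has_visible_ai_label: bool    # watermark, text overlay, or verbal mention


def review(creative: Creative) -> list[str]:
    """Return reasons to block the creative; an empty list means it passes."""
    problems = []
    if creative.uses_synthetic_person:
        if creative.depicts_real_person and not creative.has_written_consent:
            problems.append("real person's likeness used without written consent")
        if not creative.has_visible_ai_label:
            problems.append("synthetic media is not clearly disclosed")
    return problems


# A labeled virtual ambassador passes; an unlabeled, unconsented face swap does not.
ambassador = Creative("virtual_ambassador_v1", True, False, False, True)
face_swap = Creative("celebrity_swap_test", True, True, False, False)

print(review(ambassador))  # []
print(review(face_swap))
```

The point of returning a list of reasons instead of a plain yes or no is that whoever submitted the ad can see exactly what needs fixing.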

As you navigate the ethics of synthetic media, it’s also important to understand how content gets found and ranked by AI-driven systems. This is where concepts like Generative Engine Optimization (GEO) come into play. Staying ahead of these trends is all part of a solid defensive strategy.

Ultimately, the safest and most effective path for marketers isn’t about faking reality. It's about using AI to scale authentic, on-brand creative assets that truly connect with your audience.

The Safer AI Alternative for Scalable Video Ads

With all the risks on the table, the real question for performance marketers isn't how to use a deepfake video maker safely—it's what to use instead. The goal of scaling video ad production is completely valid; faking content to get there is not. The answer lies in a different kind of AI, one that automates and remixes your authentic, brand-owned assets instead of just creating fakes.

This safer approach shifts the focus from deception to efficiency. Instead of impersonating people, this ethical AI acts more like an army of hyper-efficient video editors. It works with the creative you already own—real video testimonials, authentic product shots, and genuine footage—to hit your production goals without torching your brand's integrity.

Deconstructing and Reassembling Authentic Creative

Think of your existing video library as a massive pile of LEGO bricks. You’ve got product shots, clips from customer testimonials, screen recordings, and a bunch of B-roll. Manually digging through all of that to build dozens of ad variations is a slow, painful grind.

An ethical AI platform flips this script. It ingests your entire creative library and breaks it down into smart, modular components.

Video Intelligence: The AI watches and tags every single clip based on its content, structure, and how it might be used in an ad. It automatically identifies potential hooks, body segments, and calls-to-action.

Asset Management: All your tagged clips are organized into a searchable, intelligent library. You can instantly find every customer testimonial that mentions "easy to use" or pull up every shot of a specific product feature.

Programmatic Assembly: The AI then reassembles these authentic pieces into hundreds of new ad variations, all based on proven marketing frameworks that you control.
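To make the "searchable library" idea concrete, here's a small Python sketch of tagged clips and a search over them. It is not how any particular platform works under the hood. The `Clip` fields, tags, and file names are all made up, and in a real system the transcripts and tags would come from automated analysis rather than being typed in by hand.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Clip:
    filename: str                 # hypothetical file name
    role: str                     # "hook", "body", or "cta"
    transcript: str = ""
    tags: set = field(default_factory=set)


def search(library, role: Optional[str] = None,
           phrase: Optional[str] = None, tag: Optional[str] = None):
    """Return clips that match every filter that was provided."""
    results = []
    for clip in library:
        if role is not None and clip.role != role:
            continue
        if phrase is not None and phrase.lower() not in clip.transcript.lower():
            continue
        if tag is not None and tag not in clip.tags:
            continue
        results.append(clip)
    return results


library = [
    Clip("testimonial_anna.mp4", "body", "Honestly, it was so easy to use.", {"testimonial"}),
    Clip("testimonial_ben.mp4", "body", "Setup took five minutes.", {"testimonial"}),
    Clip("unboxing_01.mp4", "hook", "Wait until you see what's inside.", {"product"}),
    Clip("offer_end.mp4", "cta", "Try it free today.", {"offer"}),
]

easy = search(library, phrase="easy to use")
print([clip.filename for clip in easy])  # ['testimonial_anna.mp4']
```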

This method gives marketers the speed and scale they want from AI, but its foundation is built on authenticity, not fabrication. It helps your team massively increase its testing velocity by cutting out the tedious manual editing, all while staying true to your brand's voice and values.

The crucial difference is one of intent. A deepfake video maker creates new, fabricated realities. An ethical AI video platform remixes and optimizes existing, authentic realities at scale. One is a forger; the other is a super-powered editor.

The Power of Modular Frameworks

This approach isn't about randomly mashing clips together. It uses structured, conversion-focused frameworks to systematically build high-performing ads. This is where the real magic happens for performance marketers.

Instead of spending hours in a video editor trying to piece together one version of an ad, you can instantly generate dozens of variations based on proven formulas:

Hook-Body-CTA: The AI can test 10 different hooks against 5 body segments and 3 calls-to-action, creating 150 unique ad variations in minutes. This lets you rapidly figure out which opening actually grabs attention.

Problem-Agitate-Solution (PAS): You can define clips that show the customer's problem, others that agitate that pain point, and more that present your product as the solution. The system will then mix and match these components to find the most persuasive story.

UGC Testimonial Mashups: The platform can pull the most powerful one-liners from multiple customer videos and weave them into a single, killer social proof ad.
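The Hook-Body-CTA math is easy to check for yourself. Here's a short Python sketch that pairs every hook with every body segment and every call-to-action. The clip IDs are placeholders, and a real workflow would also filter out combinations that don't make sense together.

```python
from itertools import product

# Placeholder clip IDs; in practice these would point at your own tagged footage.
hooks = [f"hook_{i:02d}" for i in range(1, 11)]   # 10 hooks
bodies = [f"body_{i:02d}" for i in range(1, 6)]   # 5 body segments
ctas = [f"cta_{i:02d}" for i in range(1, 4)]      # 3 calls-to-action

# Every hook x every body x every CTA.
variations = [
    {"hook": h, "body": b, "cta": c}
    for h, b, c in product(hooks, bodies, ctas)
]

print(len(variations))   # 150
print(variations[0])     # {'hook': 'hook_01', 'body': 'body_01', 'cta': 'cta_01'}
```

Because the count multiplies (10 x 5 x 3), adding just one more hook creates 15 new ads to test, which is why a modular library scales so quickly.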

This modularity makes testing incredibly fast. You’re not just making more ads; you’re learning faster than ever about what truly resonates with your audience. For a deeper dive, check out our guide on how an AI video generator for ads can automate this entire workflow.

The end result is a creative engine that respects your brand’s authenticity while delivering the performance data you need to scale profitably. It’s the smart, safe, and sustainable way to use AI for video advertising—leaving the ethical nightmares of a deepfake video maker far, far behind.

Frequently Asked Questions About Deepfake Video Makers

As synthetic media gets easier to access, marketers are, understandably, full of questions. Walking the line between innovation and irresponsibility means you need clear answers. This section dives into the most common questions about using a deepfake video maker, helping you make smart decisions that protect your brand.

We’ll cut through the hype and give you concise, practical answers that reinforce the article's main points on risk, ethics, and using AI responsibly.

Are Deepfake Video Makers Legal for Marketing?

Using a deepfake video maker for marketing is incredibly risky and, in many situations, flat-out illegal. The second you create a video using someone's likeness without their explicit, written consent, you’re violating their “right of publicity.” This is a fast track to expensive lawsuits, especially if the video implies an endorsement they never agreed to.

On top of that, major ad platforms like Meta and Google have increasingly tough policies against deceptive or manipulated media. Running an ad with a deepfake is a surefire way to get your content rejected. Even worse, it could get your entire ad account suspended.

While there might be some extremely niche, fully consented uses that could hold up in court, the high chance of legal trouble and severe brand damage makes it a terrible strategy for almost any brand. The legal lines are pretty clear: using someone's digital identity for commercial gain without permission is a direct path to a courtroom.

How Can I Spot a Deepfake Video?

While deepfakes are getting more sophisticated, there are still a handful of tell-tale signs you can look for. It’s all about paying attention to the subtle details that AI often messes up.

Unnatural Blinking: The AI might make someone blink way too often, too slowly, or not at all. Real human blinking is more random.

Awkward Positioning: Watch for head and body movements that look stiff or don't quite line up naturally. The physics can feel a bit off.

Visual Glitches: You might spot "artifacting" or flickering around the edges of the face, where the digital mask is layered over the real video.

Poor Lip-Syncing: The mouth movements may not sync up perfectly with the words being spoken.

Unusual Skin Texture: The person's skin might look overly smooth and waxy, or it might lack the natural imperfections everyone has.

But as the tech gets better, just using your eyes is becoming less reliable. For anyone who needs more certainty, exploring the available tools for detecting deepfakes can offer some real help. These specialized tools analyze videos for the digital fingerprints left behind during the AI generation process, providing a much more dependable way to verify a video's authenticity.

What Is the Difference Between a Deepfake and an Ethical AI Tool?

The main difference between a deepfake video maker and an ethical AI tool boils down to authenticity and intent. It’s about fabrication versus automation.

A deepfake video maker is built to create deceptive content. Its whole point is to fabricate a reality—synthesizing a person's likeness to make them appear to say or do something they never did. The entire process is based on creating something fake.

An ethical AI video platform is designed for augmentation and automation. It works exclusively with your brand’s authentic assets—real video footage, genuine customer testimonials, and actual product shots. Its AI helps scale the creative process, not fake it.

This ethical alternative uses AI to programmatically edit, remix, and version these authentic assets into hundreds of ad variations. It's about speeding up the real work of your creative team, not generating content out of thin air.

Think of it this way: a deepfake video maker is like hiring a forger to create a counterfeit masterpiece. An ethical AI video tool is like hiring an army of incredibly fast, data-driven video editors who only work with your original art. One creates illusions; the other amplifies reality.

Ready to scale your video ads without the risks? Sovran uses ethical AI to automate video production from your authentic brand assets, helping you test 10x faster and find winning creative without compromising your integrity. Start your 7-day free trial today.

Manson Chen

Founder, Sovran
