Expert Playbook: How to Test Video Ad Creatives

Jump to a section

- The Foundation of Winning Tests Hypothesis and Variables

- Designing a Statistically Sound Creative Experiment

- A Two-Phase Framework for Executing Tests

- Analyzing Results and Measuring Creative Performance

- Scaling Wins and Automating Creative Production

- Common Testing Pitfalls and Building an Optimization Cadence

- Conclusion From Testing to Winning

Many teams don’t have a testing problem. They have a throughput problem.

They know they should test more video ads on Meta and TikTok. They have ideas for new hooks, new creators, fresh UGC angles, sharper CTAs. Then production slows everything down. One editor is buried in CapCut. Media buyers launch a few variations, call it a test, and try to read signal from messy delivery. A week later, nobody is sure what won, or why.

That’s why most advice on how to test video ad creatives feels outdated. It assumes you’ll manually build a couple of variants, compare them one by one, and inch toward a winner. That method can work. It just doesn’t scale well enough for accounts that need constant creative refresh.

A better approach treats testing as a system. You start with a real hypothesis, isolate variables, design experiments that can survive statistical scrutiny, and then use automation to increase the number of learnings you get from each cycle. The goal isn’t to make more videos for the sake of volume. The goal is to learn faster than your competitors.

The Foundation of Winning Tests Hypothesis and Variables

Weak tests start with weak ideas.

A lot of advertisers still brief creative like this: “Let’s make three new TikTok-style videos and see what happens.” That’s not a testing plan. That’s paid guessing. If you want cleaner learnings, you need a clear belief about what you expect to happen and why.

Start with market-validated angles

The fastest way to waste budget is to test concepts the market has already rejected.

A better starting point is competitive intelligence filtered through spend signals. One useful rule from this YouTube breakdown on ad testing with spend-validated competitive data is to focus on ads running 30+ days instead of relying on ad libraries alone. The same source notes that testimonial angles can significantly outperform product spec angles on CPA in mobile app campaigns, and a majority of top performers reused validated angles in modular videos.

That doesn’t mean you copy competitors. It means you extract the underlying mechanism. If testimonial ads keep staying live, the market may be rewarding social proof. If founder-led direct response ads stay up, clarity and trust may be doing the heavy lifting.

Practical rule: Validate the angle before you validate the edit.

Build hypotheses that can be tested

A useful hypothesis has three parts:

- The variable

- The expected outcome

- The metric that will prove or disprove it

Bad hypothesis: “UGC should work better.”

Better hypothesis: “A testimonial-led hook will outperform a feature-led hook by improving early attention and downstream conversion quality.”

That framing matters because it forces you to decide what kind of win you’re looking for. Are you trying to improve thumb-stop behavior, click intent, install efficiency, or purchase economics?

Here are variables worth isolating in video ads:

- Hooks: Opening line, first visual, text overlay, facial expression, motion, or pacing in the first seconds.

- Core angle: Testimonial, pain point, aspiration, demo, before-and-after, comparison.

- Value proposition: Fast results, convenience, savings, status, ease of use.

- CTA framing: “Shop now,” “Learn more,” direct offer framing, urgency, softer education-first asks.

- Visual style: Native UGC, founder-style direct-to-camera, polished brand cut, meme format, screen recording.

- Message structure: Problem-Agitate-Solution, listicle, objection handling, review compilation.

Keep the variable count low at the start

If you change the hook, the creator, the offer framing, and the CTA all at once, you may find a winner but you won’t know why it won.

That’s the trap. Teams often confuse content variation with testing discipline. Variation is useful. Confounded variables are not.

Use a single learning question per test round. If the hook is the question, keep the body and CTA stable. If the CTA is the question, don’t swap the creator too.

A simple way to organize this is with a matrix. This creative testing matrix is a practical format for mapping angle, hook, format, and KPI before anything goes live.

Copy and creative should line up

Creative testing often breaks because the ad says one thing visually and another thing in text.

If your hook promises a transformation but your copy reads like a feature list, you’re not testing one idea. You’re testing a mixed message. Teams that want stronger message discipline should study frameworks like how to write ad copy that converts, then pair those copy structures with the visual angle being tested.

The best creative tests feel boring on paper. One idea. One variable. One reason for the result.

When you build tests this way, you stop producing random variants and start building a learning backlog. That backlog becomes more valuable than any single winning ad because it tells you what your market responds to, what it ignores, and what deserves another round.

Designing a Statistically Sound Creative Experiment

Good creative can lose in a bad test design.

Often, accounts encounter difficulties at this stage. Teams swap in a few ads, let the platform distribute unevenly, glance at early CTR, and declare a winner before enough data exists. That isn’t testing. That’s pattern recognition mixed with impatience.

A/B testing versus multivariate testing

A/B testing is the cleanest place to start. You compare two versions while changing one variable. Hook A versus Hook B. CTA A versus CTA B. Creator A versus Creator B.

Multivariate testing looks at combinations. It can help when you want to understand how visuals, copy, and audio interact together. The trade-off is simple. You get broader pattern discovery, but you need much more data because every added combination creates more possible explanations.

Use this rule of thumb:

| Method | Best for | Main trade-off |

|---|---|---|

| A/B test | Isolating one creative variable | Slower learning across many elements |

| Multivariate test | Exploring combinations at scale | Harder analysis and larger sample demands |

If your account doesn’t have consistent volume, start with A/B. Teams often move to multivariate too early.

What statistical significance means in practice

Think of creative performance like flipping coins.

If you flip a coin a few times, streaks happen. That doesn’t mean the coin is biased. Early ad results behave the same way. A creative can look dominant after a small burst of delivery and then flatten once enough impressions accumulate.

The baseline to keep in mind is this: an industry-standard minimum of 1,000 impressions per variation is required for 95% confidence, and some practitioners recommend 3,000 to 5,000 impressions per variant for stronger reliability, according to AdStellar’s breakdown of Facebook ad creative testing methods. The same source notes that tests should run for at least 1 to 2 weeks to normalize day-of-week effects and reduce bad decisions based on unstable early performance.

That single point changes behavior. It tells you not to panic on day one. It tells you not to crown a winner off tiny delivery. It also tells you that low-budget accounts need to narrow the number of variants they test at once.

The structure that keeps tests clean

A reliable experiment needs consistency everywhere except the variable being tested.

Keep these factors aligned:

- Audience: Use the same targeting setup across variants.

- Optimization event: Don’t compare one ad optimized for clicks and another for purchases.

- Placement mix: Keep placement logic stable if the goal is a pure creative read.

- Bid strategy: Use the same bidding setup across variants.

- Budget treatment: Give each variant a fair chance to accrue meaningful delivery.

That’s why many buyers prefer duplicated ad sets or formal experiments rather than dropping new ads into a mixed ad set and hoping the algorithm behaves neutrally.

A practical setup for Meta and TikTok

For most direct response teams, the cleanest approach looks like this:

- Duplicate the test structure so each variant gets comparable conditions.

- Change one major element only.

- Predefine the success metric based on campaign objective.

- Leave the test alone long enough to collect stable data.

- Review by segment after the overall result is in.

If you need help checking whether your test volume is even in the right range, this creative testing calculator is a useful planning tool.

Pick the metric before launch

Many tests fail at this point. The team launches on one goal and judges on another.

A clean setup usually maps like this:

- Awareness or top-of-funnel: CTR and video engagement signals

- Conversion-focused lead gen or app install: CPA or install efficiency

- E-commerce: ROAS and conversion efficiency

- Video-specific diagnosis: completion rate and other retention signals

If you decide what “winning” means after the test ends, you’ll almost always choose the result you wanted to see.

When people ask how to test video ad creatives properly, this is the answer they usually don’t want. The hard part isn’t creating variants. The hard part is resisting noise long enough to let the data become useful.

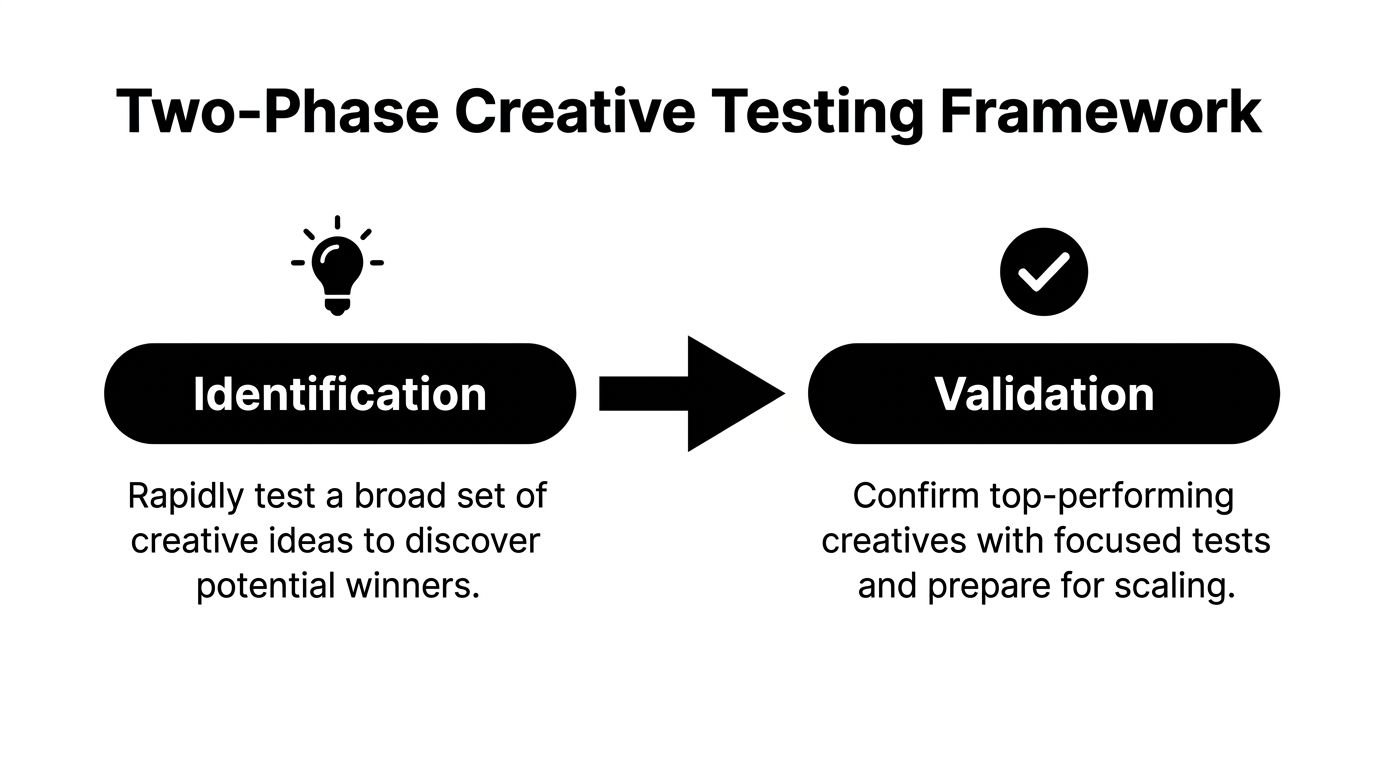

A Two-Phase Framework for Executing Tests

The easiest way to burn money is to ask one test to answer every question at once.

You don’t need every creative to prove full-scale profitability on day one. You need a screening layer first, then a validation layer. That’s the difference between exploratory testing and scale testing.

Phase 1 identifies promising creatives

The point of Phase 1 is not to prove that a creative can carry your entire account. The point is to find out which ideas deserve more budget.

A disciplined framework is to launch 5 to 6 creative variants and evaluate them on IPM, then keep them live until each creative reaches 50 to 100 installs, based on the two-phase method described in Popjam’s guide to high-impact ad creative testing. The same framework advances the top 1 to 2 winners when they beat the IPM baseline by more than 15%.

That’s a practical filter because it prevents you from dragging weak ideas into expensive bottom-funnel tests.

In app campaigns, IPM is often the cleanest early signal because it tells you whether the ad can attract the right user behavior before scale economics get complicated. In other accounts, the equivalent may be an early engagement metric or another leading indicator, but the principle stays the same. Use a screening KPI first.

How to run Phase 1 cleanly

Don’t overcomplicate this phase.

Use isolated ad sets, identical audiences, and stable bidding. Give each creative equal treatment. If you’re testing hooks, keep the rest of the asset stable. If you’re testing angles, avoid changing the offer and the CTA at the same time.

A practical checklist:

- Launch a broad set: Use several distinct ideas, not five minor edits of the same ad.

- Control your environment: Same audience, same optimization logic, same spend logic.

- Watch for signal quality: Early outliers can still reverse, so stay disciplined.

- Document the reason each variant exists: This matters when you brief the next round.

Don’t ask a screening test to tell you final ROAS truth. Ask it which creatives deserve a second interview.

Phase 2 validates whether the winner can scale

A lot of “winning creatives” collapse when they leave the lab.

That’s why the second phase matters. The Popjam framework recommends increasing budgets by 3 to 5x and then measuring CPA or ROAS scalability. It also notes that 60% of Phase 1 winners can see a ROAS drop of more than 20% at scale in this validation step.

That tracks with real account behavior. A creative can produce strong early engagement, then lose efficiency when the audience broadens or frequency climbs. Validation is where you find out whether the ad is a business asset or just a good test ad.

You can structure Phase 2 in one of two ways:

| Structure | When it helps | What to watch |

|---|---|---|

| Isolated winner ad sets | Cleaner read on each winner | Less platform optimization between creatives |

| Multi-creative validation ad set | More realistic algorithm behavior | Harder to isolate why one ad gets spend |

For teams that want a more complete operating model, this roadmap for winning Meta ads through creative testing lays out how to connect test rounds to broader campaign decisions.

Graduation rules matter

Set promotion rules before launch. If you don’t, every decent result turns into an argument.

A simple workflow looks like this:

- Screen for attention or install quality

- Advance only the strongest few

- Increase spend in validation

- Judge on business metrics

- Move validated winners into evergreen or scale campaigns

- Recycle the creative learnings into the next production batch

That loop is what makes testing efficient. Weak ads die cheaply. Strong ideas earn the right to face harder conditions.

Analyzing Results and Measuring Creative Performance

When reviewing reports, many teams ask, “Which ad won?”

That’s the wrong first question. Start with, “What behavior did each ad create?” A video can attract clicks and still attract the wrong clicks. Another can produce a weaker top-line CTR but convert better because it filters traffic more effectively.

Read the funnel, not a single metric

When analyzing video ads, the top-line metrics matter, but they don’t all tell the same story.

The Motion guide to analyzing creative performance points out that while CPA and ROAS measure efficiency and profitability, video-specific metrics matter because the first 3 to 5 seconds are critical and over 80% of viewer drop-off can happen early. The same source notes that testing variables such as customer testimonials or emotional messaging with clear hypotheses can produce measurable lifts, including a 10% conversion boost, and that poor creative optimization contributes to 70% of ad spend being wasted industry-wide.

That tells you two things. First, early attention is not optional. Second, final business metrics still decide whether attention was useful.

What each metric is really saying

Use a layered read instead of chasing one KPI.

- CTR: Relevance and click curiosity. Good for seeing whether the ad earns attention and interest.

- CPC: Cost efficiency for traffic generation. Useful, but it can reward low-quality curiosity if used alone.

- Conversion rate: Message-match and landing-page alignment. Helps identify whether the ad is attracting the right user.

- CPA: Acquisition efficiency. This is often where weak “high-click” creatives get exposed.

- ROAS: Revenue quality and scale-worthiness. Best for judging e-commerce durability.

- Completion rate and retention behavior: Creative strength as a video. Hooks, pacing, subtitles, and scene changes are apparent here.

Here’s a simple way to interpret common patterns:

| Pattern | Likely meaning |

|---|---|

| High CTR, weak CVR | The hook attracts attention but may oversell or misframe the offer |

| Low CTR, strong CVR | The ad filters better than it attracts. Good candidate for angle refinement |

| Strong completion, weak clicks | The video is watchable but doesn’t create intent |

| Weak retention early | The first seconds are missing urgency, clarity, or visual disruption |

A “winner” that only wins on CTR can still be a loser in the business.

Segment cuts often reveal deeper lessons

Overall performance can hide useful sub-results.

Break results down by age, gender, device, and placement when the data volume supports it. Sometimes one creative is average overall but excellent in a specific pocket of inventory or audience. That doesn’t make it the universal winner, but it can make it worth preserving for a narrower role.

If you need a primer on how significance testing works once you’ve got variant data, this guide on how to interpret t-test results is a good refresher for marketers who want more rigor in analysis.

For a platform-specific reporting workflow, this guide to measuring creative tests in Facebook ads reporting is a useful operational reference.

A short walkthrough helps if your team is trying to sharpen its post-test review process.

Turn the result into a creative brief

A test result should create the next brief.

If a testimonial hook improved early retention, ask which part did the work. Was it the face, the phrasing, the specificity, or the social proof itself? If emotional framing drove more qualified conversions, isolate that in the next round against a rational alternative.

That’s the difference between reporting and analysis. Reporting tells you what happened. Analysis tells your creative team what to make next.

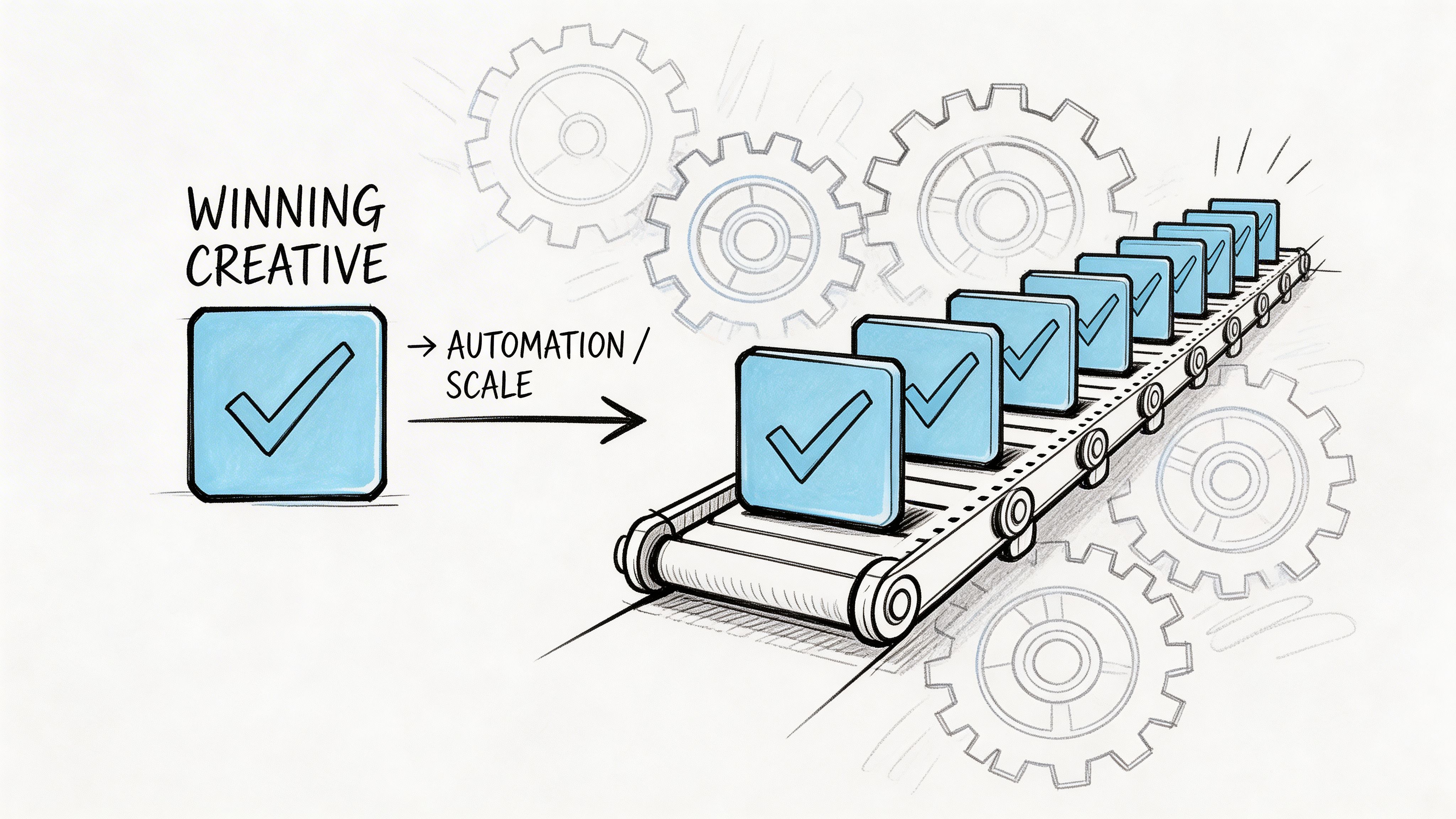

Scaling Wins and Automating Creative Production

A single winner won’t carry an account for long.

Once an ad proves it can work, the important work begins. You need to preserve the core mechanism while producing enough variation to fight fatigue, expand audience coverage, and keep learning. Manual workflows break here because the team starts treating every iteration as a brand new production task.

Deconstruct the winner before you duplicate it

Don’t just say, “This ad won.”

Ask what components won:

- Hook: What earned the initial stop?

- Body: What built belief?

- CTA: What pushed the action?

- Format cues: Was it the UGC feel, subtitle treatment, creator energy, or pacing?

- Angle: Was the primary driver social proof, aspiration, pain point, or demonstration?

When you separate the ad this way, you create reusable assets instead of one fragile success. That opens the door to modular iteration.

A testimonial hook can pair with a different body. A winning body can test against several CTA framings. A strong creator read can carry a new promise. Through this approach, teams move from “one good ad” to a portfolio of informed variants.

Why automation changes the economics of testing

The manual version of this process is slow. Editors re-cut intros, swap overlays, export endlessly, rename files, and hand assets off to media buyers who still need to launch and track the tests.

The newer approach is modular and automated. According to Billo’s discussion of AI-driven ad testing, a key angle for 2026 is integrating AI-generated modular components from tools like Sora 2 into high-velocity testing. The same source says AI platforms can test hundreds of variants by automating hook, body, and CTA combinations, with early data showing up to 3.5x ROAS improvement for some DTC brands, 40% higher CTR for AI-hybrid ads in recent TikTok campaigns, and a path to winners that can be as much as 10x faster.

That claim should be treated as directional, not universal. But the operating logic is strong even without the upside figures. More modularity means more shots on goal. More on-brand combinations mean faster learning loops.

What a modern workflow looks like

A scalable system usually includes these pieces:

| Workflow element | What it changes |

|---|---|

| Asset tagging | Makes old footage reusable instead of buried in folders |

| Natural language search | Helps teams find clips by angle, claim, creator, or context |

| Bulk rendering | Turns one winning structure into many testable variants quickly |

| Naming automation | Keeps ad ops clean when dozens of variants go live |

| Brand context storage | Keeps generated concepts aligned with claims, tone, and positioning |

One option in this category is Sovran, which lets teams upload clips once, tag them into reusable components, bulk-render Hook-Body-CTA combinations, search assets with natural language, and push organized variants into campaign workflows. This matters most for teams that are stuck between wanting more creative volume and not wanting more editing chaos. For a closer look at that type of workflow, this overview of video ad automation software is useful.

Scale doesn’t come from finding one ad. It comes from building a system that can generate the next ten logical descendants of that ad.

Keep the brand guardrails tight

Speed is useful only if the outputs stay usable.

That means storing approved claims, brand guidelines, winning scripts, customer review language, and angle notes somewhere the production workflow can reference them. AI-generated variation without constraints can create volume, but it can also create off-brand noise.

The best automation setups don’t replace strategy. They remove repetitive production work so the strategist can spend more time deciding what to test next.

Common Testing Pitfalls and Building an Optimization Cadence

Most bad test outcomes aren’t caused by bad creative. They’re caused by bad process.

Teams make the same avoidable mistakes. They stop tests too early. They change multiple variables at once. They chase vanity metrics. They fail to keep a control. Then they wonder why each round produces conflicting conclusions.

The mistakes that distort results

Some failure modes show up over and over:

- Ending tests during unstable delivery: Early results often reflect ramp-up noise instead of durable performance.

- Changing creative, audience, and bidding together: Once multiple variables move, attribution gets muddy fast.

- Picking winners on shallow metrics: A high CTR can hide poor conversion quality.

- Ignoring the control mindset: If you don’t compare against a stable baseline, every new ad can look “interesting” without being better.

- Treating each test as a one-off: Learnings disappear when nobody documents what the ad was designed to prove.

These mistakes are operational, not philosophical. That’s good news, because process can be fixed.

Build a repeatable cadence

The strongest accounts don’t “do testing” once in a while. They operate on a rhythm.

A practical cadence usually includes:

- A creative backlog: Maintain a running list of angles, hooks, objections, creators, and offer framings to test.

- A test calendar: Launch new rounds on a fixed schedule rather than waiting until performance collapses.

- A result log: Record the hypothesis, variable, outcome, and interpretation for every test.

- A promotion rule: Define which ads move from screening to validation to scale.

- A retirement rule: Remove fatigued or repeatedly weak variants without emotional attachment.

That system creates continuity. Creative strategy improves because each round starts from accumulated evidence instead of fresh opinion.

Don’t confuse cadence with volume

More tests isn’t always better.

If your team launches too many weakly structured experiments, you create busier dashboards, not better learning. A good cadence balances throughput with clarity. Every test should answer a real question and feed the next round.

The goal isn’t endless testing. The goal is an always-on learning loop.

A simple operating habit helps. After each round, write one sentence for each variant: what was tested, what happened, and what you think caused it. That tiny discipline compounds. Over time, your account develops its own playbook for angles, creators, formats, and offers.

That’s when testing stops feeling reactive. It becomes the engine behind creative production, media buying, and scale.

Conclusion From Testing to Winning

Video ad testing works when it stops being improvisation.

The strongest teams don’t rely on random creative batches and quick judgments. They start with hypotheses grounded in what the market already responds to. They design tests that can survive statistical scrutiny. They screen broadly, validate narrowly, and analyze results in a way that produces the next brief, not just a report.

That alone will improve how you test video ad creatives.

The bigger shift is operational. When you break winning ads into reusable components and automate iteration, testing stops being a slow editorial bottleneck. It becomes a learning system. That’s what allows teams to refresh creative faster, respond to fatigue earlier, and keep building from proven angles instead of starting from zero.

Winning creative is rarely one lucky ad. It’s usually the result of a process that keeps producing better bets.

If you want to turn this playbook into an actual workflow, Sovran helps performance teams automate modular video production, generate large batches of testable variants, and organize those assets for faster launch cycles on Meta and TikTok.

Manson Chen

Founder, Sovran

Related Articles

Create Video Ads with AI That Perform in 2026

AI UGC Video: A Guide to Scale Ads on Meta & TikTok