Mastering AI Character Voices for High-Converting Ads

Discover how to use AI character voices to scale ad creative on Meta and TikTok. Learn scripting, voice cloning, and workflow integration for peak performance.


AI character voices are incredibly realistic, synthetic voices made with neural text-to-speech (TTS) tech. They're designed to give your ads a real personality. For performance marketers, this is a game-changer for creating video ads on platforms like Meta and TikTok, letting you slash production costs and timelines compared to hiring voice actors.

Why AI Voices Are Your New Creative Superpower

If you feel like you're trapped in a cycle of insane production costs and creative burnout, AI character voices are your way out. For those of us deep in performance marketing, this technology is way past the robotic novelties of a few years ago. It’s now a core tool for scaling ad creative and finding what works, faster.

This isn't just about saving a few bucks on voice actors. It’s a total strategic shift in how you produce and test your ads. The ability to spit out dozens of voiceover variations in minutes unlocks a level of A/B testing speed that was pretty much impossible before.

From Novelty to Necessity

Synthetic speech has come a long way. Can you imagine the buzz at the 1939 New York World's Fair when Bell Labs showed off the VODER, the world's first electronic speech synthesizer? Fast forward to today, and neural TTS is powering hyper-realistic AI voices that can hook viewers in the first few seconds.

The results speak for themselves: videos with voiceovers can crank up engagement by up to 40% on social media. Meanwhile, using TTS can cut your production costs by a whopping 70-80% compared to hiring traditional voice talent. If you're curious, you can learn more about the history of text-to-speech technology and its evolution.

This efficiency hits on the biggest headaches for modern ad teams:

Speed: You can go from a script to a final audio file in minutes, not days.

Scale: Instantly create hundreds of ad variations to test different hooks, offers, and character personas.

Cost-Effectiveness: Forget massive budgets for creative testing. Now you can do more with less.

The real win with AI voices isn't just cutting a line item from your budget. It's the strategic freedom you get. You can experiment like crazy, figure out what your audience actually responds to, and scale your winning ads faster than ever before.

The Impact on Performance Creative

For media buyers and creative strategists, getting good at AI voices is no longer optional. It's a must-have skill. The right voice can stop the scroll, build a sense of trust, and push people to take action. When you weave AI voices into your workflow, you can craft ads that feel native to platforms like Meta and TikTok and really connect with people.

This frees up your team to focus on what actually moves the needle: the strategy behind the creative. Instead of getting stuck in production hell, you can spend more time digging into performance data and refining your messaging. At the end of the day, that’s what leads to better campaigns and better results.

Finding and Crafting Your Perfect AI Voice

The right AI voice can absolutely make or break your ad, but I see so many marketers just grab the first one that sounds remotely decent. That’s a huge mistake. The goal isn't just to find a voice; it's to find the voice that perfectly embodies your brand's personality and connects with your audience, whether that’s Gen Z on TikTok or B2B pros on Meta.

Before you even start listening to samples, define your voice persona. Don't just think "male" or "female." Is your brand the energetic best friend? The trustworthy expert? The witty storyteller? Write it down. Having this persona documented helps you cut through the noise and filter the massive library of AI voices down to models that actually align with your brand.

Evaluating Potential AI Voices

Once you have that persona locked in, it's time to hold some auditions. You need to listen for more than just clarity—the real gold is in the subtle characteristics that make a performance feel human.

Here are the attributes I always focus on:

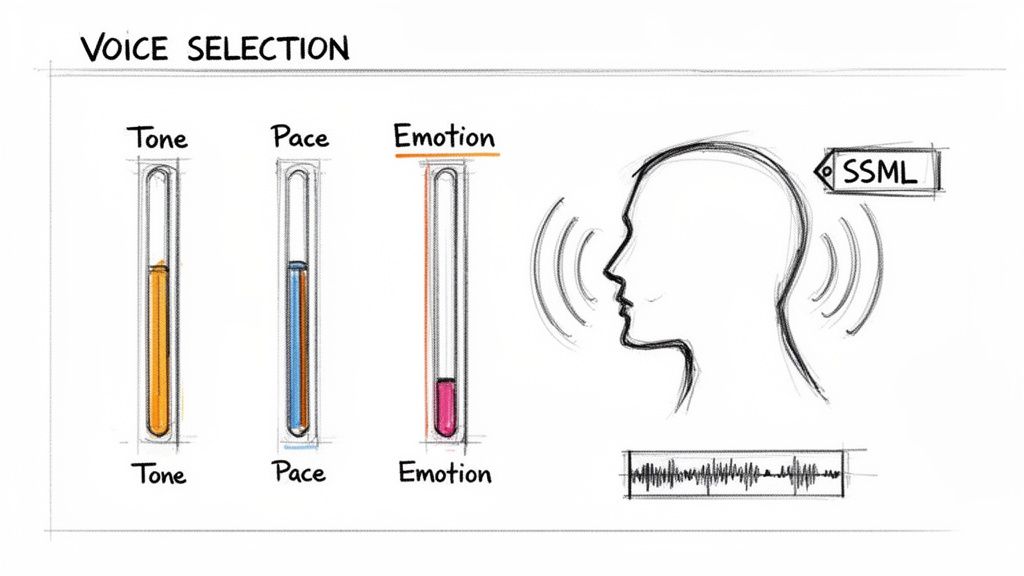

Tone: Does the voice naturally sound upbeat, serious, or calm? A voice that's inherently enthusiastic is going to have a hard time delivering a solemn, serious script in a believable way.

Pace: What's the model's default speaking speed? A faster clip is perfect for high-energy TikTok ads, but a slower, more deliberate pace can build a ton of trust in a B2B Meta campaign.

Emotional Range: How well does the voice handle different emotional cues? Test it with scripts that demand excitement, empathy, or authority. You want to see if it can adapt without sounding forced or robotic.

One of the most common pitfalls I see is choosing a voice that sounds great on its own but completely clashes with the visual creative. You have to test your top candidates against your actual ad footage. A jarring disconnect between what you see and what you hear will kill your ad's performance instantly.

Fine-Tuning Your Voice with SSML

Okay, so you've picked a great base voice. You're only halfway there. The real magic happens when you start directing its performance using SSML (Speech Synthesis Markup Language). This is an XML-based markup standard from the W3C that gives you granular control over the delivery, turning a good voice into a truly great one. Support for individual tags varies by TTS engine, so check what yours accepts.

Think of yourself as a director guiding an actor. Instead of just dumping a block of text into the AI, you use SSML tags to add those little human-like imperfections and moments of emphasis. If you're new to this, you can find some great starter guides on how to leverage AI voiceover features.

Here are a few SSML tricks you can use for immediate impact:

Inject Pauses: Use <break time="0.5s"/> to add a slight pause right before a key benefit or after asking a question. That half-second of silence gives the viewer a moment to process what you just said and builds anticipation.

Emphasize Words: Wrap your call-to-action in <emphasis level="strong"> tags. This makes phrases like "Download Now" or "Shop the Sale" pop with more vocal energy.

Adjust Pitch and Rate: The <prosody> tag lets you tweak the speed and pitch. For instance, you can slow things down for a critical detail (<prosody rate="slow">) or raise the pitch for an exciting announcement (<prosody pitch="high">).

By getting comfortable with these simple SSML commands, you can craft AI character voices that don't just speak your script—they perform it. This level of detail is what separates robotic, forgettable ads from compelling creative that feels authentic and actually drives people to act.
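To make the SSML tags from this section concrete, here's a minimal Python sketch that stitches a pause, a slowed-down detail, and an emphasized call-to-action into one document. The function name `build_ad_ssml` and the sample lines are my own placeholders, not part of any tool's API. The `<speak>` root element comes from the SSML standard, but your TTS engine may support only a subset of these tags.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape


def build_ad_ssml(hook: str, benefit: str, cta: str) -> str:
    """Assemble a short ad read using break, prosody, and emphasis tags."""
    return (
        "<speak>"
        # Raise the pitch for the opening hook.
        f'<prosody pitch="high">{escape(hook)}</prosody>'
        # Half-second pause right before the key benefit.
        '<break time="0.5s"/>'
        # Slow down for the detail that matters most.
        f'<prosody rate="slow">{escape(benefit)}</prosody>'
        '<break time="0.5s"/>'
        # Stress the call-to-action.
        f'<emphasis level="strong">{escape(cta)}</emphasis>'
        "</speak>"
    )


ssml = build_ad_ssml(
    "Tired of tracking receipts?",
    "Our new app tracks your expenses automatically.",
    "Download it today.",
)
ET.fromstring(ssml)  # raises ParseError if the markup is not well-formed
print(ssml)
```

Escaping the script text matters more than it looks: a stray "&" in your ad copy would otherwise produce invalid markup that many engines reject.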

Scripting That Makes AI Voices Sound Human

You can have the most advanced AI voice model on the planet, but it's completely wasted on a flat, lifeless script. Let's be clear: writing for an AI narrator isn't the same as writing for a human actor. You have to be far more explicit with your directions, essentially embedding the performance cues directly into your workflow.

The script itself has to be built for the brutal, fast-paced world of short-form video. That means a hard-hitting, undeniable hook in the first three seconds and a relentless drive toward a crystal-clear call-to-action. Don't meander. Every single word has to earn its place.

But the real magic for crafting believable AI character voices happens in the context prompts you feed the model right alongside the script. These prompts are your director's notes. They tell the AI not just what to say, but how to say it.

Directing Emotion and Intent with Prompts

If you give a voice model vague instructions, you'll get generic, forgettable results. The key is to be hyper-specific. Instead of just telling the AI to "sound happy," you need to paint a rich, detailed picture that guides its entire performance.

Think about layering your prompts with elements like these:

Emotion + Modifier: Don't just say "excited." Try something like, "sound excited but also trustworthy and reassuring." This simple bit of nuance stops the voice from becoming a one-dimensional caricature.

Audience Persona: Who is the AI actually talking to? Add context like, "You're speaking to a skeptical, busy marketer who thinks they've seen it all before." This pushes the AI to adopt a more direct, no-nonsense tone.

Contextual Scenario: Give the AI a role to play. Something as simple as, "Imagine you're sharing a game-changing secret with a close friend" can transform a robotic delivery into something intimate and conspiratorial.
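If you want those three layers to be reusable across a team, one option is to store them as structured fields and build the prompt from them. This is only a sketch. The `VoiceDirection` class and its field names are hypothetical, and the sentence template is just one way to phrase it.

```python
from dataclasses import dataclass


@dataclass
class VoiceDirection:
    emotion: str    # the core feeling, e.g. "excited"
    modifier: str   # the nuance that keeps it from being one-note
    audience: str   # who the voice is talking to
    scenario: str   # the role or situation to imagine

    def to_prompt(self) -> str:
        """Join the three layers into one context prompt."""
        return " ".join([
            f"Sound {self.emotion} but also {self.modifier}.",
            f"You're speaking to {self.audience}.",
            f"Imagine {self.scenario}.",
        ])


direction = VoiceDirection(
    emotion="excited",
    modifier="trustworthy and reassuring",
    audience="a skeptical, busy marketer who thinks they've seen it all before",
    scenario="you're sharing a game-changing secret with a close friend",
)
print(direction.to_prompt())
```

Keeping the layers separate makes it easy to swap just the audience or just the scenario when you test a new angle.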

A great script tells the AI what to say. A great prompt tells the AI who to be. This distinction is the difference between an ad that gets skipped and an ad that converts.

To get your AI character voices to deliver truly authentic content, you first have to master how to humanize AI-generated text. This goes way beyond just the words—it's about coaxing a real performance out of the model. For example, a script for a charity needs an empathetic tone that a generic AI will almost always miss without specific, human-centric direction.

Prompting Framework for AI Voice Performance

Crafting effective prompts is a skill. Vague inputs lead to bland, robotic outputs, while specific, layered instructions create nuanced and engaging performances. Here’s a breakdown of how to approach it.

| Element | Ineffective Prompt (Vague) | Effective Prompt (Specific & Actionable) | Expected Outcome |
|---|---|---|---|
| Emotion | "Be happy." | "Sound genuinely thrilled but a little surprised, like you just found a $20 bill in an old jacket." | A more authentic, relatable excitement with a natural, less forced feel. |
| Pacing | "Speak a bit faster." | "Deliver the lines with a sense of urgency, quickening the pace on the second sentence to build momentum." | A dynamic delivery that emphasizes key points through speed variation, not just a uniform faster tempo. |
| Tone | "Use a professional tone." | "Adopt the tone of a trusted advisor speaking to a colleague: knowledgeable, confident, but not condescending." | A voice that sounds authoritative and credible without being cold or distant. |
| Persona | "Talk like a tech expert." | "You're a friendly tech enthusiast explaining a complex topic to a friend who is new to the subject." | A warm, approachable, and easy-to-understand delivery, avoiding overly technical jargon or a robotic cadence. |

By thinking through these elements, you move from simply generating audio to directing a performance. This level of detail is what separates AI-generated content from content that truly connects.

From Flat to Captivating: A Real Example

Let's look at a quick before-and-after to see this in action.

The Script: "Our new app tracks your expenses automatically. It saves you time and money. Download it today."

Before (No Prompt): The AI will read this with a completely flat, neutral tone. Sure, it's informative, but it's also instantly forgettable. It has zero personality and will get buried on a crowded social feed.

After (With a Specific Prompt):

Prompt: "Sound like a busy but organized friend who just discovered an amazing life hack. You're slightly breathless with excitement because you can't believe how much time this has saved you. You're talking to another busy friend who you know struggles with their finances."

With this prompt, the AI's delivery is completely different. The pace naturally quickens, the tone becomes enthusiastic and relatable, and key phrases like "automatically" and "saves you time" will carry more punch. This is how you create an AI voice that feels genuinely human and persuasive.

When you're putting your scripts together, you can also pull inspiration from proven formats. You might find our guide on how to write a script for a radio commercial useful, as many of the core principles of persuasion and pacing apply here, too.

The Smart Guide To Voice Cloning And Compliance

Imagine having a library of on-brand AI character voices ready for every campaign. It’s tempting, but voice cloning carries real-world risks—legal, ethical, and reputational. In my years running video ads, I’ve seen teams rush this step and end up with robotic voices and emergency calls to lawyers. This guide shows you how to get it right from the start.

You can’t build a crystal-clear clone on top of grainy conference-call audio. A treated vocal booth, a high-end condenser mic, and consistent settings are non-negotiable. Without them, your AI model won’t pick up the nuances that make a voice feel alive.

I remember a recent campaign for a D2C skincare brand where we spent days cleaning up hiss and leveling volume. The payoff was huge—a cloned voice so natural that engagement jumped by 27%.

What Makes A High-Quality Audio Sample

When you’re training your model, every hiss or echo works against you. Keep these essentials in mind:

Clean And Clear: No background chatter, hum, or reverb. A sound-treated room or vocal booth is ideal.

Sufficient Length: Record 15–30 minutes of mono (single-channel) audio. Include a range of emotions (excitement, calm, curiosity) to give the AI versatility.

Uniform Settings: Stick to one microphone and one preamp setup. Switching gear mid-session can confuse the model.
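A couple of the essentials above can be checked automatically before you upload anything. Here's a rough sketch using Python's built-in `wave` module, assuming your recordings are uncompressed WAV files. The function names are hypothetical, and the 15-minute floor is just the guidance from this list. It can't judge hiss or reverb, so you still need to listen.

```python
import wave


def check_sample(path: str, min_minutes: float = 15) -> list[str]:
    """Return a list of problems found in a WAV file; empty means it passed."""
    with wave.open(path, "rb") as wav:
        channels = wav.getnchannels()
        duration_min = wav.getnframes() / wav.getframerate() / 60

    problems = []
    if channels != 1:
        problems.append(f"expected mono audio, found {channels} channels")
    if duration_min < min_minutes:
        problems.append(f"too short: {duration_min:.1f} min (want at least {min_minutes})")
    return problems


def consistent_settings(paths: list[str]) -> bool:
    """True if every file shares one sample rate and sample width."""
    settings = set()
    for path in paths:
        with wave.open(path, "rb") as wav:
            settings.add((wav.getframerate(), wav.getsampwidth()))
    return len(settings) <= 1
```

Calling `check_sample("session_01.wav")` on a hypothetical recording returns an empty list when the file is mono and long enough. A mismatch in `consistent_settings` is a hint that something in the recording chain changed mid-session.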

Garbage in, garbage out. Invest time in your audio foundation and your cloned voice will sound natural—not mechanical.

Navigating The Legal And Ethical Minefield

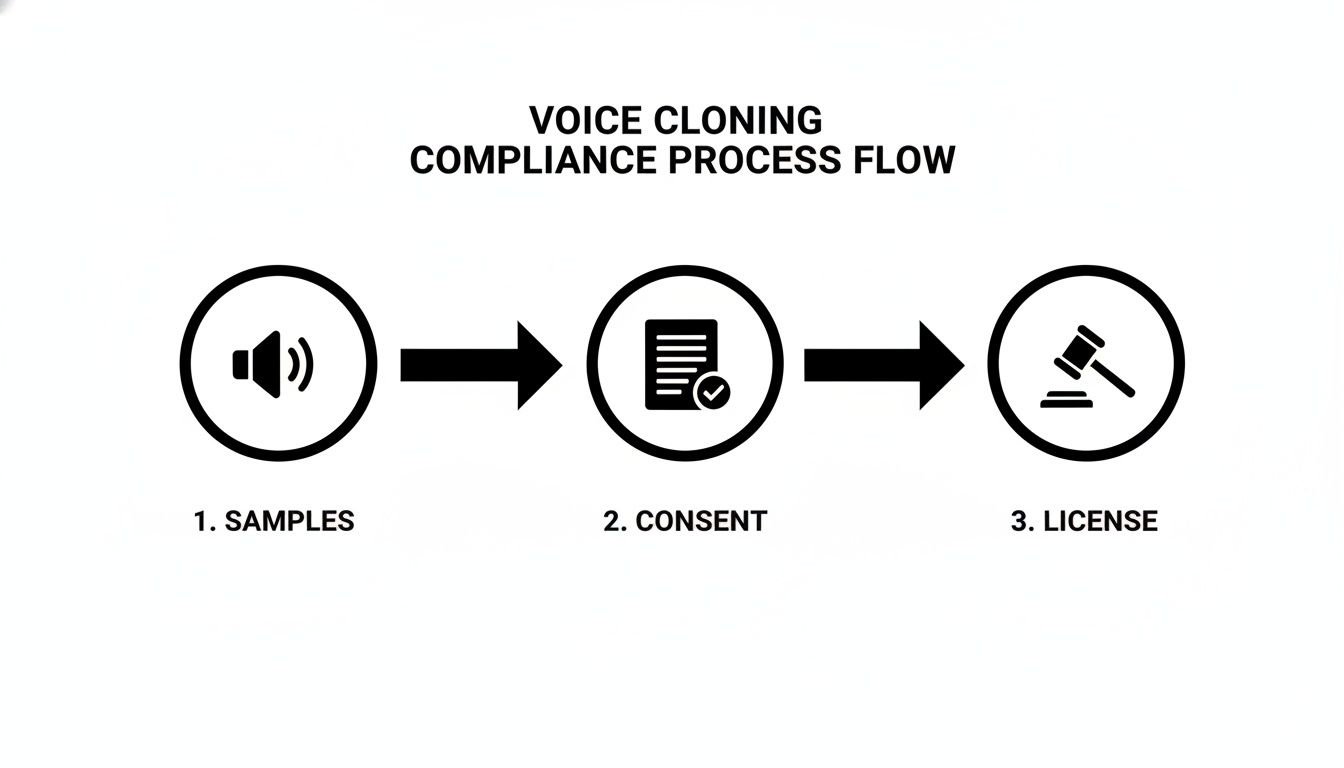

Cloning someone’s voice without airtight consent is a recipe for disaster. A casual “Sure, go ahead” won’t hold up in court. You need a robust licensing agreement, drafted with legal counsel, that leaves no room for misunderstanding.

Here’s what it should include:

Scope Of Use: Define exactly where the voice can appear—Meta ads, TikTok spots, internal demos.

Duration: One year, five years, or in perpetuity? Be crystal clear about the time frame.

Content Restrictions: Exclude political messaging or controversial topics unless explicitly approved.

Compensation: Outline flat-fee buyouts, royalty structures, or a hybrid payment model.
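Once the agreement is signed, it helps to record its limits somewhere your production workflow can check them. The sketch below is a bookkeeping aid that sits alongside the contract, not a substitute for it. The `VoiceLicense` class, its fields, and the channel and topic labels are all hypothetical, and compensation terms are left out because they don't affect whether a given ad is allowed.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class VoiceLicense:
    talent: str
    allowed_channels: set[str]          # scope of use
    expires: Optional[date]             # None means in perpetuity
    restricted_topics: set[str] = field(default_factory=set)

    def permits(self, channel: str, topic: str, on: date) -> bool:
        """Check a planned use against scope, duration, and content limits."""
        if channel not in self.allowed_channels:
            return False
        if self.expires is not None and on > self.expires:
            return False
        return topic not in self.restricted_topics


license_ = VoiceLicense(
    talent="Example Talent",
    allowed_channels={"meta_ads", "tiktok_ads", "internal_demo"},
    expires=date(2026, 12, 31),
    restricted_topics={"political"},
)
print(license_.permits("meta_ads", "product_launch", date(2026, 6, 1)))
```

Running a check like this before a batch render is a cheap way to catch an expired license or an out-of-scope channel.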

Drawing up this contract protects your brand, respects the talent, and lets you move forward confidently. Stay sharp against voice cloning scams and make sure your processes align with our data privacy and legal policies.

Building Your AI-Powered Ad Creative Workflow

Theory is great, but putting it into practice is where the real results are. So, let’s walk through a tangible workflow for plugging AI character voices into a rapid, scalable ad production system. This is the exact playbook performance teams use to ditch slow, one-off video production and build a high-velocity creative testing engine.

It all starts with centralizing your assets. You need a single source of truth for your creative. Platforms like Sovran let you upload all your raw video clips, and then AI steps in to tag everything with natural language. That shot of a person smiling at their phone? It's now instantly searchable with a simple query like "happy customer using app." This foundation is what makes speed possible.

From there, you start to generate, save, and organize your brand’s unique AI voiceovers. Once you’ve dialed in a voice that perfectly captures your brand persona, you can save it—along with its most effective context prompts—as a reusable asset. This is a game-changer for consistency. It ensures every ad maintains your brand's voice, no matter who on your team is building it.

Assembling Ad Variations at Scale

With your visual and audio assets tagged and ready to go, assembling ad variations becomes incredibly fast. Think of it less like video editing and more like using creative building blocks. You can test a ton of combinations in minutes.

Hook Testing: Take five different video hooks and pair them all with the same body, call-to-action, and AI voiceover.

Voice Persona Testing: Use the exact same script and visuals but test two different AI character voices—maybe one is energetic and upbeat, the other calm and authoritative.

CTA Testing: Keep the hook and body the same, but generate three different voiceovers for the call-to-action to see which phrasing drives the most clicks.
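The "building blocks" idea maps neatly onto a Cartesian product: hold most components fixed and vary one. Here's a small sketch of how the hook test and the voice persona test above could be expressed. The component labels are placeholders for whatever IDs your asset library uses.

```python
from itertools import product

hooks = [f"hook_{i}" for i in range(1, 6)]
bodies = ["body_main"]
ctas = ["cta_download"]
voices = ["voice_energetic"]


def build_variants(hooks, bodies, ctas, voices):
    """Every combination of the supplied components, one dict per ad."""
    return [
        {"hook": h, "body": b, "cta": c, "voice": v}
        for h, b, c, v in product(hooks, bodies, ctas, voices)
    ]


# Hook test: five hooks, everything else held constant.
hook_test = build_variants(hooks, bodies, ctas, voices)

# Voice persona test: one hook, two voices.
voice_test = build_variants(hooks[:1], bodies, ctas, ["voice_energetic", "voice_calm"])

print(len(hook_test), len(voice_test))  # 5 2
```

Passing more than one option for several components at once multiplies the variant count quickly, which is exactly why it pays to vary one list at a time.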

The goal is to stop thinking in terms of single "video ads" and start thinking in terms of "creative components." When you can mix and match hooks, body segments, and voiceovers on the fly, you unlock a level of testing velocity that manual editing simply can't match.

Modern AI character voices are now blending text-to-speech with recognition capabilities, opening the door for really interesting interactive ad experiences, like dynamic UGC testimonials. The performance lift we're seeing is huge. On Meta and TikTok, creatives using synthetic voices are seeing click-through rates jump by 25-35%, and about 70% of top-performing campaigns are using them for A/B testing at scale. This is all powered by recognition accuracy hitting 99% in some tools, which allows for real-time personalization that keeps creative from going stale. You can learn more about the evolution of voice recognition technology and its impact.

Batch Rendering and Final Touches

The final piece of this puzzle is rendering. Instead of exporting videos one by one in an editing tool like CapCut, you can queue up dozens or even hundreds of variations for batch rendering. Systems designed for this can automatically sync your chosen AI voiceover with the video and spit out perfectly timed subtitles.

But before you even get to rendering a cloned voice, there are critical compliance steps you can't ignore: gather high-quality audio samples, secure explicit consent, and put a clear license in place. That flow is absolutely non-negotiable for protecting your brand.

This whole automated workflow, from asset tagging to bulk rendering, compresses a process that used to take days into just a few minutes. For performance teams, this means more time spent analyzing data and way less time getting bogged down in manual production. Ultimately, that's how you find your winning ads faster.

Common Questions About AI Voices in Ads

As more performance marketers start using AI character voices, a lot of practical questions pop up. It's one thing to talk about the theory, but actually putting it into practice means figuring out brand consistency, avoiding common pitfalls, and knowing how to test these new assets effectively.

Let's dive into the questions I hear most often and give you some clear, actionable answers. Getting this right is the difference between an ad that feels genuinely human and one that just screams "robot."

How Do I Keep My AI Character Voice On-Brand?

Brand consistency is everything, and your audio identity is a massive part of that. The last thing you want is a different vocal persona in every ad—it just confuses your audience and weakens your brand.

The first step is to create a simple "Voice Brand Guideline." Think of it as your north star for audio. This document should define your sonic persona (are you a helpful expert, an energetic friend, a witty authority?), outline the key emotional tones you'll use, and set the ideal pacing for your ads.

When you're picking a base voice, find one that already feels like a natural fit for that persona. For the ultimate level of control, you can even clone a brand-approved voice actor. Just make sure you secure the full legal rights first. Use a platform that lets you save these specific voice models and prompts so everyone on your team can generate on-brand audio, creating a consistent experience across all your campaigns.

The smartest brands treat their AI voice with the same care they give their visual logo or color palette. It’s not just a cool feature; it’s a core piece of your brand identity that builds recognition and trust.

What Are the Biggest Mistakes to Avoid With AI Voices?

The most common mistake is also the most damaging: using a generic, robotic-sounding voice that makes viewers instantly tune out. This usually happens when teams get lazy and skip the fine-tuning step. You absolutely have to use SSML to add natural pauses and inflections. A half-second pause right before you drop a key benefit can make a world of difference.

Another huge pitfall I see all the time is a script-voice mismatch. I’ve seen ads with a super upbeat, high-energy voice trying to deliver a serious, empathetic message. It creates this weird, jarring disconnect that completely kills the ad's credibility.

Finally, never, ever neglect the legal side of voice cloning. Failing to get explicit, written consent is a massive legal and brand risk. Make transparent, comprehensive agreements with any voice talent a top priority to protect everyone involved.

How Can I A/B Test AI Voices for Better Performance?

Systematic testing is how you win. The golden rule here is to isolate one variable at a time so you can get clean, reliable data.

Here’s a practical testing framework you can steal:

Test Voice Personas First: Run the exact same video creative and script, but test two totally different voice personas. Think Male vs. Female, or Energetic vs. Calm. This tells you which overall style clicks with your audience.

Test Hooks Second: Once you have a winning voice style, use that voice to test three different script hooks against the same video body and CTA.

Optimize the CTA Last: With the winning hook and voice locked in, test two or three variations of your call-to-action to see which phrasing drives the most clicks.

Use a platform that can bulk render these variants and push them to your ad accounts with clear naming conventions (e.g., Campaign_VoiceA_Hook1_CreativeX). Then, dig into metrics like Hook Rate, CTR, and CPA to find the combinations that actually move the needle.
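Here's a small sketch of the naming convention and the three metrics, in case you want to compute them yourself from exported data. The function names are hypothetical. Hook Rate definitions also vary between teams and platforms: I'm assuming 3-second video views divided by impressions, so adjust it to match how your reports define it.

```python
from typing import Optional


def variant_name(campaign: str, voice: str, hook: int, creative: str) -> str:
    """Follow the Campaign_VoiceA_Hook1_CreativeX pattern."""
    return f"{campaign}_Voice{voice}_Hook{hook}_Creative{creative}"


def ad_metrics(impressions: int, three_sec_views: int, clicks: int,
               conversions: int, spend: float) -> dict[str, Optional[float]]:
    """Hook rate and CTR as fractions of impressions; CPA in currency units."""
    return {
        "hook_rate": three_sec_views / impressions,
        "ctr": clicks / impressions,
        # CPA is undefined until the ad has at least one conversion.
        "cpa": spend / conversions if conversions else None,
    }


name = variant_name("Spring", "A", 1, "X")
stats = ad_metrics(impressions=10_000, three_sec_views=3_000,
                   clicks=150, conversions=10, spend=200.0)
print(name, stats)
```

Because the variable under test is encoded in the name, you can group results by voice or hook later without a separate lookup table.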

Ready to scale your ad creative and find winning campaigns faster? Sovran automates your entire video ad workflow, from asset management to bulk rendering. Start your 7-day free trial and see the difference.

Manson Chen

Founder, Sovran
